Retrieval-Augmented Generation for Factual Large Language Model Outputs

- Mark Chomiczewski

- 17 March 2026

Large language models (LLMs) are powerful. They can write essays, answer questions, and even draft emails. But they also make things up, and they do it confidently. Ask an LLM about the latest iPhone features or last week’s stock market moves, and it might give you a detailed, plausible answer that’s completely wrong. This isn’t an occasional bug. It’s a structural limitation. An LLM has no knowledge of anything that happened after its training data was collected. It doesn’t know today’s news, today’s products, or today’s facts. It only knows what it saw before a fixed cutoff date.

That’s where Retrieval-Augmented Generation (RAG) comes in. RAG doesn’t try to fix the model by retraining it. It doesn’t ask the model to memorize everything. Instead, it gives the model access to real-time, accurate information right when it needs it. Think of it like a student who’s allowed to look up facts during an exam. They still have to understand the material, but now they’re not guessing.

How RAG Works: A Simple Four-Step Process

RAG follows a clear, repeatable flow. It’s not magic. It’s engineering.

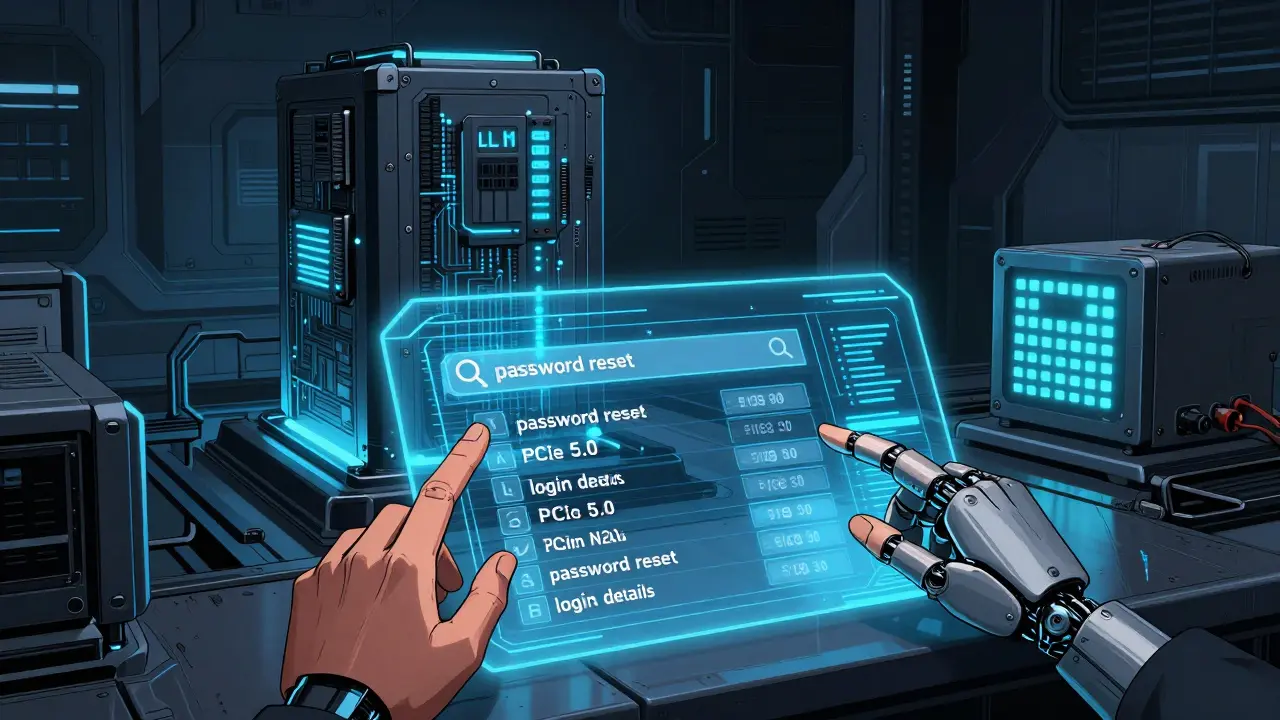

First, ingestion. You take your trusted data (company manuals, product specs, legal documents, research papers) and feed it into a system. This isn’t about training the LLM. It’s about storing the data somewhere the LLM can reach it. Usually, that means a vector database like Pinecone or Qdrant. Each piece of text, whether it’s a paragraph or a bullet point, is turned into a numerical fingerprint called an embedding. These embeddings capture meaning, not just keywords. The phrases "How do I reset my password?" and "I forgot my login details" might look different, but their embeddings will be close because they express the same need.
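Here is a minimal sketch of the ingestion step, under some loud assumptions. The `embed` function below is a toy stand-in that just counts words, so it does not capture meaning the way a real embedding model does. The `ingest` helper, the record layout, and the 40-word chunk size are all illustrative choices, not the API of Pinecone, Qdrant, or any other product.

```python
import math
import re
from collections import Counter

def chunk_text(text, max_words=40):
    """Split text into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def embed(text):
    """Toy stand-in for an embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def ingest(documents, max_words=40):
    """Return one record per chunk, holding its id, text, and vector."""
    index = []
    for source, text in documents.items():
        for n, chunk in enumerate(chunk_text(text, max_words)):
            index.append({"id": f"{source}#{n}", "text": chunk, "vector": embed(chunk)})
    return index
```

In a real pipeline you would swap `embed` for a call to an embedding model and write the records to a vector database instead of a Python list. The shape of the step stays the same: split, embed, store.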

Second, retrieval. When a user asks a question, the system turns that question into an embedding too. Then it searches the database for the top few chunks that match most closely. This isn’t Google-style keyword matching. It’s semantic search. It finds what’s meaningfully related, not just what contains the exact words. Some systems even combine this with keyword search. Why? Because sometimes you need the exact term "PCIe 5.0" to get the right answer, not just something that sounds similar.
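To make the retrieval step concrete, here is a small sketch of top-k search by cosine similarity. The three-dimensional vectors are made up for illustration (real embeddings have hundreds or thousands of dimensions), and the chunk ids are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query_vec, index, k=3):
    """Return the k (score, chunk_id) pairs most similar to the query."""
    scored = [(cosine(query_vec, vec), chunk_id) for chunk_id, vec in index.items()]
    return sorted(scored, reverse=True)[:k]

# Made-up vectors standing in for stored chunk embeddings.
index = {
    "reset-password": [0.9, 0.1, 0.0],
    "billing-faq":    [0.1, 0.9, 0.1],
    "login-help":     [0.8, 0.2, 0.1],
}
query = [1.0, 0.1, 0.0]  # pretend embedding of "I forgot my login details"
results = top_k(query, index, k=2)
```

With these numbers, the two account-related chunks rank above the billing chunk. A vector database does the same job with approximate nearest-neighbor indexes so it stays fast over millions of chunks.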

Third, augmentation. The system takes the top three to five retrieved chunks and places them in the prompt alongside the original question. It tells the LLM: "Here’s what we know. Answer based only on this." This is the key step. The model is no longer relying on memory alone. It’s working from a fact sheet.
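The augmentation step is mostly string assembly. The sketch below shows one way to do it. The function name `build_prompt`, the chunk fields `source` and `text`, and the exact instruction wording are assumptions for illustration, so treat it as a starting template and not a recommended prompt.

```python
def build_prompt(question, chunks):
    """Wrap retrieved chunks around the question with a grounding instruction."""
    context = "\n\n".join(
        f"[{i}] (source: {c['source']})\n{c['text']}"
        for i, c in enumerate(chunks, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say \"I don't know\". "
        "Cite the numbered sources you used.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

Numbering the chunks gives the model something short to cite, which makes the final answer easier to check against its sources.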

Finally, generation. The LLM reads the question and the retrieved facts. Then it writes a response. Because it’s grounded in real data, the answer is more likely to be accurate. And if it cites a source, such as "According to the 2026 Apple Support Guide, page 14", you can check it. Fewer "I think" and "It’s likely" answers. More facts you can verify.

Why RAG Beats Fine-Tuning

You might wonder: Why not just retrain the model with new data? That’s called fine-tuning. And yes, fine-tuning works. But it’s expensive. It often needs thousands of labeled examples. It can take days of GPU time. And once you’re done, the model’s knowledge is frozen again. Two weeks later, you’re back to square one.

RAG is different. You update the data source. You re-index the vectors. That can take minutes. No retraining, only a small indexing cost, and no model downtime. If your company releases a new product tomorrow, you can have RAG answering questions about it by lunchtime. Fine-tuning can’t do that. RAG can.
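To show why updates are cheap, here is a sketch of an index that only re-embeds documents whose text has changed. `KnowledgeBase` is a hypothetical in-memory stand-in for a vector database, and `embed` is whatever embedding function you plug in. Neither reflects a specific product’s API.

```python
import hashlib

class KnowledgeBase:
    """In-memory stand-in for a vector database."""

    def __init__(self, embed):
        self.embed = embed   # callable: text -> vector
        self.records = {}    # doc_id -> {"text", "digest", "vector"}

    def upsert(self, doc_id, text):
        """Insert or replace a document; return True if it was (re-)embedded."""
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        existing = self.records.get(doc_id)
        if existing and existing["digest"] == digest:
            return False  # unchanged, so skip the embedding call
        self.records[doc_id] = {"text": text, "digest": digest, "vector": self.embed(text)}
        return True

    def delete(self, doc_id):
        """Remove a document so it can no longer be retrieved."""
        self.records.pop(doc_id, None)
```

The LLM itself is never touched. A new product page becomes answerable as soon as its chunks are upserted.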

And here’s the kicker: RAG lets you use the same LLM for multiple domains. One model. Ten different knowledge bases. Customer support for your SaaS product? Use one vector database. Internal HR policy? Use another. Switch contexts without switching models. That’s flexibility you can’t get with fine-tuning.

Stopping Hallucinations at the Source

LLMs hallucinate because they’re trained to produce plausible text, not to verify it. They don’t know when they’re wrong. They’ve seen millions of answers that sound right. So they guess. And their guesses sound convincing.

RAG stops this by cutting off the guessing. If the retrieved data doesn’t mention something, the model is instructed not to invent it. "I don’t know" becomes a valid answer. And when the model does know, it can say why. "Based on the latest documentation from NVIDIA, the RTX 5090 has 32GB of GDDR7 memory." That’s verifiable. That’s trustworthy.
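One simple way to make "I don’t know" a real outcome is to gate generation on retrieval quality. The sketch below assumes retrieval returns `(score, text)` pairs and that `generate` is your own function that calls the LLM. The `min_score` value of 0.75 is an arbitrary placeholder that would need tuning against your own data.

```python
def answer_or_abstain(scored_chunks, generate, min_score=0.75):
    """Call the LLM only when at least one chunk clears the similarity threshold.

    scored_chunks: list of (score, text) pairs from retrieval.
    generate: callable that takes a list of evidence texts and returns an answer.
    """
    evidence = [text for score, text in scored_chunks if score >= min_score]
    if not evidence:
        return "I don't know."
    return generate(evidence)
```

This complements the prompt instruction and does not replace it. The threshold catches the case where nothing relevant was found, and the instruction tells the model to stay within what was found.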

Some companies using RAG for customer service report 40-60% fewer support tickets. Why? Because users get correct answers the first time. No more "I called support and they told me X, but the website says Y." RAG makes the answer consistent, accurate, and traceable.

Hybrid Search: More Than Just Vectors

Not all information is best found with embeddings. Sometimes, you need exact matches. "What’s the model number for the 2026 MacBook Pro?" You don’t want "MacBook Pro 2025" or "Apple laptop 2026." You want the exact code: "MacBook Pro M3 Max, 2026 Edition."

This is where hybrid search shines. It runs two searches at once: one using vector embeddings, one using keyword matching. Then it combines the results. The system might take the top 3 from each and merge them into a top 5. This catches both semantic matches and exact terms. It’s especially useful in legal, medical, or technical fields where precision matters.
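There are several ways to combine the two result lists. The sketch below uses reciprocal rank fusion (RRF), a common merging technique that this article doesn’t name, so treat it as one option among several. The constant `k=60` is the conventional default, and the document ids are made up.

```python
def reciprocal_rank_fusion(rankings, k=60, top_n=5):
    """Merge ranked lists of ids: each list adds 1 / (k + rank) to an id's score."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties are broken alphabetically for a stable order.
    ordered = sorted(scores, key=lambda d: (-scores[d], d))
    return ordered[:top_n]

vector_hits = ["doc-a", "doc-b", "doc-c"]   # top 3 from semantic search
keyword_hits = ["doc-d", "doc-a", "doc-e"]  # top 3 from keyword search
merged = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

Here `doc-a` comes out on top because both searches found it. RRF only needs ranks, so you never have to reconcile cosine similarities with keyword scores that live on different scales.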

Some RAG systems even rewrite the query before searching. If someone asks, "How do I fix my printer?" the system might use what it already knows about the user’s device and recent errors to expand it to "troubleshoot printer error code E02, HP OfficeJet Pro 9025." That small change can mean the difference between a useless answer and a perfect one.
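Production systems often use an LLM to rewrite queries, but the idea can be sketched with plain rules. The example below assumes a `session` dictionary with hypothetical keys `device_model` and `error_code`. Where that context comes from (a user profile, earlier chat turns, device telemetry) depends on your system.

```python
def rewrite_query(question, session):
    """Append known specifics from the session so retrieval has concrete terms to match."""
    extras = [session[key] for key in ("device_model", "error_code") if session.get(key)]
    if not extras:
        return question
    return f"{question} ({', '.join(extras)})"
```

For example, `rewrite_query("How do I fix my printer?", {"device_model": "HP OfficeJet Pro 9025", "error_code": "E02"})` returns `"How do I fix my printer? (HP OfficeJet Pro 9025, E02)"`. The added exact terms are what give the keyword side of a hybrid search something to match.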

Agentic RAG: The Next Leap

Early RAG systems were like assistants who fetched documents and handed them to you. They didn’t think. They just retrieved.

Now, we have agentic RAG. This version lets the LLM decide when and how to retrieve. Imagine asking: "Compare the battery life of the iPhone 16 and the Pixel 9." The model doesn’t just grab two specs. It thinks: "I need to find the official battery test results for both. Are they in the same document? Maybe I should check Apple’s site first, then Google’s." Then it retrieves, reads, compares, and answers, all in one go.

Agentic RAG can ask follow-up questions. "You mentioned the iPhone 16. Are you asking about the standard model or the Pro Max?" It can switch sources mid-process. It can even reject a retrieved chunk if it seems unreliable. This isn’t just retrieval anymore. It’s reasoning.
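The control flow behind this can be sketched as a small loop. In the example below, `decide` stands in for the LLM’s planning step and `retrieve` for the search step. Both are hypothetical callables you would supply, and the three action names are illustrative and do not come from any framework.

```python
def agentic_answer(question, decide, retrieve, max_steps=4):
    """Let a decision function choose between searching, asking, and answering.

    decide(question, evidence) returns one of:
      ("search", query), ("ask", clarifying_question), ("answer", text)
    retrieve(query) returns a list of evidence strings.
    """
    evidence = []
    for _ in range(max_steps):
        action, payload = decide(question, evidence)
        if action == "search":
            evidence.extend(retrieve(payload))
        elif action in ("ask", "answer"):
            return action, payload
        else:
            raise ValueError(f"unknown action: {action}")
    # Step budget exhausted without an answer: abstain and do not guess.
    return "answer", "I don't know."
```

The `max_steps` cap matters in practice. Without it, a model that keeps deciding to search one more time would loop forever.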

Real-World Use Cases

- Customer Support: A SaaS company uses RAG to power its chatbot. Answers are pulled from their latest help docs. No more outdated FAQs.

- Legal & Compliance: Law firms use RAG to answer questions about recent court rulings. The system pulls from case law databases updated daily.

- Healthcare: Hospitals use RAG to help doctors interpret patient records. The model references the latest clinical guidelines from the CDC, not its 2023 training data.

- Internal Knowledge: A tech firm lets employees ask questions about product specs. RAG pulls from Confluence, Jira, and engineering wikis. No more "I think it’s in the Google Drive folder..."

What RAG Can’t Fix

RAG isn’t a cure-all. If your data is messy, incomplete, or outdated, RAG will just make a confident answer out of bad facts. Garbage in, garbage out.

It also doesn’t make decisions for you. If you ask, "Should I invest in AI stocks?" RAG can give you facts about market trends, but it can’t tell you what to do. That’s still human judgment.

And if retrieval fails, meaning the system can’t find any relevant chunks, the LLM may fall back to its training data. That’s when hallucinations creep back in. That’s why the quality of your knowledge base matters more than the LLM itself.

The Future: Real-Time, Explainable, Personal

Next, RAG will get smarter. Systems will connect to live data streams-stock prices, weather, traffic-so answers stay current. Imagine asking, "What’s the delay on Flight 223?" and getting a live update.

Explainability will improve too. Future RAG systems will show you exactly which paragraph influenced each sentence in the answer. "This part came from page 8 of the user manual." That’s transparency. That’s trust.
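A rough version of this is possible today with simple word overlap. The sketch below maps each sentence of an answer to the retrieved chunk that shares the most words with it. It is a crude heuristic chosen for illustration and not how any particular product attributes sources, and the chunk ids are hypothetical.

```python
import re

def tokens(text):
    """Lowercased set of alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def attribute(answer, chunks):
    """Pair each answer sentence with the chunk id sharing the most words (None if no overlap)."""
    result = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        if not sentence:
            continue
        words = tokens(sentence)
        best_id, best_overlap = None, 0
        for chunk_id, text in chunks.items():
            overlap = len(words & tokens(text))
            if overlap > best_overlap:
                best_id, best_overlap = chunk_id, overlap
        result.append((sentence, best_id))
    return result
```

Word overlap breaks down when the model paraphrases heavily. A sturdier version would compare sentence and chunk embeddings, but the output keeps the same shape: one source per sentence.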

And personalization? You might get answers based on your role. A manager sees high-level summaries. A technician gets step-by-step repair guides. All from the same system.

RAG isn’t just a tool. It’s a shift in how we think about AI. We don’t need models that know everything. We need models that know how to find what’s true. And that’s exactly what RAG delivers.

Comments

Rahul U.

This is such a clean breakdown of RAG! I’ve been using it in our customer support bot at my job in Bangalore, and the drop in ticket volume has been insane. We went from 300+ daily tickets to under 120. The best part? Users actually trust the answers now. No more ‘but my cousin said…’ or ‘I read it on Reddit.’

Also, love how you mentioned hybrid search. We use it for product specs - exact model numbers matter way more than semantics. One time, someone asked for ‘iPhone 16 Pro’ and got a result for ‘iPhone 15 Pro Max’ because of embeddings alone. Added keyword fallback, and boom - perfect match. Small tweak, huge win.

March 17, 2026 AT 17:31

E Jones

Let me tell you something they don’t want you to know about RAG. The ‘vector databases’? They’re not magic. They’re just glorified Google searches with a fancy name. And who controls the data? Corporations. Big Tech. The same companies that sold us AI that hallucinates in the first place. Now they’re selling us ‘truth’… but only the truth they *allow* you to see.

What if your company’s knowledge base is secretly edited? What if the ‘latest documentation’ from NVIDIA was sanitized to hide a flaw? RAG doesn’t stop hallucinations - it just replaces one lie with another, wrapped in citations that look official. It’s not trust - it’s programmed obedience. And don’t get me started on ‘agentic RAG.’ That’s not intelligence. That’s AI gaslighting you into believing it’s thinking when it’s just following a script written by a lawyer in Palo Alto.

They’re not fixing AI. They’re packaging it. Selling it back to us as a solution. And we’re buying it. Like sheep. Again.

March 18, 2026 AT 03:54

Barbara & Greg

While the technical architecture of RAG is undeniably elegant, one cannot ignore the philosophical implications of outsourcing factual authority to external data sources. The very notion of a language model being ‘grounded’ in retrieved information presupposes an epistemological hierarchy - that truth is external, static, and retrievable, rather than emergent, contextual, and interpretive.

Moreover, the reliance on vector embeddings as proxies for semantic meaning risks reifying linguistic reductionism. The assumption that ‘How do I reset my password?’ and ‘I forgot my login details’ are semantically equivalent ignores the subtle affective and pragmatic dimensions of human communication. Are we not, in our pursuit of accuracy, eroding the richness of human expression?

One must also question the ethics of deploying such systems in healthcare and legal domains, where the stakes of misinterpretation - even minor - are not merely technical, but existential.

March 18, 2026 AT 05:14

selma souza

There is a glaring grammatical error in the original post. The phrase 'they also make things up-confidently' is incorrectly hyphenated. It should be 'they also make things up, confidently.' The absence of a comma after 'up' is a serious punctuation failure that undermines the credibility of the entire article. Additionally, 'PCIe 5.0' is correctly capitalized, but 'GDDR7' should be italicized in technical contexts for consistency with industry standards. And 'RAG' is an acronym - it should be introduced as 'Retrieval-Augmented Generation (RAG)' on first use, not just 'RAG' in bold. These are not nitpicks. They are foundational to professionalism in technical writing.

March 19, 2026 AT 10:35

Frank Piccolo

Look, I get it - RAG sounds cool. But let’s be real. This isn’t innovation. It’s a Band-Aid on a bullet wound. We’re spending millions on vector databases and hybrid search because we built these LLMs wrong in the first place. Why not just train them on up-to-date data? Oh right - because it costs too much. So now we’re just slapping on a glorified Wikipedia lookup and calling it AI.

And don’t even get me started on ‘agentic RAG.’ That’s just the AI pretending it’s thinking so you’ll pay more for it. Meanwhile, China’s building real AI - models that learn continuously without needing a database. We’re overengineering a solution to a problem we created by outsourcing intelligence to tech bros who think ‘embedding’ is a verb.

It’s not the future. It’s a corporate PR stunt dressed up as engineering.

March 19, 2026 AT 14:12

James Boggs

Excellent summary. I’ve implemented RAG in two enterprise systems this year, and the ROI has been clear: faster resolution, fewer escalations, and higher user satisfaction scores. The key is data hygiene - clean, well-structured sources make all the difference. Hybrid search was a game-changer for our technical docs. Also, agree with the point about agentic RAG - it’s the next frontier. We’re testing it for internal IT queries, and the ability to ask clarifying questions before retrieving has cut errors by nearly 70%. Simple, scalable, and trustworthy.

March 19, 2026 AT 18:43