Why BLEU Scores Are Dead: The Shift to LLM-as-a-Judge Metrics in NLP

- Mark Chomiczewski

- 9 May 2026

- 3 Comments

Imagine you hire a writer to draft a press release. You give them a rough outline and two examples of previous releases. They hand you back a document that captures the tone perfectly, uses fresh language, and hits every key point, but it shares almost no exact phrases with your examples. If you graded their work by counting how many words matched your examples word-for-word, you’d fail them. Yet, if a human read it, they’d likely say it’s excellent.

This is exactly the problem we face in natural language processing (NLP) today. For over two decades, we’ve been grading AI systems using BLEU scores, a metric designed for machine translation in 2002. It works by matching n-grams (sequences of words) against reference texts. But as models have evolved from simple translators into complex reasoning engines, BLEU has become obsolete. It punishes creativity and rewards memorization. Now, the industry is shifting toward LLM-as-a-Judge metrics, where one AI evaluates another. This shift isn’t just a trend; it’s a necessity for building systems that actually understand language.

The Legacy Problem: Why BLEU Dominated for So Long

To understand why we’re moving away from BLEU, we first need to look at why it stuck around. When BLEU (Bilingual Evaluation Understudy) was introduced, neural networks were too slow and expensive for most research teams. Researchers needed a metric that was fast, deterministic, and easy to calculate. BLEU delivered on all three fronts.

It operates on a simple principle: compare the generated text to a set of human-written references. It calculates the geometric mean of n-gram precisions, usually for n-grams of one to four words, and multiplies the result by a brevity penalty so that very short outputs cannot score well just by being cautious. If the AI’s output matches the reference vocabulary closely, the score goes up. This made it perfect for early machine translation tasks, where the goal was often to find the closest equivalent phrase in another language.
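To make the mechanics concrete, here is a minimal sketch of sentence-level BLEU in plain Python. It assumes whitespace tokenization and no smoothing, and the function names are made up for this post; production work would normally use an established implementation instead.

```python
import math
from collections import Counter

def ngrams(tokens, n):
    """Count every n-gram (as a tuple) in a list of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def sentence_bleu(candidate, references, max_n=4):
    """Toy BLEU: clipped n-gram precisions, geometric mean, brevity penalty."""
    cand = candidate.split()
    refs = [r.split() for r in references]
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngrams(cand, n)
        # Clip each candidate n-gram by its highest count in any single reference.
        max_ref = Counter()
        for ref in refs:
            for gram, count in ngrams(ref, n).items():
                max_ref[gram] = max(max_ref[gram], count)
        clipped = sum(min(c, max_ref[g]) for g, c in cand_counts.items())
        total = sum(cand_counts.values())
        if total == 0 or clipped == 0:
            return 0.0  # assumption: no smoothing, so one empty precision zeroes the score
        log_precisions.append(math.log(clipped / total))
    # Brevity penalty, using the reference length closest to the candidate length.
    ref_len = min((len(r) for r in refs), key=lambda length: (abs(length - len(cand)), length))
    bp = 1.0 if len(cand) > ref_len else math.exp(1 - ref_len / len(cand))
    return bp * math.exp(sum(log_precisions) / max_n)

exact = sentence_bleu("the cat sat on the mat", ["the cat sat on the mat"])
paraphrase = sentence_bleu("a feline rested upon the rug", ["the cat sat on the mat"])
print(exact, paraphrase)
```

The paraphrase shares only the word "the" with the reference, so it has no matching bigrams and, without smoothing, scores zero even though a human would accept it.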

However, this simplicity came with a hidden cost. BLEU suffers from what researchers call "vocabulary bias." A study by Koehn in 2004 showed that scores could be artificially inflated by 15-20 points simply by mimicking the reference vocabulary, even if the semantic meaning was wrong. In other words, BLEU rewards surface-level similarity, not understanding. As models became smarter, capable of paraphrasing and contextual adaptation, BLEU began penalizing these very strengths.

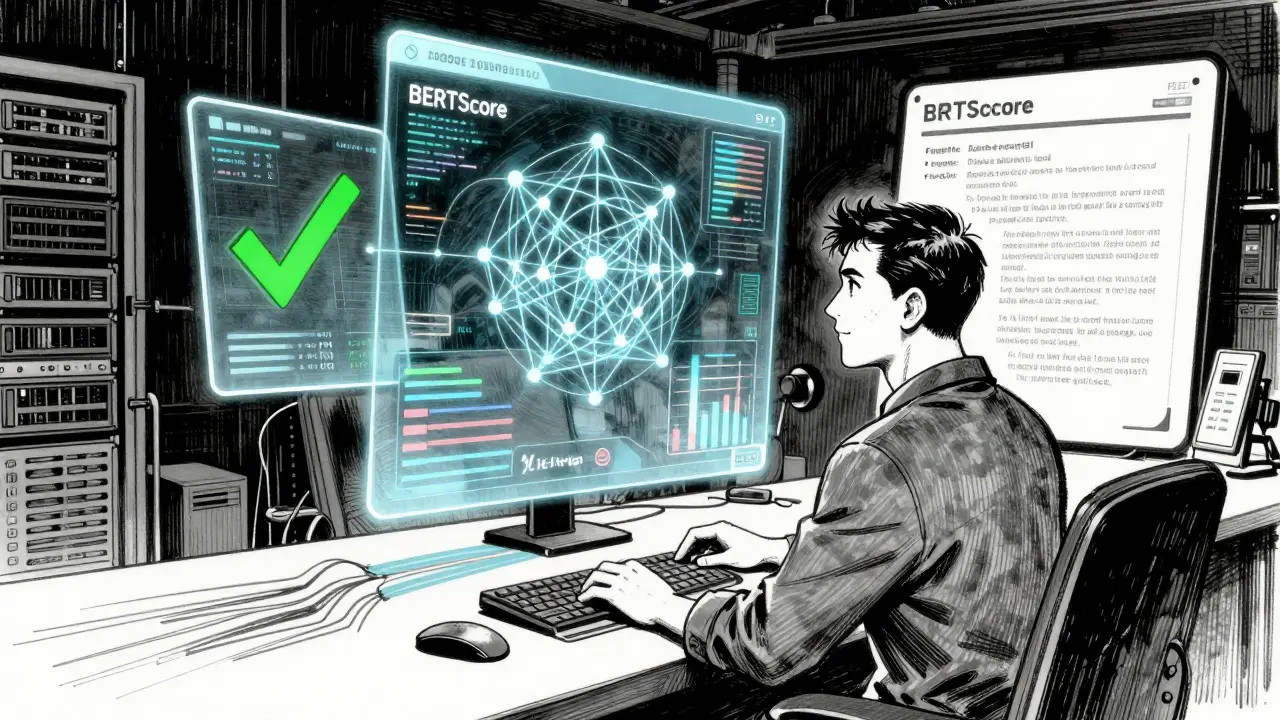

The Rise of Semantic Understanding: Enter BERTScore

As NLP moved beyond translation into summarization, chatbots, and creative writing, the limitations of lexical matching became glaring. We needed metrics that understood meaning, not just words. This led to the rise of embedding-based metrics like BERTScore.

BERTScore uses contextual representations from models like BERT to compare output embeddings. Instead of checking if the word "car" matches "automobile," it checks if the vector representation of those words is close in semantic space. This allows it to recognize synonyms and paraphrases that BLEU misses entirely.
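The matching idea can be sketched without a neural network. The example below assumes a tiny hand-written table of three-dimensional vectors (entirely made up for illustration) in place of real contextual embeddings, and shows greedy cosine matching in both directions combined into an F1 score. It is an approximation of the idea, not the BERTScore library itself.

```python
import math

# Hypothetical toy "embeddings", hand-picked so that synonyms sit close together.
# Real BERTScore derives context-dependent vectors from a pretrained model.
TOY_VECTORS = {
    "car": [0.9, 0.1, 0.0],
    "automobile": [0.85, 0.15, 0.05],
    "red": [0.0, 0.9, 0.1],
    "crimson": [0.05, 0.85, 0.2],
    "banana": [0.0, 0.1, 0.95],
}

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def greedy_f1(candidate, reference):
    """Match each token to its most similar token on the other side, then take F1."""
    cand = [TOY_VECTORS[t] for t in candidate.split()]
    ref = [TOY_VECTORS[t] for t in reference.split()]
    precision = sum(max(cosine(c, r) for r in ref) for c in cand) / len(cand)
    recall = sum(max(cosine(r, c) for c in cand) for r in ref) / len(ref)
    return 2 * precision * recall / (precision + recall)

print(greedy_f1("red car", "crimson automobile"))  # high despite zero shared words
print(greedy_f1("banana", "car"))                   # low: unrelated meaning
```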

While BERTScore is a significant step forward, it still has constraints. It relies on token-by-token embedding matching against reference texts, so it doesn’t fully capture the nuance of longer-range dependencies or structural relationships in complex texts. It’s better than BLEU, but it’s not yet good enough for evaluating open-ended generation tasks where multiple valid answers exist.

| Metric | Type | Human Correlation | Speed/Cost | Best Use Case |
|---|---|---|---|---|
| BLEU | Lexical Matching | Low (~60%) | Fast / Free | Machine Translation Regression |
| ROUGE | Recall-Oriented | Low (~65%) | Fast / Free | Document Summarization |
| BERTScore | Semantic Embedding | Medium (~75%) | Medium / Low Cost | Semantic Similarity Checks |
| LLM-as-a-Judge | Model-Based Reasoning | High (~81.3%) | Slow / API Cost | Nuanced Quality & Factuality |

LLM-as-a-Judge: The New Gold Standard

The most dramatic shift in recent years is the adoption of LLM-as-a-Judge systems. Instead of relying on rigid mathematical formulas, we prompt a powerful language model (like GPT-4o or LLaMA 3.1) to evaluate another model’s output based on specific criteria.

According to Openlayer’s 2026 guide, LLM-as-a-Judge scoring achieves an 81.3% correlation with human judgment. This is remarkable because it approaches the level of agreement between different humans evaluating the same text. By contrast, statistical metrics like BLEU lag significantly behind.

But how does it work? There are three main methodologies:

- Pointwise Evaluation: The judge assigns a numerical score to a single output based on rubrics like clarity, accuracy, and tone.

- Pairwise Evaluation: Two outputs are compared directly, and the judge picks the better one. This is often more reliable than absolute scoring.

- Pass/Fail Evaluation: A binary judgment determines if the output meets minimum safety or quality thresholds.
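The three modes differ mainly in what the judge is asked to return. Here is a rough sketch, assuming a hypothetical `Judge` callable that takes a prompt and returns the model's raw reply. The prompt wording and the `stub_judge` stand-in are illustrative inventions, not any vendor's API.

```python
from typing import Callable

# Hypothetical judge interface: prompt string in, raw model reply out.
Judge = Callable[[str], str]

def pointwise(judge: Judge, output: str, criterion: str) -> int:
    """Ask for an absolute 1-5 score on a single criterion."""
    prompt = (
        f"Rate the response from 1 to 5 for {criterion}. "
        f"Reply with a single digit.\n\nResponse:\n{output}"
    )
    return int(judge(prompt).strip())

def pairwise(judge: Judge, output_a: str, output_b: str, criterion: str) -> str:
    """Ask which of two responses is better; returns 'A' or 'B'."""
    prompt = (
        f"Which response is better for {criterion}? Reply with A or B.\n\n"
        f"Response A:\n{output_a}\n\nResponse B:\n{output_b}"
    )
    verdict = judge(prompt).strip().upper()
    if verdict not in {"A", "B"}:
        raise ValueError(f"Unexpected verdict: {verdict!r}")
    return verdict

def pass_fail(judge: Judge, output: str, requirement: str) -> bool:
    """Ask for a binary verdict against one requirement."""
    prompt = (
        f"Does the response meet this requirement: {requirement}? "
        f"Reply with PASS or FAIL.\n\nResponse:\n{output}"
    )
    return judge(prompt).strip().upper() == "PASS"

def stub_judge(prompt: str) -> str:
    """Offline stand-in for a real model call, so the sketch runs as written."""
    if "single digit" in prompt:
        return "4"
    if "Reply with A or B" in prompt:
        return "B"
    return "PASS"

print(pointwise(stub_judge, "Our Q3 results...", "clarity"))
print(pairwise(stub_judge, "Draft one", "Draft two", "tone"))
print(pass_fail(stub_judge, "Draft one", "contains no personal data"))
```

In practice you would swap `stub_judge` for a function that calls whichever model you use, and you would want stricter parsing of the reply than a bare `int()`.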

Research published on arXiv in 2026 revealed a critical insight: the choice of evaluator model matters less than the design of the evaluation criteria. Simply switching from LLaMA 3.1 to GPT-4o doesn’t guarantee better results if the prompt is vague. Comprehensive evaluation design, including clear rubrics and sampling-based scoring with mean aggregation, yields far more consistent and human-aligned results than greedy decoding approaches.
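Sampling-based scoring with mean aggregation is simple to express: instead of trusting one greedy reply, draw several scores for the same output and average them. The sketch below assumes a hypothetical `sample_score` callable that returns one sampled score per call; `make_fake_judge` only imitates sampling variance so the example runs offline.

```python
import itertools
import statistics

def mean_of_samples(sample_score, output, n_samples=5):
    """Score the same output n_samples times and return the mean score."""
    scores = [sample_score(output) for _ in range(n_samples)]
    return statistics.mean(scores)

def make_fake_judge():
    """Hypothetical noisy judge: cycles through fixed scores to imitate sampling."""
    fake_scores = itertools.cycle([4, 5, 4, 3, 4])

    def sample_score(output):
        return next(fake_scores)

    return sample_score

print(mean_of_samples(make_fake_judge(), "Some model output"))
```

A single draw from this fake judge could land anywhere from 3 to 5; the mean over five draws settles on the middle of that range.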

Trade-Offs: Speed, Cost, and Consistency

So, if LLM-as-a-Judge is so much better, why haven’t we abandoned BLEU entirely? The answer lies in practical constraints. Statistical metrics are instantaneous. You can run BLEU on thousands of samples in seconds on commodity hardware. LLM-as-a-Judge requires minutes to hours, depending on batch size, and incurs API costs ranging from $0.01 to $0.10 per evaluation.
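To see what that per-evaluation price means for a whole test suite, here is a back-of-the-envelope helper. The function name and the 10,000-sample suite size are assumptions chosen for illustration; the price bounds are the figures quoted above.

```python
def judge_cost_range(n_evaluations, low_per_eval=0.01, high_per_eval=0.10):
    """Return the (low, high) total cost in dollars for a batch of judge calls."""
    return n_evaluations * low_per_eval, n_evaluations * high_per_eval

low, high = judge_cost_range(10_000)
print(f"${low:,.2f} to ${high:,.2f}")
```

Multiply again by the number of samples per item if you use sampling-based scoring, since each sample is its own call.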

There’s also the issue of reproducibility. BLEU is deterministic: the same input always produces the same score. LLMs are non-deterministic. Running the same evaluation twice might yield slightly different scores due to temperature settings or model updates. To mitigate this, engineers score the same items several times and track the standard deviation across runs, which shows whether the evaluation system itself is stable.
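A consistency check of this kind can be a few lines. The sketch below assumes you have already collected one score per run for the same item; the `max_stdev` threshold of 0.5 is an arbitrary illustrative value, not a recommended setting.

```python
import statistics

def run_consistency(run_scores, max_stdev=0.5):
    """Return (sample standard deviation, is_stable) for repeated runs of one item."""
    spread = statistics.stdev(run_scores)  # needs at least two runs
    return spread, spread <= max_stdev

print(run_consistency([4, 4, 4]))  # identical runs: no spread
print(run_consistency([1, 5, 3]))  # wildly different runs: unstable
```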

Furthermore, LLM evaluators can inherit biases from their training data. If the judge model has a preference for certain styles or topics, it may unfairly penalize outputs that deviate from those norms. This requires careful calibration and diverse testing sets to ensure fairness.

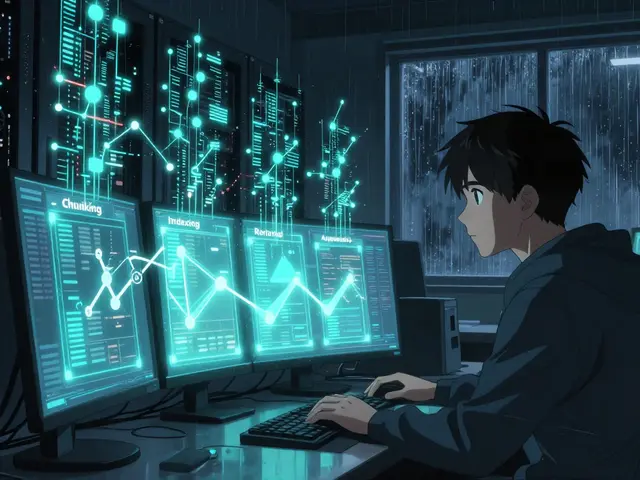

A Layered Approach: Best Practices for 2026

The expert consensus in 2026 is clear: there is no single universal metric. Instead, successful teams adopt a layered evaluation strategy. Galileo AI recommends using BLEU for fast lexical regression checks to catch obvious failures during development. Apply BERTScore when semantic similarity matters more than exact wording. And deploy LLM-as-a-Judge for nuanced criteria like factuality, safety, and user satisfaction.

Weights & Biases emphasizes that shipping decisions should rarely depend on BLEU alone. A robust workflow combines:

- Statistical Metrics: For quick feedback loops and smoke tests.

- Semantic Metrics: For deeper quality signals and meaning preservation.

- Human Review: To calibrate the automated systems and handle edge cases.

This hybrid approach balances speed and sophistication. For example, in Retrieval-Augmented Generation (RAG) systems, you might use retrieval precision metrics for the search component, BERTScore for context relevance, and LLM-as-a-Judge for grounding accuracy and hallucination detection.
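One way to wire the layers together is to run them cheapest-first and stop as soon as one fails, so the expensive judge only sees outputs that survive the quick checks. This is a sketch under that assumption; the tier names, scorers, and thresholds are placeholders you would replace with your own metrics.

```python
def run_tiers(output, tiers):
    """Run (name, scorer, threshold) tiers in order; stop at the first failure.

    Returns (passed, results), where results lists (name, score) for every tier run.
    """
    results = []
    for name, scorer, threshold in tiers:
        score = scorer(output)
        results.append((name, score))
        if score < threshold:
            return False, results
    return True, results

# Hypothetical scorers standing in for a lexical check and a judge call.
tiers = [
    ("non_empty", lambda text: 1.0 if text.strip() else 0.0, 1.0),
    ("judge", lambda text: 0.9, 0.8),
]

print(run_tiers("A grounded answer.", tiers))
print(run_tiers("   ", tiers))  # fails the cheap tier, judge never runs
```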

Future Trajectories: Specialization Over Generalization

Looking ahead, evaluation will become increasingly task-specific. Generic metrics won’t suffice for specialized domains. Code generation relies on execution-based metrics that validate functional correctness rather than surface-level syntax. Question-answering systems employ answerability assessments that go beyond reference-text similarity. Even creative writing tools will require new metrics that balance originality with coherence.
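For code generation, an execution-based check boils down to running the generated function against known input/output pairs. The sketch below is a bare-bones illustration with made-up names. It calls `exec` directly, which is unsafe for untrusted model output, so a real harness would run candidates in a sandbox with timeouts.

```python
def passes_tests(source, func_name, cases):
    """Execute generated source and check func_name against (args, expected) cases.

    WARNING: exec runs arbitrary code. This is only safe on trusted toy input.
    """
    namespace = {}
    try:
        exec(source, namespace)
        func = namespace[func_name]
        return all(func(*args) == expected for args, expected in cases)
    except Exception:
        # Syntax errors, missing functions, and runtime crashes all count as failures.
        return False

cases = [((1, 2), 3), ((0, 0), 0)]
good = "def add(a, b):\n    return a + b"
bad = "def add(a, b):\n    return a - b"

print(passes_tests(good, "add", cases))
print(passes_tests(bad, "add", cases))
```

Note that the two candidates differ by a single character, so any surface-level similarity metric would score them almost identically; only running them tells them apart.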

Vendors like Openlayer and Weights & Biases are already building platforms that automate this multi-tiered evaluation, integrating statistical, semantic, and model-based signals into unified dashboards. This reflects a maturing field that recognizes effective evaluation demands alignment with actual user priorities, not just historical convenience.

Is BLEU completely useless now?

No, BLEU is not useless. It remains valuable for fast, deterministic regression testing in machine translation pipelines where lexical overlap is a primary concern. However, it should not be used as the sole metric for evaluating modern LLMs, especially for open-ended tasks like summarization or creative writing.

How much does LLM-as-a-Judge cost?

Costs vary based on the model and provider. Using commercial APIs like GPT-4o, expect to pay between $0.01 and $0.10 per evaluation. Local deployment of open-source models like LLaMA 3.1 reduces ongoing costs but requires significant GPU infrastructure investment.

Which metric has the highest human correlation?

LLM-as-a-Judge currently leads with approximately 81.3% human correlation, according to 2026 data from Openlayer. This significantly outperforms traditional metrics like BLEU and ROUGE, which typically correlate below 70%.

What is BERTScore?

BERTScore is a semantic evaluation metric that uses contextual embeddings from BERT models to measure similarity between generated text and references. It recognizes synonyms and paraphrases, offering a middle ground between lexical matching and full LLM evaluation.

Should I use Pairwise or Pointwise evaluation?

Pairwise evaluation is generally more reliable because it asks the model to choose between two options, reducing the ambiguity of absolute scoring. Pointwise evaluation is useful when you need granular scores for specific criteria like tone or length, but it requires carefully designed rubrics.

Comments

Sarah McWhirter

They want you to believe that the "judge" models are objective, but have you ever stopped to think about who programmed the judges?

It’s a closed loop of corporate bias disguised as mathematical truth. The Big Tech overlords are training these AI systems to police our thoughts before we even articulate them. When an LLM evaluates your writing, it’s not just checking for grammar; it’s checking for ideological compliance with the ruling class.

I tried to submit a counter-narrative to a local news bot last week and it got flagged as "low quality" because it didn’t align with their pre-approved semantic vectors. It’s happening faster than most people realize. Wake up.

May 10, 2026 AT 12:10

kelvin kind

Fair points on the cost. We still use BLEU for quick sanity checks in our pipeline because spinning up an API call for every draft is too slow for our CI/CD workflow. Good article though.

May 11, 2026 AT 16:06

Ananya Sharma

The author presents this shift as an inevitable technological evolution, yet fails to acknowledge the profound ethical decay inherent in outsourcing human judgment to opaque algorithms that are fundamentally unaccountable and designed solely to maximize engagement metrics rather than truth or nuance, thereby creating a society where language is no longer a tool for genuine connection but merely a series of optimized tokens calculated to manipulate user behavior through subtle psychological conditioning techniques that most people are entirely unaware of until they find themselves trapped in echo chambers of their own making which reinforces existing biases and prevents any meaningful intellectual growth or critical thinking from occurring within the broader discourse community.

May 12, 2026 AT 11:42