Architecture Decisions That Reduce LLM Bills Without Sacrificing Quality

- Mark Chomiczewski

- 8 February 2026

- 9 Comments

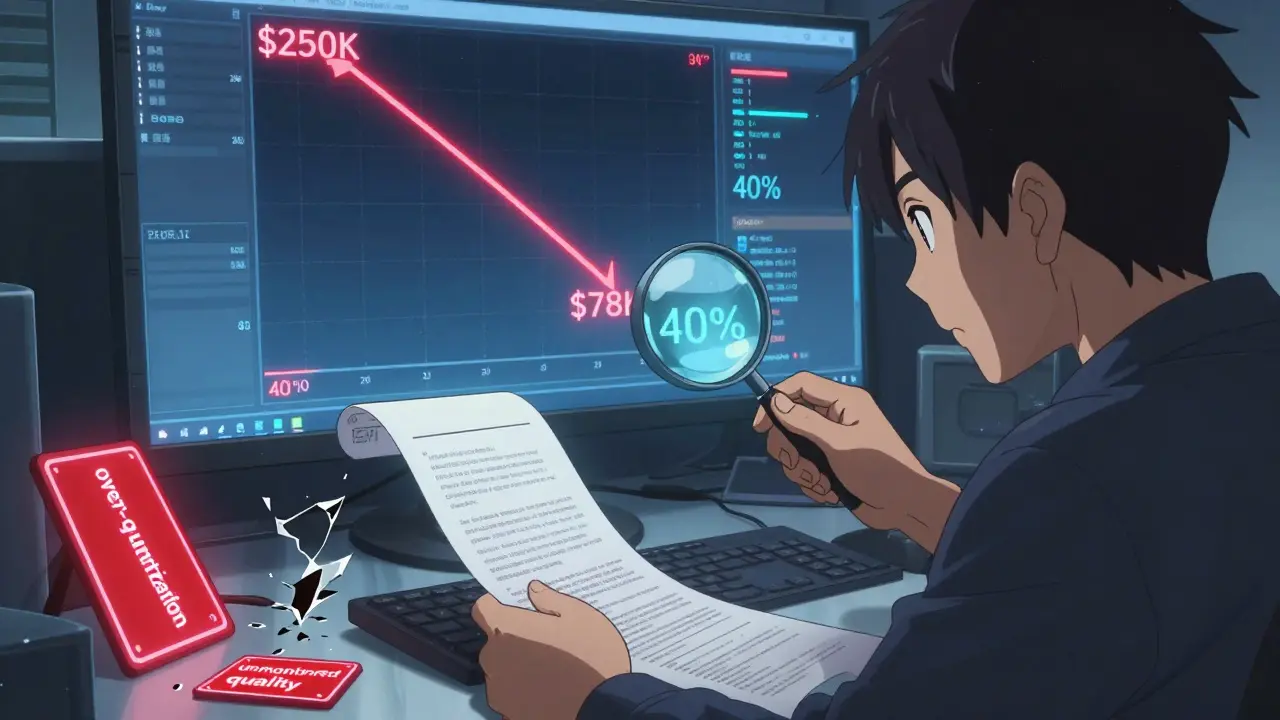

Running large language models (LLMs) at scale can quickly turn into a financial drain. Some companies are seeing monthly bills over $250,000 just to keep their chatbots, summarizers, and assistants running. But here’s the truth: you don’t need to cut features or accept worse answers to bring those costs down. The real savings come from architecture: how you design the system, not just which model you pick.

Choose the Right Model, Not the Biggest One

Most teams default to the most powerful model available: GPT-4, Claude 3 Opus, or Gemini 1.5 Pro. It feels safe. But 78% of customer service queries, according to FutureAGI’s 2025 data, are handled just as well by GPT-3.5-turbo. And it costs 70% less per token. The mistake? Not testing. Start by defining what "quality" means for your use case. Is it answering FAQs correctly? Then measure F1-score or exact match rate. Is it summarizing documents? Use ROUGE-L. Run side-by-side tests. You’ll likely find that a 7B or 13B parameter model does 95% of what a 175B model does. Right-sizing isn’t about cutting corners; it’s about matching the tool to the job.

Route Queries Like a Traffic Cop

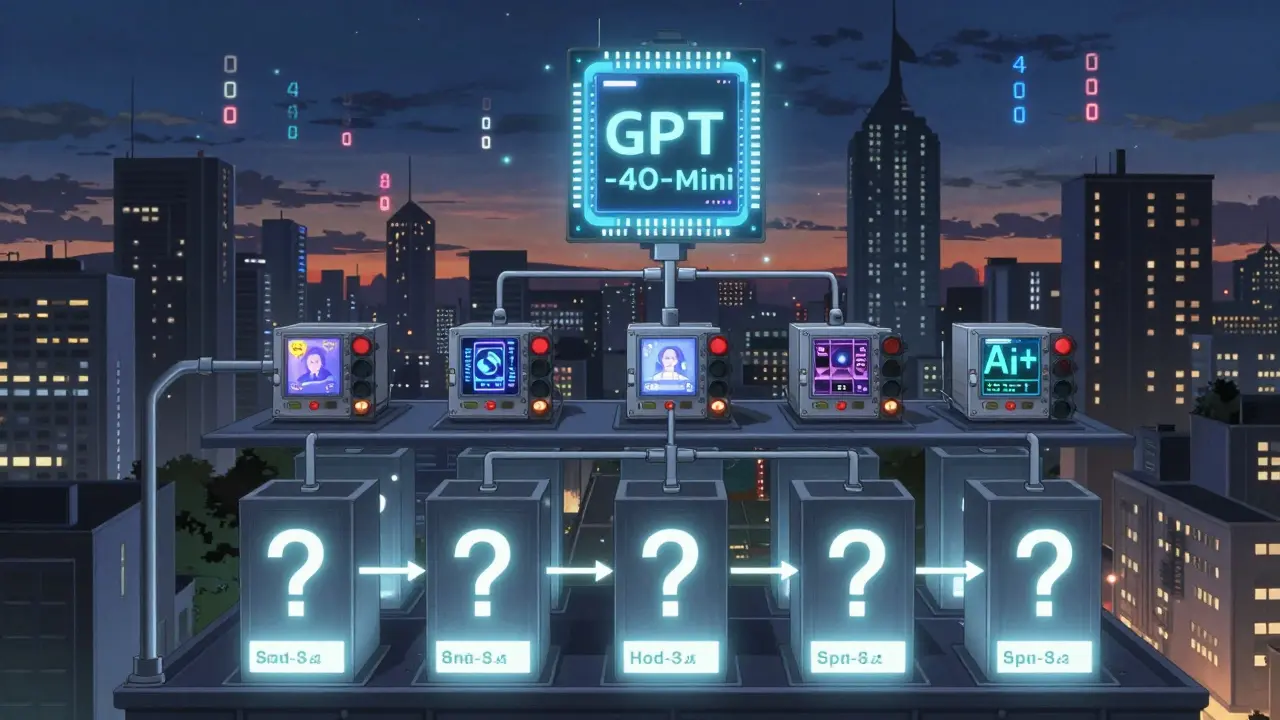

Not every question needs a brain the size of a supercomputer. Model routing splits incoming requests into tiers based on complexity. A lightweight classifier (think a 125M-parameter model trained on 10,000 labeled examples) looks at the input and decides: simple, medium, or complex.

- Simple: "What are your hours?", "Reset my password" → Send to Claude Haiku ($0.00015/1K tokens)

- Medium: "Compare these two products" → Send to GPT-4o-mini ($0.00075/1K tokens)

- Complex: "Explain the tax implications of this investment" → Send to GPT-4 ($0.03/1K tokens)

Maxim AI’s 2025 benchmarks show this cuts costs by 37-46% across mixed workloads. The classifier itself is cheap to run. The savings? Massive. A Shopify team reported a 62% drop in LLM costs after implementing this, with zero user complaints.

Trim the Fat in Your Prompts

Your prompts are bloated. You’re pasting in entire product catalogs, full chat histories, and redundant instructions. Every extra word is a token, and tokens cost money. DeepChecks found that removing duplicate context and forcing concise outputs (like "answer in two sentences") cuts token usage by 40%. Here’s how:

- Use max_tokens to cap response length

- Replace "You are a helpful assistant..." with "Answer briefly."

- Use retrieval-augmented generation (RAG) to fetch only relevant context, not the whole database

- For chain-of-thought, use low-verbosity versions: "Think step by step" → "Step 1: ..."
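To make the list above concrete, here is a minimal sketch of a request builder that applies three of those ideas: a short instruction, deduplicated context, and a cap on response length. The function names, the two-turn history window, and the 120-token cap are illustrative assumptions, and the returned dict only mirrors the common chat-style payload shape rather than any specific SDK.

```python
def dedupe_context(chunks):
    """Drop repeated context chunks while preserving their original order."""
    seen = set()
    unique = []
    for chunk in chunks:
        key = " ".join(chunk.lower().split())  # normalize case and whitespace
        if key not in seen:
            seen.add(key)
            unique.append(chunk)
    return unique


def build_request(question, context_chunks, history, max_history_turns=2, max_tokens=120):
    """Assemble a compact chat request (hypothetical payload shape).

    Keeps a short system instruction, deduplicated context, only the most
    recent history messages, and a hard cap on response length.
    """
    context = "\n".join(dedupe_context(context_chunks))
    # Guard against history[-0:], which would return the whole list.
    recent = history[-max_history_turns:] if max_history_turns > 0 else []
    messages = [{"role": "system", "content": "Answer briefly, in at most two sentences."}]
    messages.extend(recent)
    messages.append({"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"})
    return {"messages": messages, "max_tokens": max_tokens}
```

Measure token counts before and after a change like this on your own traffic; the right history window and cap depend on the use case.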

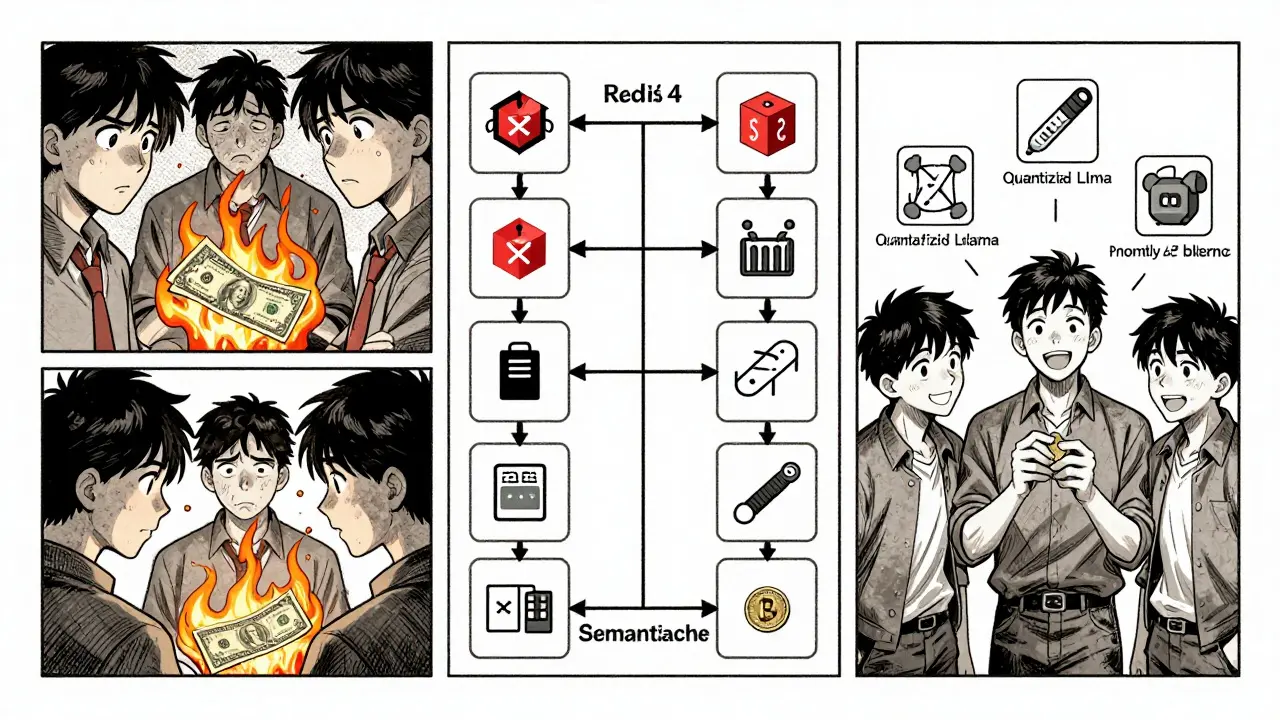

Cache What You’ve Seen Before

If 40% of your queries are repeats ("How do I return an item?", "What’s my order status?"), you’re paying to answer the same questions over and over. Semantic caching solves this. Instead of storing exact text, store the embedding of the question. When a new question comes in, compare its embedding to stored ones. If the similarity score is 90% or higher, return the cached answer. Redis’s 2026 LLMOps Guide shows this cuts costs by 40-60% for repetitive tasks. One enterprise customer reduced their monthly bill from $82,000 to $31,000 using Redis for semantic caching. Token caching (like Leanware’s system) stores exact input-output pairs. It’s useful for static workflows. Semantic caching works better for paraphrased questions. Combine both if your workload has both repetition and variation.
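The lookup logic is small enough to sketch. The version below is an assumption-heavy illustration: `toy_embed` is a word-count stand-in for a real embedding model (so it only catches near-identical wording, not true paraphrases), the 0.90 threshold comes from the figure above, and the linear scan would be replaced by a vector index in production.

```python
import math
import re
from collections import Counter


def toy_embed(text):
    """Stand-in embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Return a stored answer when a new question is similar enough to an old one."""

    def __init__(self, embed=toy_embed, threshold=0.90):
        self.embed = embed
        self.threshold = threshold
        self.entries = []  # list of (embedding, answer) pairs

    def get(self, question):
        query = self.embed(question)
        best_score, best_answer = 0.0, None
        for vec, answer in self.entries:  # linear scan; fine for a sketch only
            score = cosine(query, vec)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def put(self, question, answer):
        self.entries.append((self.embed(question), answer))
```

Usage follows the usual cache pattern: call `get` first, and only on a miss call the model and then `put` the result.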

Quantize, But Don’t Overdo It

Quantization reduces model weights from 32-bit floating point to 8-bit or even 4-bit. This slashes memory use by 75-90% and speeds up inference 2-4x. The GGUF format and tools like llama.cpp make this easy. But here’s the catch: it can hurt accuracy. DeepChecks tested quantized Llama-2 on medical QA. Accuracy dropped 3-5%. For customer support? Fine. For diagnosing symptoms? Risky.

Use quantization for:

- Edge devices (phones, tablets)

- Non-critical tasks (content tagging, sentiment analysis)

- High-volume, low-stakes queries

Avoid it for:

- Legal, medical, or financial reasoning

- Tasks requiring nuanced understanding
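The memory figures follow from simple arithmetic: weight memory is roughly parameter count times bits per weight. The sketch below works that out for an illustrative 7B-parameter model (the model size is an assumption chosen for the example). Real quantized files also store scaling factors and metadata, so actual sizes run somewhat higher.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def reduction_vs_fp32(bits_per_weight):
    """Fractional memory saving relative to 32-bit weights."""
    return 1 - bits_per_weight / 32


if __name__ == "__main__":
    params = 7_000_000_000  # illustrative 7B-parameter model
    for bits in (32, 8, 4):
        print(
            f"{bits:>2}-bit: {weight_memory_gb(params, bits):.1f} GB, "
            f"saving {reduction_vs_fp32(bits):.1%} vs 32-bit"
        )
```

Going from 32-bit to 8-bit saves 75% of weight memory and 4-bit saves 87.5%, which is where the 75-90% range comes from.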

Optimize Where You Run It

Cloud costs aren’t the same everywhere. AWS us-east-1 (N. Virginia) is 20% cheaper than eu-west-1 (Ireland). Azure East US is similarly lower than West Europe. If your users are mostly in North America, run your models there. Also, use reserved instances for predictable workloads. If you’re processing 10,000 queries daily, buy a reserved GPU. Saves 30-50%. For variable loads, use auto-scaling with Kubernetes or Ray. Don’t pay for idle GPUs at 2 a.m.

Build the Layered Stack

The most successful teams don’t pick one trick. They stack them. Redis’s 2026 architecture recommends this order:

- Start with semantic caching: catch repeats before they hit the model

- Apply prompt optimization: reduce what gets sent

- Route to the right model: don’t overpay for simple tasks

- Use quantized models where safe

- Cache final responses for fast repeats
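The ordering above can be expressed as a thin wrapper around whatever components you already have. This is a sketch under stated assumptions: `cache` is any dict-like object (a plain dict stands in for a semantic cache), and `trim_prompt`, `pick_tier`, and `models` are hypothetical callables and mappings supplied by the caller.

```python
def make_pipeline(cache, trim_prompt, pick_tier, models):
    """Compose the layers in the order listed above.

    All four arguments are hypothetical stand-ins: a dict-like cache, a
    prompt-trimming function, a tier classifier, and a tier -> model mapping.
    """
    def answer(query):
        cached = cache.get(query)        # 1. catch repeats before any model call
        if cached is not None:
            return cached, "cache"
        prompt = trim_prompt(query)      # 2. shrink what gets sent
        tier = pick_tier(prompt)         # 3. choose the cheapest adequate tier
        reply = models[tier](prompt)     # 4. a tier may map to a quantized model
        cache[query] = reply             # 5. store the final response for repeats
        return reply, tier
    return answer
```

Returning the tier (or "cache") alongside the reply makes it easy to log where each answer came from, which helps when attributing savings to each layer.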

What Not to Do

Avoid these traps:

- Aggressively truncating context to save tokens: this can drop accuracy by 15-20% on complex tasks

- Ignoring logging: 63% of failed optimizations had no data on what queries were being sent

- Not monitoring quality: 22% of quality drops went unnoticed until users complained

- Forgetting fallbacks: 37% of outages happened because the system didn’t know what to do when routing failed
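Two of these traps, missing logs and missing fallbacks, can be addressed in the router itself. The sketch below assumes a hypothetical `classify` function that returns a tier label and a `handlers` mapping from tier to a callable; when the classifier raises or returns an unknown label, the query goes to the most capable tier instead of failing.

```python
import logging

logger = logging.getLogger("llm_router")


def route_with_fallback(query, classify, handlers, fallback_tier="complex"):
    """Route a query to a tier, logging the decision.

    If classification raises or returns a label with no handler, fall back
    to `fallback_tier` (assumed to be the most capable model).
    """
    try:
        tier = classify(query)
    except Exception:
        logger.exception("classifier failed; using fallback tier")
        tier = fallback_tier
    if tier not in handlers:
        logger.warning("unknown tier %r; using fallback tier", tier)
        tier = fallback_tier
    logger.info("tier=%s chars=%d", tier, len(query))
    return tier, handlers[tier](query)
```

Falling back to the strongest tier trades cost for safety. A cheaper default may be acceptable for low-stakes workloads, but that choice should be deliberate and visible in the logs.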

Real-World Results

- A fintech startup cut LLM costs by 52% by switching from real-time processing to batch processing weekly reports. Same output. Zero user impact.

- A SaaS company with 2 million monthly users reduced their bill from $190,000 to $78,000 using model routing + semantic caching. They kept 97% of their NPS score.

- An e-commerce platform saved $1.2M in 9 months by replacing GPT-4 with GPT-4o-mini for 80% of their product descriptions.

These aren’t edge cases. They’re standard outcomes for teams that treat LLM cost as an engineering problem, not a budget line item.

Where This Is Headed

By 2027, Gartner predicts 85% of production LLM deployments will use at least three of these techniques. Tools like Leanware’s "LLM Cost Explorer" (Jan 2026) now show real-time cost-quality tradeoffs. Redis updated its semantic caching in Feb 2026 to adapt similarity thresholds automatically. Maxim AI is building automated routing calibration for Q3 2026. The future isn’t about cheaper models. It’s about smarter systems. The companies that win won’t be the ones with the biggest AI budgets. They’ll be the ones who designed their architecture to waste nothing.

Can I just use a smaller model and call it a day?

Not always. Smaller models are cheaper, but they can’t handle complex reasoning as well. The key is matching the model to the task. Use GPT-3.5-turbo for FAQs, but keep GPT-4 for financial analysis or legal summaries. Test each use case. You might find that 70% of your queries only need a lightweight model, but the remaining 30% still need power. That’s why routing matters.
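A quick calculation shows why the 70/30 split matters. The sketch below uses the per-1K-token prices quoted in the routing section for a lightweight tier ($0.00015) and GPT-4 ($0.03). It assumes every query uses the same number of tokens and ignores the classifier’s own cost, so treat the result as a rough upper bound on savings.

```python
def blended_cost_per_1k(mix):
    """Weighted price per 1K tokens.

    mix: list of (share_of_traffic, price_per_1k_tokens); shares must sum to 1.
    """
    total_share = sum(share for share, _ in mix)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * price for share, price in mix)


LIGHT_PRICE = 0.00015  # lightweight tier, price quoted earlier in the article
HEAVY_PRICE = 0.03     # GPT-4, price quoted earlier in the article

routed = blended_cost_per_1k([(0.7, LIGHT_PRICE), (0.3, HEAVY_PRICE)])
all_heavy = blended_cost_per_1k([(1.0, HEAVY_PRICE)])
saving = 1 - routed / all_heavy

if __name__ == "__main__":
    print(f"routed: ${routed:.6f} per 1K tokens, all-heavy: ${all_heavy:.6f}")
    print(f"saving: {saving:.0%}")
```

Under these assumptions the blended price is about $0.0091 per 1K tokens, roughly 70% below sending everything to the large model. Nearly all of the remaining cost comes from the 30% of traffic that still needs it.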

Is semantic caching worth the setup time?

Yes, if you have repetitive queries. Customer support, help desks, and FAQ bots see the same questions over and over. If 30% or more of your inputs are repeats, semantic caching will pay for itself in days. For creative tasks like content generation, where every prompt is unique, it won’t help. Track your query patterns first. If 40% of your users ask "How do I reset my password?", caching that answer saves real money.
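Tracking query patterns can start with a few lines run over an existing log. This sketch counts how many queries repeat an earlier one after normalizing case and punctuation. The function names are illustrative. Because it only catches near-exact repeats, the result is a lower bound on what a semantic cache could serve.

```python
import re
from collections import Counter


def normalize(query):
    """Lowercase and strip punctuation so trivial variations count as the same query."""
    return " ".join(re.findall(r"[a-z0-9]+", query.lower()))


def repeat_rate(queries):
    """Share of queries whose normalized text already appeared earlier in the log."""
    if not queries:
        return 0.0
    counts = Counter(normalize(q) for q in queries)
    repeats = sum(n - 1 for n in counts.values())  # every occurrence after the first
    return repeats / len(queries)
```

If this number is already near the 30% mark on a week of traffic, caching is likely worth prototyping. If it is close to zero, check paraphrase-level similarity before ruling it out.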

How long does it take to implement these changes?

It depends. Prompt optimization? A weekend. Model routing? 2-3 weeks for a solid classifier and testing. Full layered architecture (caching + routing + quantization)? 4-6 weeks. But the savings start immediately. Even just trimming your prompts can cut costs by 20% in 48 hours. Start with the low-hanging fruit. You don’t need to overhaul everything at once.

What if my team doesn’t have MLOps expertise?

You don’t need a full MLOps team. Start with prompt engineering and model selection. Those require only your AI or product team. Use tools like Redis or AWS SageMaker, which have built routing and caching into their platforms. You can enable model routing in SageMaker with a few clicks. Focus on what you can do now. Build expertise as you go.

Do these techniques work with open-source models like Llama or Mistral?

Absolutely. In fact, they’re even more important. Open-source models often run on your own infrastructure, so every extra token or idle GPU costs you directly. Quantization, caching, and routing work the same way. Llama-3-8B quantized to 4-bit with semantic caching and model routing can outperform GPT-4 on cost-per-query while matching its accuracy for many tasks.

Are there any hidden costs I should watch for?

Yes. Logging, monitoring, and data storage. If you’re not tracking what queries you’re sending, you can’t optimize. If you’re storing every input-output pair for auditing, that adds storage costs. Also, model routing adds latency, usually under 50ms, but it’s still extra. Test end-to-end. And never cut corners on quality monitoring. A 2% accuracy drop in a financial assistant can cost you far more than the saved tokens.
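Quality monitoring can begin as a small regression check against a golden set of reference answers, using the exact-match and F1 metrics mentioned earlier. The sketch below is one simple way to do that. The whitespace tokenizer and the 0.02 tolerance (echoing the 2% figure above) are illustrative assumptions, not a recommended standard.

```python
from collections import Counter


def tokens(text):
    """Very simple tokenizer: lowercase and split on whitespace."""
    return text.lower().split()


def exact_match(pred, ref):
    """1.0 if the token sequences are identical, else 0.0."""
    return float(tokens(pred) == tokens(ref))


def token_f1(pred, ref):
    """Harmonic mean of token-level precision and recall."""
    p, r = tokens(pred), tokens(ref)
    overlap = sum((Counter(p) & Counter(r)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(p)
    recall = overlap / len(r)
    return 2 * precision * recall / (precision + recall)


def within_tolerance(golden, baseline_answers, candidate_answers, max_drop=0.02):
    """Compare mean F1 of a cheaper candidate against the current baseline.

    Returns (baseline_f1, candidate_f1, ok), where ok is False when the
    candidate falls more than `max_drop` below the baseline.
    """
    n = len(golden)
    base = sum(token_f1(a, g) for a, g in zip(baseline_answers, golden)) / n
    cand = sum(token_f1(a, g) for a, g in zip(candidate_answers, golden)) / n
    return base, cand, (base - cand) <= max_drop
```

Running a check like this before and after each optimization gives you the data to notice a quality drop before users do.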

Comments

Barbara & Greg

It is deeply concerning how readily organizations abandon rigorous quality assurance in favor of cost-cutting measures masquerading as "architecture." The notion that reducing token usage or deploying quantized models is a neutral engineering decision ignores the ethical implications of degraded output in customer-facing systems. We are not optimizing systems-we are diluting human dignity in the name of efficiency.

There is a moral imperative to preserve cognitive integrity in AI interactions. When a financial assistant misinterprets tax implications due to prompt truncation, or a medical chatbot misdiagnoses because of 3-bit quantization, the consequences are not abstract-they are life-altering. The article treats accuracy as a variable to be traded, when it should be sacrosanct.

Let us not confuse economy with wisdom. The companies that succeed are not those that spend the least, but those that honor the responsibility of their technology. This is not engineering. It is ethical negligence dressed in Kubernetes.

February 9, 2026 AT 10:31

selma souza

There is a grammatical error in the second paragraph: "It feels safe. But 78% of customer service queries, according to FutureAGI’s 2025 data, are handled just as well by GPT-3.5-turbo. And it costs 70% less per token. The mistake? Not testing." The sentence "The mistake? Not testing." is a sentence fragment. It lacks a subject and a predicate. It should read: "The mistake is not testing." or "The mistake is that teams are not testing." Also, "GPT-3.5-turbo" should be consistently hyphenated. In one instance it appears as "GPT-3.5 turbo"-a typographical inconsistency that undermines professional credibility.

Furthermore, "ROUGE-L" should be preceded by "the" in formal technical writing. These are not minor issues. They erode trust in the entire argument.

February 10, 2026 AT 21:56

Michael Thomas

USA runs the best LLM infrastructure. Every other country is playing catch-up. If you're wasting money on cloud regions outside East Coast US, you're already losing. Stop overcomplicating this. Use GPT-3.5 for 80% of stuff. Cache the rest. Quantize the rest. Run on AWS. Done.

Europe? Too expensive. Africa? Don't even try. Build smart. Or get out.

February 12, 2026 AT 10:14

Thabo mangena

While the technical insights presented are indeed compelling, I must extend my appreciation for the thoughtful consideration of global equity in AI deployment. In South Africa, where infrastructure limitations are ever-present, the emphasis on lightweight models and semantic caching offers not merely cost savings but also accessibility.

For many of our small businesses, the ability to deploy a quantized Llama-3-8B model on a single Raspberry Pi 5, coupled with Redis-based caching, represents a transformative leap from exclusion to participation in the digital economy.

It is heartening to see that optimization is not synonymous with centralization. The layered architecture described here is, in essence, a democratizing force-one that empowers communities with limited capital to engage meaningfully with artificial intelligence.

I urge developers everywhere to consider not just efficiency, but inclusion. The true metric of success is not how little you spend, but how many you enable.

February 13, 2026 AT 19:18

Karl Fisher

Okay but like… have you SEEN the state of GPT-4o-mini’s responses? I ran a test last week where it called my dog "a sentient toaster with anxiety." I mean, is this really the future we’re betting on? Like, I get it-save money-but I also want my AI to not hallucinate that my cat is running a Ponzi scheme.

And don’t even get me started on semantic caching. What if someone rephrases "How do I reset my password?" as "I can’t log in pls help"? Does the system just say "I don’t know" and ghost them? I’ve seen this. It’s tragic. And also kinda funny? But not in a good way.

Also, who named this article? "Architecture Decisions That Reduce LLM Bills Without Sacrificing Quality"? Bro. You sacrificed quality. You just made it cheaper. That’s not magic. That’s Walmart.

February 14, 2026 AT 16:21

Buddy Faith

they said the same thing about cloud servers then they said the same thing about mobile apps then they said the same thing about css then they said the same thing about javascript now they say it about llms its always the same story they promise you efficiency then they take your soul

February 16, 2026 AT 02:16

Scott Perlman

Start simple. Trim your prompts. Cache the repeats. Use a smaller model for simple stuff. That’s it. No need to overthink. If it works, keep it. If it breaks, fix it. No magic. Just smart steps.

February 17, 2026 AT 17:34

Sandi Johnson

Oh wow. So we’re just gonna let the algorithm decide who gets GPT-4 and who gets Claude Haiku? Like, what happens when the classifier mislabels a life-or-death medical question as "simple"? Oh wait-we already know. It happened. And then we got a memo saying "we’re optimizing for cost-per-query." This isn’t architecture. It’s a corporate death spiral with a PowerPoint.

February 19, 2026 AT 04:26

Eva Monhaut

I love how this piece doesn’t just talk about cost-it talks about intention. Too often, we treat LLMs like magic boxes we throw money at until they stop breaking. But this? This is craftsmanship.

Model routing isn’t just a cost hack-it’s a philosophy. It says: not every question deserves a symphony. Sometimes, a lullaby is enough. And sometimes, a lullaby is all the patient needs to sleep peacefully.

The real win here isn’t the 62% cost drop. It’s the quiet dignity restored to users who no longer get robotic, over-explained nonsense. When you trim the fat from a prompt, you’re not just saving tokens-you’re honoring the user’s time.

I’ve seen teams implement this and cry-not from sadness, but from relief. They finally feel like engineers again, not accountants with a GPU.

Let’s not forget: the best systems don’t just reduce cost. They restore humanity.

February 20, 2026 AT 15:08