Legal Document Analysis with LLMs: Summaries, Clauses, and Risk Signals

- Mark Chomiczewski

- 23 April 2026

Reading a 60-page commercial lease or a dense Master Service Agreement (MSA) usually means hours of grueling manual review, hunting for that one nasty indemnity clause hidden in the fine print. For most legal teams, the bottleneck isn't the law itself, but the sheer volume of text. Legal Document Analysis powered by Large Language Models (LLMs) is changing this by shifting the lawyer's role from "searcher" to "reviewer." Instead of spending four hours finding a risk, you spend fifteen minutes validating one that the AI already flagged.

The Quick Win: What LLMs Actually Do for Legal Text

If you're looking for the bottom line, LLMs handle the heavy lifting of initial triage. They don't just "summarize" text; they perform semantic analysis to understand obligations and liabilities. Here is how they practically change the workflow:

- Instant Summaries: Turning a mountain of legalese into a few bullet points of key deliverables and deadlines.

- Clause Extraction: Automatically pulling every "Termination for Convenience" or "Force Majeure" clause into a single table.

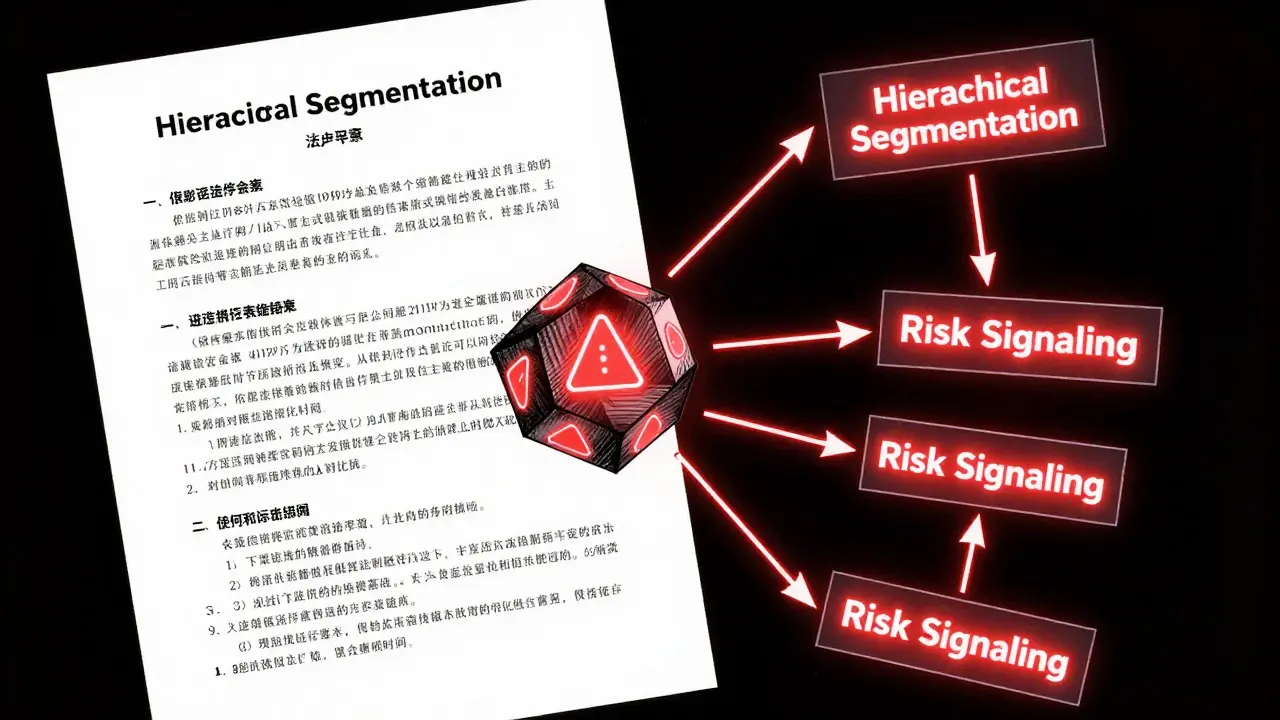

- Risk Signaling: Flagging "red flag" language, such as uncapped liability, that deviates from a company's standard playbook.

Solving the "Long Document" Problem

One of the biggest hurdles in using AI for law is the context window, the maximum number of tokens a model can take in at once. Many LLMs can't "read" a massive contract in one go, and even those that can tend to lose track of early provisions by the time they hit the end. To work around this, modern systems use a method called Hierarchical Segmentation. Instead of shoving the whole PDF into the prompt, the system breaks the document into logical chunks (sections, then paragraphs) and processes them in stages.
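The two-level split described above can be sketched in a few lines. This is a minimal illustration, not a production parser: the `Section` class, the `segment` function, and the heading pattern (lines such as "1. Term") are all assumptions made for this example, and real contracts will need a more forgiving heading detector.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Section:
    heading: str
    paragraphs: list[str] = field(default_factory=list)


# Assumed heading style: a number, a dot, then a title, e.g. "1. Term".
# A numbered paragraph written the same way would also be treated as a heading.
HEADING_RE = re.compile(r"^\d+\.\s+\S.*$")


def segment(text: str) -> list[Section]:
    """Split a contract into sections, then into paragraphs within each section."""
    sections = [Section("Preamble")]
    buffer: list[str] = []
    # The extra empty line at the end flushes the final paragraph.
    for raw in text.splitlines() + [""]:
        line = raw.strip()
        is_heading = bool(HEADING_RE.match(line))
        if (is_heading or not line) and buffer:
            sections[-1].paragraphs.append(" ".join(buffer))
            buffer = []
        if is_heading:
            sections.append(Section(line))
        elif line:
            buffer.append(line)
    # Drop the placeholder preamble if nothing preceded the first heading.
    return [s for s in sections if s.paragraphs or s.heading != "Preamble"]
```

Each `Section` can then be sent to the model on its own, with the heading kept alongside the paragraphs so later stages know where a finding came from.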

This is often paired with Chain-of-Thought Prompting. Rather than asking the AI "Is this contract risky?", the system asks it to first list the obligations, then analyze the limitations of liability, and finally conclude if the risk is acceptable. This structured reasoning makes the AI less likely to jump to a wrong conclusion and helps preserve context across different sections of the document.
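One way to realize that step-by-step structure is a staged prompt sequence, sometimes called prompt chaining, in which each answer is fed into the next prompt. The sketch below assumes a caller-supplied `ask` function that sends a prompt to whatever model you use and returns its text. The step wording, `STEPS`, and `staged_review` are illustrative names, not part of any library.

```python
from typing import Callable

# Illustrative steps mirroring the order described above.
STEPS = [
    "List every obligation each party takes on in the contract text.",
    "Using the findings so far, describe any limitation of liability.",
    "Given the findings so far, state whether the risk is acceptable and why.",
]


def staged_review(contract_text: str, ask: Callable[[str], str]) -> list[str]:
    """Run the steps in order, passing earlier answers into later prompts."""
    answers: list[str] = []
    for step in STEPS:
        findings = "\n".join(
            f"Step {i + 1} finding: {a}" for i, a in enumerate(answers)
        )
        prompt = (
            f"{step}\n\n"
            f"Findings so far:\n{findings or '(none)'}\n\n"
            f"Contract text:\n{contract_text}"
        )
        answers.append(ask(prompt))
    return answers
```

Because `ask` is just a callable, the same function works with a hosted API, a local model, or a stub in tests. It also leaves a record of the intermediate findings that a reviewer can inspect.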

Mining Clauses and Detecting Risk Signals

Extracting a clause is easy; understanding if it's a problem is the hard part. This is where Clause Libraries come into play. A sophisticated legal AI doesn't just guess; it compares the contract text against a library of "gold standard" clauses. If your company's standard is a 30-day notice for termination, but the contract says 90 days, the AI flags this as a deviation.
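The notice-period comparison can be sketched as follows. Everything here is a simplified assumption: the `CLAUSE_LIBRARY` contents, the `compare_to_standard` function, and the use of the standard library's `difflib.SequenceMatcher` as a rough wording-similarity score. A real system would more likely compare meaning with a model or embeddings rather than characters.

```python
import re
from difflib import SequenceMatcher

# Hypothetical playbook: clause type -> preferred wording and notice period.
CLAUSE_LIBRARY = {
    "termination": {
        "text": "Either party may terminate this Agreement on 30 days written notice.",
        "notice_days": 30,
    },
}

# Matches "30 days", "90-day", "1 day".
DAYS_RE = re.compile(r"(\d+)[\s-]*days?", re.IGNORECASE)


def compare_to_standard(clause_type: str, contract_clause: str) -> dict:
    """Compare a contract clause with the playbook entry for its type."""
    standard = CLAUSE_LIBRARY[clause_type]
    match = DAYS_RE.search(contract_clause)
    found_days = int(match.group(1)) if match else None
    similarity = SequenceMatcher(
        None, standard["text"].lower(), contract_clause.lower()
    ).ratio()
    return {
        "found_days": found_days,
        "expected_days": standard["notice_days"],
        # A missing notice period is also treated as a deviation.
        "deviates": found_days != standard["notice_days"],
        "similarity": round(similarity, 2),
    }
```

For a clause that reads "on 90 days written notice", `deviates` is `True` even though the wording is almost identical to the standard. This is why the numeric check is kept separate from the text similarity.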

Risk signals are specifically tuned to identify "dealbreakers." For example, an LLM can be instructed to look for uncapped liability. In a standard B2B contract, liability is usually capped at the total fees paid. If the AI finds a phrase like "the Provider shall be liable for all damages without limitation," it triggers a high-severity risk signal. This allows a junior associate or a procurement manager to spot a catastrophic financial risk in seconds.
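The uncapped-liability example can also be backed by a simple rule-based check that runs alongside the LLM, either as a pre-filter or as a cross-check on what the model reports. The sketch below is not an LLM call. The patterns, the `RiskSignal` class, and `find_uncapped_liability` are illustrative assumptions, and a real playbook would be longer and reviewed by counsel.

```python
import re
from dataclasses import dataclass


@dataclass
class RiskSignal:
    severity: str
    label: str
    excerpt: str


# Illustrative phrases only; not an exhaustive list.
UNCAPPED_PATTERNS = [
    re.compile(r"liable\s+for\s+all\s+damages\s+without\s+limitation", re.I),
    re.compile(r"unlimited\s+liability", re.I),
    re.compile(r"liability\s+shall\s+not\s+be\s+(?:limited|capped)", re.I),
]


def find_uncapped_liability(text: str) -> list[RiskSignal]:
    """Return a high-severity signal for each sentence matching a pattern."""
    signals = []
    # Naive sentence split on "." or ";" followed by whitespace.
    for sentence in re.split(r"(?<=[.;])\s+", text):
        if any(p.search(sentence) for p in UNCAPPED_PATTERNS):
            signals.append(
                RiskSignal("high", "uncapped_liability", sentence.strip())
            )
    return signals
```

Keeping the matched sentence in `excerpt` lets the reviewer see exactly which wording triggered the flag.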

| Metric | Proprietary Models (e.g., GPT-4) | Open-Source Models (e.g., Llama) | Impact on Legal Work |
|---|---|---|---|
| Correctness (F1 Score) | High | Moderate to High | Reduces the rate of "hallucinated" clauses. |
| Output Accuracy | Very High | Moderate | Ensures extracted text matches the original wording. |
| "Laziness" Rate | Low | Higher | Prevents the AI from saying "no clause found" when one actually exists. |
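To make two of these metrics concrete, here is a small sketch of how they might be computed on a labeled test set. The F1 formula is the standard harmonic mean of precision and recall. The "laziness" definition is an assumption based on the description in the table: the share of documents that do contain clauses where the model returned nothing.

```python
def f1_score(predicted: set[str], gold: set[str]) -> float:
    """Harmonic mean of precision and recall over extracted clause labels."""
    true_pos = len(predicted & gold)
    if true_pos == 0:
        return 0.0
    precision = true_pos / len(predicted)
    recall = true_pos / len(gold)
    return 2 * precision * recall / (precision + recall)


def laziness_rate(results: list[tuple[set[str], set[str]]]) -> float:
    """Share of documents with gold clauses where the model found none.

    Each item is (predicted_clauses, gold_clauses) for one document.
    This definition is an assumption made for illustration.
    """
    with_clauses = [(p, g) for p, g in results if g]
    if not with_clauses:
        return 0.0
    lazy = sum(1 for p, _ in with_clauses if not p)
    return lazy / len(with_clauses)
```

For example, if the model predicts three clause types and two of them are among the four in the gold set, precision is 2/3, recall is 1/2, and F1 is 4/7, or about 0.57.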

Turning Analysis into Action

The real value isn't in the analysis itself, but in the output. High-end GenAI tools now integrate directly into the review process. Imagine a screen where the contract is on the left and a risk dashboard is on the right. When you click a risk flag, the AI provides a redline suggestion: actual language you can copy and paste to fix the problem.

Beyond just reading, these systems are becoming "matter-aware." This means they don't just analyze a document in a vacuum; they know that this specific contract is for a high-stakes acquisition in the healthcare sector, so they automatically prioritize HIPAA Compliance and data privacy clauses over general commercial terms. They can also extract all key dates to automatically populate a corporate calendar, ensuring no renewal deadline is missed.
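The date-extraction step can be sketched as follows, assuming dates are written in the "30 June 2027" style. The `extract_key_dates` function and the single supported format are assumptions for illustration. A real pipeline would handle more formats and would push the results to a calendar system, which is left out here.

```python
import re
from datetime import date

MONTHS = {
    name: number
    for number, name in enumerate(
        ["January", "February", "March", "April", "May", "June", "July",
         "August", "September", "October", "November", "December"],
        start=1,
    )
}

# Assumed format: day, full month name, four-digit year.
DATE_RE = re.compile(r"\b(\d{1,2}) (" + "|".join(MONTHS) + r") (\d{4})\b")


def extract_key_dates(text: str) -> list[tuple[date, str]]:
    """Return (date, sentence) pairs sorted by date."""
    events = []
    for sentence in re.split(r"(?<=\.)\s+", text):
        for m in DATE_RE.finditer(sentence):
            day, month, year = m.groups()
            try:
                when = date(int(year), MONTHS[month], int(day))
            except ValueError:
                continue  # skip impossible dates such as "31 February 2027"
            events.append((when, sentence.strip()))
    return sorted(events)
```

Each pair keeps the sentence the date came from, so a calendar entry can carry the contract wording that explains why the date matters.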

The Human-in-the-Loop Necessity

Despite the power of these tools, the "hallucination" risk is real. An LLM might confidently claim a clause exists when it doesn't, or misinterpret a complex double-negative in a legal sentence. This is why the industry is moving toward a Human-in-the-Loop (HITL) model. The AI acts as the first-pass filter, highlighting areas of concern, but the final legal opinion always comes from a human.

To minimize errors, experts use Hybrid Extractive-Abstractive Summarization. The AI first extracts the exact sentences (extractive) and then writes a summary of what those sentences mean (abstractive). By keeping the original text linked to the summary, the lawyer can verify the AI's claims with a single click, removing the need to trust the model blindly.
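The link between summary and source can be enforced in code by checking that every extracted quote really appears in the contract. In this minimal sketch, `LinkedSummary` and `link_to_source` are hypothetical names, and the match is an exact substring search. A real system would probably normalize whitespace and line breaks before comparing.

```python
from dataclasses import dataclass


@dataclass
class LinkedSummary:
    summary: str  # abstractive: the model's paraphrase
    quote: str    # extractive: text the model claims is in the contract
    start: int    # character offset of the quote in the source, -1 if absent

    @property
    def verified(self) -> bool:
        """True only if the quote was found verbatim in the source."""
        return self.start >= 0


def link_to_source(source: str, quote: str, summary: str) -> LinkedSummary:
    """Attach a summary to its supporting quote and record where it occurs."""
    return LinkedSummary(summary=summary, quote=quote, start=source.find(quote))
```

A review screen can use `start` to jump to the passage. An item with `verified` set to `False` is a sign that the model may have invented or altered the quote, so it can be held back or marked for closer review.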

Can LLMs replace lawyers for contract review?

No. LLMs are excellent at pattern recognition and data extraction, but they lack professional judgment, ethical accountability, and a deep understanding of specific jurisdictional nuances. They function as highly efficient junior assistants, not as replacement attorneys.

How do you handle the privacy of sensitive legal documents?

Privacy is handled by using private VPC (Virtual Private Cloud) deployments of LLMs or through API agreements that explicitly forbid the model provider from using submitted data to train future versions of the model. Enterprise-grade legal AI tools typically use zero-retention policies.

What is the difference between a summary and a risk signal?

A summary provides a general overview of what the document says (e.g., "This is a 3-year service agreement"). A risk signal identifies a specific term that could cause harm or liability (e.g., "The indemnity clause is one-sided and favors the vendor excessively").

What are the best models for legal work today?

Proprietary models like those from the GPT-4 family generally outperform open-source models in legal reasoning and correctness. However, fine-tuned open-source models are becoming competitive for specific, narrow tasks like clause extraction.

How does hierarchical chunking help with long contracts?

Hierarchical chunking prevents the model from becoming overwhelmed by too much text. By breaking the contract into smaller, meaningful pieces and summarizing them iteratively, the AI can maintain a "memory" of the whole document without losing detail.
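The iterative step can be sketched as a loop that summarizes each chunk, then summarizes groups of summaries until a single one remains. The `summarize` argument stands in for whatever model call you use, and the function name and `group_size` parameter are assumptions for this example.

```python
from typing import Callable


def summarize_hierarchically(
    chunks: list[str],
    summarize: Callable[[str], str],
    group_size: int = 4,
) -> str:
    """Summarize chunks, then merge summaries level by level into one."""
    if group_size < 2:
        raise ValueError("group_size must be at least 2")
    if not chunks:
        return ""
    # Level 0: one summary per chunk.
    level = [summarize(chunk) for chunk in chunks]
    # Higher levels: summarize groups of summaries until one is left.
    while len(level) > 1:
        level = [
            summarize("\n".join(level[i:i + group_size]))
            for i in range(0, len(level), group_size)
        ]
    return level[0]
```

Each call sees at most `group_size` summaries at a time, so no single prompt has to hold the whole contract. The trade-off is that detail can be lost at every level, so it helps to keep the chunk-level summaries available for review.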

Next Steps for Implementation

If you're looking to bring LLM analysis into your workflow, start with a low-risk pilot. Don't start with your most complex merger; start with standard NDAs or vendor agreements. Define your "gold standard" library first: what does a "perfect" clause look like for your business? Once the AI can consistently identify those, move toward more complex risk detection and automated redlining.