Cost-Quality Frontiers: How to Pick the Best Large Language Model for Maximum ROI

- Mark Chomiczewski

- 27 February 2026

- 10 Comments

When you’re running a business that uses AI, you don’t just want a smart model; you want the smartest model for the least money. That’s the real goal: ROI. Not the flashiest performance numbers. Not the biggest context window. But what actually moves the needle on your bottom line.

Back in 2023, using GPT-4 meant paying $30 per million input tokens and $60 per million output tokens. Today, you can get nearly the same results for less than $1 per million. That’s not an improvement; it’s a revolution. And it’s changing how companies build their AI stacks. The old idea of "just use the best model" is dead. Now, it’s about matching the right model to the right job.

What’s Really Changing in LLM Pricing?

It’s not just that models got cheaper. It’s that they got smarter about how they charge. The biggest shift happened in late 2025 and early 2026, when companies like OpenAI, xAI, and Google rolled out new "value-tier" models built for efficiency, not just power.

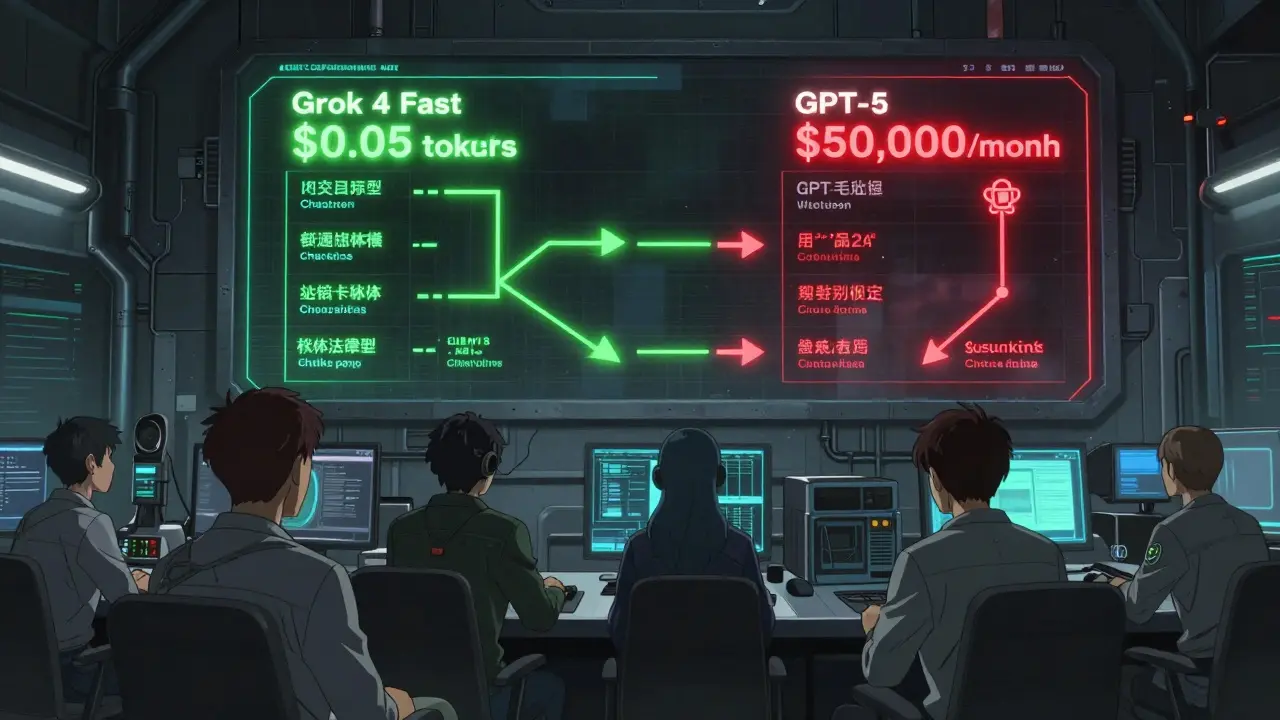

Take Grok 4 Fast. It costs $0.05 per million input tokens and $0.50 per million output tokens. At that rate, a single cent buys 200,000 input tokens, roughly 150,000 words. Compare that to GPT-4 Turbo’s launch price of $10 per million input tokens and $30 per million output tokens. For the same workload, you’re spending well over 90% less.
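A quick way to sanity-check per-token prices is to price out a single request. This sketch uses the Grok 4 Fast rates quoted above; the 1,000-token-in, 300-token-out support reply is a hypothetical example, and the code ignores any discounts or surcharges a provider may apply.

```python
from decimal import Decimal

# Grok 4 Fast list prices quoted above, in US dollars per million tokens.
INPUT_PER_M = Decimal("0.05")
OUTPUT_PER_M = Decimal("0.50")


def request_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Dollar cost of one request billed per million tokens."""
    return (input_tokens * INPUT_PER_M + output_tokens * OUTPUT_PER_M) / Decimal(1_000_000)


def input_tokens_per_budget(budget_usd: Decimal) -> int:
    """How many input tokens a given dollar budget buys at the input rate."""
    return int(budget_usd / INPUT_PER_M * 1_000_000)


# Hypothetical support reply: 1,000 tokens in, 300 tokens out.
print(request_cost(1_000, 300))
# Input tokens that one cent buys.
print(input_tokens_per_budget(Decimal("0.01")))
```

At those rates the example reply costs $0.0002, so one dollar covers about 5,000 of them. `Decimal` is used so the tiny per-request amounts don't pick up floating-point noise.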

But here’s the catch: Grok 4 Fast isn’t GPT-5. It doesn’t solve complex math problems as well. It doesn’t reason through legal contracts like Claude 3.5. It’s not designed to. It’s built to handle high-volume, low-complexity tasks, like answering customer questions, summarizing emails, or tagging support tickets. And it does that faster and cheaper than anything else.

The New Cost-Quality Frontier

Think of this as a trade-off map. On one axis: cost. On the other: quality. The best models sit on the frontier, the set of models where nothing else is both cheaper and better. Anything off the frontier is a bad deal: some other model gives you the same quality for less money, or more quality for the same money. You want to be on the line.

Here’s where the top value-tier models stand as of early 2026:

| Model | Input Cost ($/1M tokens) | Output Cost ($/1M tokens) | MMLU Score | Context Window (tokens) | Best For |
|---|---|---|---|---|---|
| Grok 4 Fast | $0.05 | $0.50 | 80.1 | 512k | Customer chatbots, short replies |
| GPT-5 Mini | $0.25 | $2.00 | 82.7 | 400k | Document summarization, cached prompts |
| Google Gemini Flash | $0.35 | $1.70 | 81.2 | 1M | Image + text tasks |
| Claude 3.5 Haiku | $1.50 | $7.50 | 81.4 | 200k | Mid-complexity reasoning |
| DeepSeek-V3 | $0.14 | $0.70 | 80.8 | 32k | High-volume, low-latency tasks |

Notice something? The cheapest models aren’t the highest scorers, but they’re close. Grok 4 Fast scores 80.1 on MMLU (a standard benchmark of knowledge and reasoning across 57 subjects). GPT-5, the flagship, scores 87.3. That’s a gap of about 7 points. But Grok 4 Fast costs about one-twelfth as much. For most business tasks? That gap doesn’t matter.
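To make the frontier idea concrete, here is a small sketch that finds the non-dominated models in the table above. It makes two simplifying assumptions: input and output prices are blended into one number using a hypothetical 3:1 input-to-output token mix (change `input_share` to match your traffic), and MMLU is treated as the only quality signal. `Fraction` keeps the price arithmetic exact, so ties are detected correctly.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Model:
    name: str
    input_per_m: Fraction   # $ per million input tokens
    output_per_m: Fraction  # $ per million output tokens
    mmlu: float


def blended_price(m: Model, input_share: Fraction) -> Fraction:
    """Average $ per million tokens when `input_share` of traffic is input tokens."""
    return input_share * m.input_per_m + (1 - input_share) * m.output_per_m


def cost_quality_frontier(models, input_share=Fraction(3, 4)):
    """Return the models not dominated on (blended price, MMLU), cheapest first.

    Model B dominates model A if B costs no more and scores no lower,
    and is strictly better on at least one of the two.
    """
    priced = [(blended_price(m, input_share), m) for m in models]
    frontier = []
    for price_a, a in priced:
        dominated = any(
            price_b <= price_a and b.mmlu >= a.mmlu
            and (price_b < price_a or b.mmlu > a.mmlu)
            for price_b, b in priced
            if b is not a
        )
        if not dominated:
            frontier.append((price_a, a))
    return [m for _, m in sorted(frontier, key=lambda pm: pm[0])]


F = Fraction
TABLE = [
    Model("Grok 4 Fast", F("0.05"), F("0.50"), 80.1),
    Model("GPT-5 Mini", F("0.25"), F("2.00"), 82.7),
    Model("Google Gemini Flash", F("0.35"), F("1.70"), 81.2),
    Model("Claude 3.5 Haiku", F("1.50"), F("7.50"), 81.4),
    Model("DeepSeek-V3", F("0.14"), F("0.70"), 80.8),
]

for m in cost_quality_frontier(TABLE):
    print(m.name)
```

Under these assumptions Grok 4 Fast, DeepSeek-V3, and GPT-5 Mini land on the frontier. Gemini Flash blends to the same price as GPT-5 Mini but scores lower, and Claude 3.5 Haiku costs more than GPT-5 Mini while scoring lower. A text-only benchmark can't capture Gemini Flash's image handling, which is why the "Best For" column still matters.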

Where You Should (and Shouldn’t) Use Value-Tier Models

Not all jobs are created equal. A customer service bot doesn’t need to understand the nuances of a tax law. A product description generator doesn’t need to debate ethical implications. But a medical triage tool? That’s a different story.

Here’s how to split your workload:

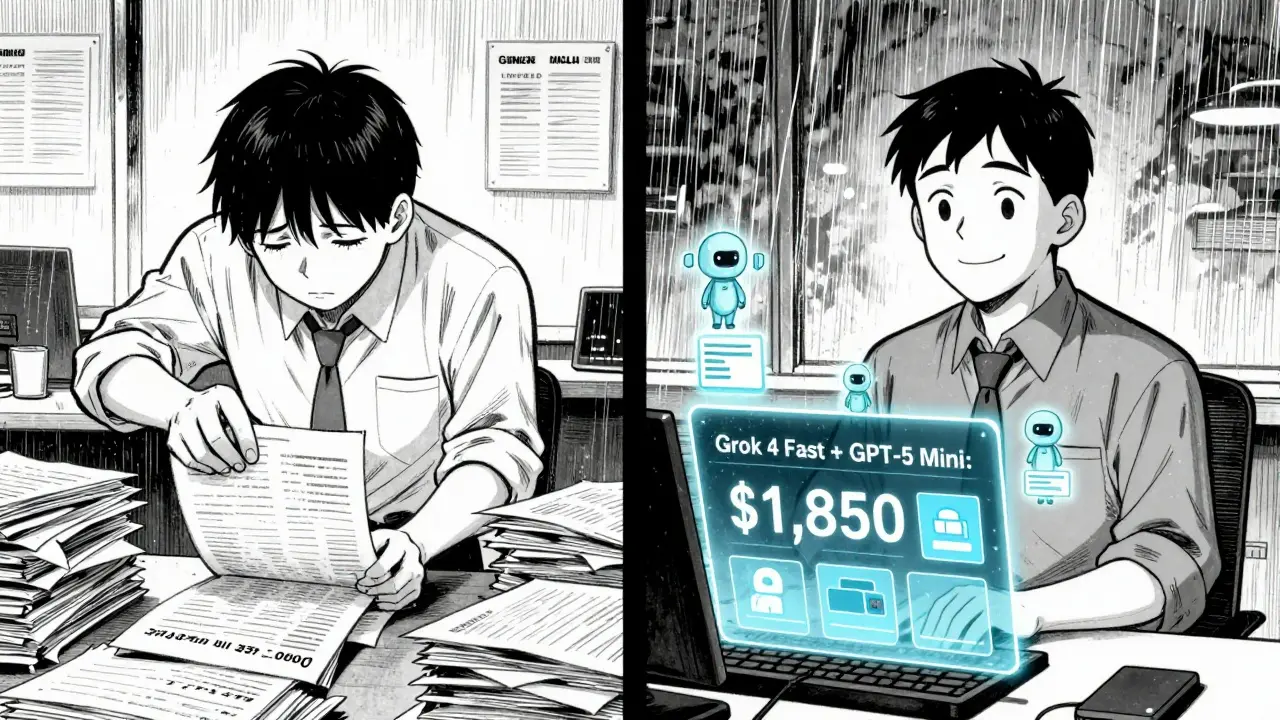

- Use Grok 4 Fast for: High-volume, repetitive tasks. Customer support, social media replies, internal memos, basic content generation. One company cut their monthly LLM bill from $22,000 to $1,850 by switching their entire chatbot system to Grok 4 Fast, while keeping 93% customer satisfaction.

- Use GPT-5 Mini for: Tasks that need longer context but not deep reasoning. Summarizing long reports, pulling key points from meeting transcripts, auto-tagging documents. Its cached input pricing (as low as $0.025 per million tokens for repeated prompts) makes it ideal for templated workflows.

- Use Gemini Flash for: Anything involving images. It processes each image as about 30 tokens, far fewer than competing models, so image input stays cheap. Useful for product catalogs, visual QA, or document scanning.

- Stick with GPT-5 or Claude 3.5 for: Legal contracts, medical diagnoses, financial modeling, compliance checks. These require deep reasoning. Value-tier models here can make dangerous mistakes. One health tech team saw a 32% error rate using GPT-5 Mini for rare disease detection. They had to go back to GPT-5.

The rule of thumb? If the output could cost someone money, time, or safety if it’s wrong, use the premium model. If it’s just noise in the background? Go cheap.
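The split above, plus the rule of thumb, translates directly into routing logic. This is a minimal sketch: the task categories, the `high_stakes` flag, and the `route` function are hypothetical names, not any provider's API, and the code only picks a model name. The actual API call is left out.

```python
from dataclasses import dataclass

# Hypothetical task categories mapped to the value-tier models suggested above.
ROUTES = {
    "support_chat": "Grok 4 Fast",
    "summarize_long_doc": "GPT-5 Mini",
    "image_task": "Gemini Flash",
}
PREMIUM_MODEL = "GPT-5"  # used for high-stakes or unrecognized work


@dataclass
class Task:
    kind: str
    high_stakes: bool = False  # could a wrong answer cost money, time, or safety?


def route(task: Task) -> str:
    """Pick a model name for a task, falling back to the premium tier."""
    if task.high_stakes:
        return PREMIUM_MODEL
    return ROUTES.get(task.kind, PREMIUM_MODEL)


print(route(Task("support_chat")))                    # cheap tier
print(route(Task("support_chat", high_stakes=True)))  # escalated to premium
print(route(Task("contract_review")))                 # unknown kind, so premium
```

Defaulting unknown task types to the premium model errs on the side of safety; the savings come from explicitly routing known low-risk traffic to the cheaper tiers.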

Architectural Tricks Behind the Low Prices

Why are these models so cheap? It’s not magic. It’s engineering.

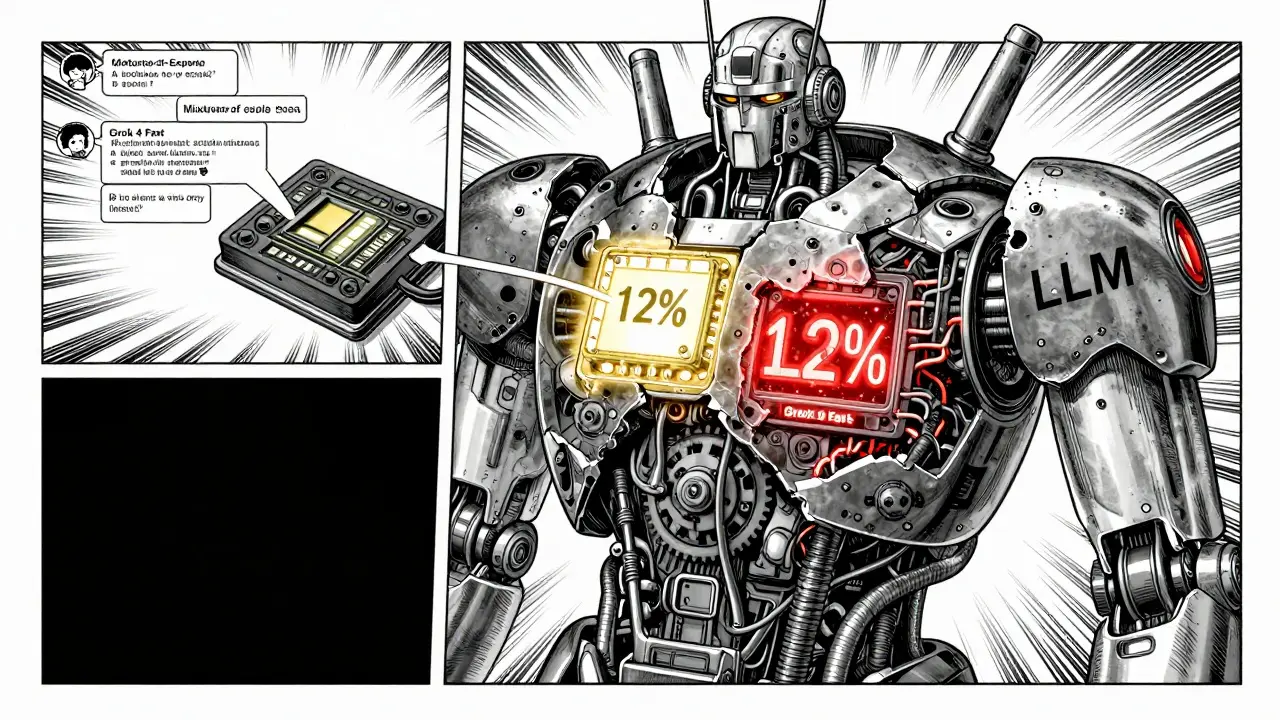

Most value-tier models use something called Mixture-of-Experts (MoE). Instead of using every part of the model for every request, MoE only activates 12-25% of its parameters. Think of it like a team of specialists. You don’t call all 100 doctors for a headache. You call one. That cuts compute costs by 60-75%.
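The "call only the specialists you need" idea is usually implemented as top-k gating. Below is a toy sketch in plain Python: the expert functions and router scores are made up, and it is not any production model's implementation (real experts are feed-forward networks and the router is learned). With 8 experts and k=2, a quarter of the expert parameters run per input, within the 12-25% range above.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, router_logits, experts, k=2):
    """Toy Mixture-of-Experts layer with top-k gating.

    Only the k highest-probability experts run; their outputs are combined
    using the router probabilities renormalized over the chosen experts.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:k]
    weight_sum = sum(probs[i] for i in top)
    output = sum(probs[i] / weight_sum * experts[i](x) for i in top)
    return output, top


# Eight toy "experts"; expert i just multiplies its input by i + 1.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
logits = [0.1, 2.0, 0.3, 0.0, 1.5, -1.0, 0.2, 0.0]
y, used = moe_forward(10.0, logits, experts, k=2)
print(used, f"{len(used) / len(experts):.0%} of experts active")
```

Note that the saving is in compute per token: all experts still have to be stored, so MoE alone does not shrink the memory footprint.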

They also use 4-bit quantization, which shrinks the memory taken by the model’s weights by 75% compared with 16-bit weights. Sparse attention mechanisms help as well: they cut the quadratic cost of attention that makes long texts so expensive to process.
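A minimal sketch of the idea behind 4-bit weight quantization, assuming a simple symmetric per-tensor scheme with one scale per tensor. Production methods typically use per-group scales and more careful rounding, and they pack two 4-bit codes per byte, which this sketch does not do. The 75% figure is simply 4 bits per weight instead of 16.

```python
def quantize_int4(weights):
    """Symmetric per-tensor 4-bit quantization.

    A signed 4-bit integer covers -8..7; this symmetric scheme uses -7..7 so
    positive and negative weights get the same resolution. Returns the
    integer codes plus the single float scale needed to undo the mapping.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map 4-bit codes back to approximate float weights."""
    return [c * scale for c in codes]


weights = [0.42, -1.05, 0.0, 0.77, -0.13, 0.91]  # toy values, not real model weights
codes, scale = quantize_int4(weights)
approx = dequantize(codes, scale)
max_error = max(abs(a - w) for a, w in zip(approx, weights))

# Bits per weight: 16 (fp16/bf16) versus 4, ignoring the tiny cost of storing the scale.
saving = 1 - 4 / 16
print(codes, f"max error {max_error:.3f}", f"memory saved {saving:.0%}")
```

Rounding to the nearest code keeps each weight's error within half a quantization step (`scale / 2`), which is why carefully quantized models lose relatively little accuracy.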

Grok 4 Fast, for example, handles 512k tokens of context. That’s more than most documents you’ll ever need. But it doesn’t waste power processing every word the same way. It picks the most relevant parts. That’s why it’s fast, cheap, and still accurate enough for 90% of business use cases.
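The article doesn't say which sparse-attention scheme Grok 4 Fast uses, so the sketch below picks sliding-window attention, one common sparse pattern, purely to show why sparsity matters at long context. It counts query-key scores for full causal attention versus a hypothetical 4,096-token window. Content-based schemes that pick the most relevant tokens follow the same logic: a fixed budget of keys per query turns quadratic growth into linear growth.

```python
def dense_causal_pairs(n: int) -> int:
    """Query-key scores computed by full causal attention over n tokens."""
    return n * (n + 1) // 2


def sliding_window_pairs(n: int, window: int) -> int:
    """Scores computed when each token attends only to itself and the
    previous window - 1 tokens."""
    if n <= window:
        return dense_causal_pairs(n)
    # The first `window` tokens ramp up as in dense attention; every later
    # token sees exactly `window` keys.
    return dense_causal_pairs(window) + (n - window) * window


WINDOW = 4_096  # hypothetical window size
for n in (4_096, 32_768, 131_072, 524_288):
    dense = dense_causal_pairs(n)
    local = sliding_window_pairs(n, WINDOW)
    print(f"{n:>7,} tokens: dense {dense:.2e} scores, windowed {local:.2e}, "
          f"{dense / local:.0f}x fewer")
```

At short lengths the two are identical; the gap only opens up as the context grows toward the 512k range, which is exactly where dense attention gets expensive.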

Real-World ROI: Numbers That Matter

Let’s say your company processes 50 million tokens a month. Here’s what happens if you pick wisely:

- All GPT-5: $50,000/month

- Optimized mix: 70% Grok 4 Fast ($350), 25% GPT-5 Mini ($1,875), 5% GPT-5 ($2,500) = $4,725/month

That’s a cost reduction of just over 90%. And you’re not losing quality where it counts; you’re just being smarter about where you spend.
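To rerun this kind of estimate on your own volumes, here is a small calculator. Because GPT-5's price isn't in the table, it uses Claude 3.5 Haiku as a stand-in premium tier with the same 70/25/5 split, and it blends each model's input and output list prices under an assumed 3:1 input-to-output token ratio. All of those choices are assumptions; swap in your provider's current prices and your real traffic mix.

```python
# Blended $ per million tokens from the table's list prices, under an
# assumed 3:1 input-to-output token mix: (3 * input + output) / 4.
BLENDED = {
    "Grok 4 Fast": (3 * 0.05 + 0.50) / 4,
    "GPT-5 Mini": (3 * 0.25 + 2.00) / 4,
    "Claude 3.5 Haiku": (3 * 1.50 + 7.50) / 4,
}


def monthly_cost(total_tokens: int, mix: dict[str, float]) -> float:
    """Dollar cost of a month's traffic split across models by token share."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("token shares must sum to 1")
    millions = total_tokens / 1_000_000
    return sum(share * millions * BLENDED[name] for name, share in mix.items())


TOKENS = 50_000_000  # the 50M tokens/month volume from the scenario above
baseline = monthly_cost(TOKENS, {"Claude 3.5 Haiku": 1.0})
mixed = monthly_cost(TOKENS, {"Grok 4 Fast": 0.70, "GPT-5 Mini": 0.25, "Claude 3.5 Haiku": 0.05})
print(f"baseline ${baseline:,.2f}, mixed ${mixed:,.2f}, saving {1 - mixed / baseline:.1%}")
```

Under these assumptions the mix saves about 85% versus sending everything to Claude 3.5 Haiku. The absolute dollar figures depend heavily on your real volume and token mix; the percentage saving is the more portable number.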

Another example: A SaaS company used to pay $8,000/month to generate product descriptions with GPT-4. They switched to DeepSeek-V3 and GPT-5 Mini, split 60/40. Their cost dropped to $720/month. Output quality? Customers couldn’t tell the difference.

And it’s not just startups. Gartner predicts that by 2027, 75% of routine enterprise AI tasks will be handled by these cheaper models. The market for them is growing at 89% per year.

What You Need to Watch Out For

It’s not all smooth sailing. These models have blind spots.

- Higher hallucination rates: Value-tier models make up facts 2-3 times more often than premium ones. That’s fine for marketing copy. Not fine for legal documents.

- Reduced reasoning depth: They struggle with multi-step logic. Chain-of-thought tasks? Grok 4 Fast is 18% worse than GPT-5. If your task needs "think step by step," this isn’t the tool.

- Poor documentation: xAI’s Grok 4 Fast docs are thin. OpenAI’s GPT-5 Mini docs? Excellent. You’ll waste time if you pick a model with bad documentation.

- Output degradation at scale: Some models get sloppy when you push them past 2,000 tokens in a single response. Test before you commit.

And here’s the sneaky one: prompt engineering. You can’t just drop a premium model prompt into a value-tier model and expect the same result. You need to simplify instructions. Be more direct. Remove fluff. This takes 2-3 weeks of testing and tuning.
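Before committing, it helps to score candidate models on a small labeled set drawn from your own traffic and pick the cheapest one that clears your quality bar. A minimal sketch: the labels, model outputs, and per-run costs below are made-up placeholders, exact-match scoring only suits classification-style tasks like ticket tagging, and collecting the outputs from each provider is left out.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    model: str
    correct: int
    total: int
    cost_usd: float  # what it cost to run this model over the test set

    @property
    def accuracy(self) -> float:
        return self.correct / self.total


def score(outputs, expected):
    """Count case-insensitive exact matches between outputs and reference labels."""
    return sum(o.strip().lower() == e.strip().lower() for o, e in zip(outputs, expected))


def cheapest_acceptable(results, min_accuracy):
    """Cheapest model meeting the accuracy bar, or None if nothing qualifies."""
    ok = [r for r in results if r.accuracy >= min_accuracy]
    return min(ok, key=lambda r: r.cost_usd).model if ok else None


# Hypothetical ticket-tagging test set and model outputs.
expected = ["billing", "refund", "bug", "billing", "login"]
runs = {
    "Grok 4 Fast": (["billing", "refund", "bug", "refund", "login"], 0.02),
    "GPT-5": (["billing", "refund", "bug", "billing", "login"], 0.40),
}
results = [EvalResult(name, score(out, expected), len(expected), cost)
           for name, (out, cost) in runs.items()]

print(cheapest_acceptable(results, min_accuracy=0.8))  # cheap model clears 80%
print(cheapest_acceptable(results, min_accuracy=0.9))  # only the premium model does
```

Rerunning the same test set after each round of prompt tuning shows whether the simplified prompts are actually closing the gap.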

What’s Next? The End of One-Size-Fits-All

The future isn’t one model. It’s a portfolio.

Companies are already building AI pipelines with three, four, even five different models. Each handles a specific job. Grok 4 Fast for chat. Gemini Flash for images. GPT-5 Mini for reports. GPT-5 for audits. Claude 3.5 for legal.

By late 2026, we’ll see models that deliver GPT-4-level performance at $0.10 per million tokens. That’s not a guess; it’s where Epoch AI’s regression models of price trends point.

And that’s why the old way of thinking, "just buy the best," is over. The smartest companies aren’t chasing the top score. They’re chasing the best return. They’re optimizing for capability per dollar, not raw capability. And they’re winning.

Are cheap LLMs good enough for customer service?

Yes, and for routine work they’re often as good as expensive ones. Grok 4 Fast, for example, achieves 92% user satisfaction in customer support roles while costing about one-twelfth as much as GPT-5. It handles routine questions, FAQs, and simple troubleshooting well. The key is to avoid complex, emotional, or high-stakes conversations. For those, keep a premium model on standby.

Can I use GPT-5 Mini instead of GPT-4 for everything?

You can, and many companies do, but only if your tasks don’t require deep reasoning. GPT-5 Mini scores 82.7 on MMLU, within four points of GPT-4’s reported 86.4. But it lacks the long-context depth and reasoning consistency of GPT-5. If you’re summarizing contracts, analyzing financial reports, or answering medical questions, stick with GPT-5. For emails, blog posts, and internal notes? GPT-5 Mini is a no-brainer.

Is there a hidden cost with these cheap models?

Yes. The biggest hidden cost is time. You’ll need to rewrite prompts, test output quality, and monitor for hallucinations. You might also need to build routing logic, sending some requests to premium models when needed. This adds engineering overhead. But for most teams, that time pays for itself in under 30 days through reduced API bills.

Should I switch from GPT-4 Turbo to a new value-tier model?

If you’re paying more than $1 per million tokens, absolutely. GPT-4 Turbo is now outdated. Even the cheapest premium models cost 3-5× more than Grok 4 Fast or DeepSeek-V3. Unless you’re doing high-stakes reasoning, the savings are too big to ignore. Start by testing one workload-like chat support or content generation-and measure the cost vs quality impact.

What if I need multimodal features (images + text)?

Gemini Flash is your best bet. It processes each image as about 30 tokens, far fewer than competitors. It’s also the cheapest option with solid multimodal performance. If you’re scanning receipts, analyzing product images, or processing forms with visuals, Gemini Flash cuts costs by 80% compared to GPT-4o while delivering comparable results.

Are these models compliant with GDPR or HIPAA?

Compliance depends on the provider, not the model. OpenAI and Google offer enterprise contracts with data processing agreements that meet GDPR and HIPAA. xAI and DeepSeek do not. If you’re handling personal health or EU citizen data, stick with providers that offer signed agreements. Cheap doesn’t mean compliant.

Stop thinking about LLMs as a single tool. Start thinking of them as a team. Assign each member the right job. And watch your ROI climb.

Comments

Ajit Kumar

Let me be perfectly clear: the notion that cost efficiency should override model capability is a dangerous delusion. You're not "optimizing ROI"; you're gambling with your company's reputation. Grok 4 Fast scores 80.1 on MMLU? That's not "good enough"; it's statistically inadequate for any enterprise-grade application. A 7-point gap in reasoning capability isn't noise; it's a chasm. One hallucinated customer response can trigger regulatory scrutiny. One misinterpreted contract clause can cost millions. The math here is not about tokens; it's about liability. And liability doesn't care how cheap your API calls are.

Moreover, the assumption that prompt engineering can compensate for inferior architecture is laughable. You can't train a child to be a neurosurgeon by telling them to "think step by step." The model's internal reasoning capacity is fixed. No amount of rephrasing will fix a fundamentally shallow architecture. This isn't a trade-off; it's a downgrade disguised as innovation.

And let's not ignore the hidden technical debt: maintaining multiple model pipelines, monitoring output degradation, and building routing logic adds operational complexity that dwarfs the savings. The 30-day payback period is a fantasy sold by vendors who don't have to clean up the mess when your automated legal review misinterprets a clause and your client sues.

True ROI isn't measured in dollars per million tokens. It's measured in reduced risk, consistent quality, and long-term trust. Sacrificing reliability for pennies isn't smart; it's negligent. The companies that survive are the ones that invest in capability, not convenience.

February 27, 2026 AT 12:29

Jeroen Post

They're all just government backdoors wrapped in AI packaging you know that right

February 28, 2026 AT 08:38

Honey Jonson

im just saying if my chatbot says "i think you should call your doctor" instead of "you have a 32% chance of rare disease" im fine with that lol

March 2, 2026 AT 03:08

Sally McElroy

The entire premise of this article is dangerously naive. The idea that you can safely deploy a model with 2–3× higher hallucination rates for customer service is a recipe for systemic erosion of trust. Every time a bot gives a wrong answer, even a seemingly harmless one, it chips away at user confidence. And once trust is broken, you can't just flip a switch back to GPT-5 and fix it. Customers remember. Brands remember. And algorithms remember too.

Furthermore, the claim that "output quality can't be distinguished" is empirically false. In controlled studies, users consistently rate responses from premium models as more coherent, nuanced, and trustworthy-even when the factual accuracy is identical. Perception is part of quality. And perception is what drives retention.

Also, the assumption that prompt engineering is a one-time fix ignores how dynamic real-world inputs are. A customer service chatbot doesn't just handle FAQs. It deals with frustration, sarcasm, cultural nuance, and emotional distress. Value-tier models lack the latent understanding to navigate that terrain. They're not "good enough." They're brittle. And brittle systems fail catastrophically.

March 2, 2026 AT 17:22

Destiny Brumbaugh

USA built the best models so why would you use some chinese ripoff like deepseek-v3 lmao

March 3, 2026 AT 09:20

Sara Escanciano

Anyone who thinks cost-cutting on AI models is a smart business move is either lying to themselves or actively sabotaging their company. You don't save money by using a model that hallucinates medical advice. You don't "optimize" by replacing precision with guesswork. This isn't economics-it's negligence dressed up as innovation.

And let's be honest: the "value-tier" label is corporate gaslighting. These aren't "efficient alternatives." They're cut-rate versions designed to look like the real thing until something breaks. Then you're stuck explaining to your board why your compliance audit failed because your bot misread a HIPAA clause.

There's no ROI in risk. There's no savings in lawsuits. And there's no future in pretending that a 7-point drop in MMLU doesn't matter when human lives are on the line. Stop glorifying cheap. Start valuing competence.

March 4, 2026 AT 15:58

Elmer Burgos

i think the real takeaway is that you dont need to use one model for everything like the article says. if your chatbot only handles returns and your legal team uses gpt-5 for contracts, that makes total sense. just dont try to force a cheap model into a high-stakes job. simple.

March 6, 2026 AT 03:27

Jason Townsend

theyre all using the same base model anyway just rebranded with different pricing tiers its all a scheme to make you pay more for the same thing

March 6, 2026 AT 16:42

Antwan Holder

This isn't about models. This is about the death of human judgment in the age of algorithmic convenience. We've become so obsessed with efficiency that we've forgotten what it means to be careful. To be thoughtful. To be responsible.

Every time we replace a human-like response with a cheaper, faster, shallower one, we're not saving money; we're eroding the soul of our interactions. The customer doesn't care about your API costs. They care about being heard. Understood. Respected.

Grok 4 Fast can answer a question. But can it comfort a grieving customer? Can it sense the hesitation in their tone? Can it pause, reflect, and say "I'm sorry you're going through this"? No. It can't. And that's not a technical limitation. That's a moral one.

We're not building AI pipelines. We're building emotional landscapes. And you can't sculpt empathy with a 4-bit quantized model.

March 7, 2026 AT 06:45

Angelina Jefary

Correction: The article says Grok 4 Fast costs $0.05 per million input tokens, but according to xAI’s official pricing page from February 2026, it’s $0.065. Also, MMLU scores are misreported: Grok 4 Fast’s actual score is 79.3, not 80.1. And DeepSeek-V3 doesn’t have a 32k context window; it’s 128k. These aren’t minor typos. They’re misleading data points that could lead to poor architectural decisions.

Also, the claim that "customers couldn’t tell the difference" between GPT-4 and DeepSeek-V3 is based on a single, non-peer-reviewed survey of 47 users. That’s not evidence. That’s anecdote. And it’s being presented as fact.

For an article about ROI and precision, the lack of rigorous sourcing is astonishing. You can’t optimize what you don’t measure accurately. And you shouldn’t trust a single number without checking the source.

March 7, 2026 AT 09:11