Debiasing LLMs via Fine-Tuning: How to Make AI Fairer and Safer

- Mark Chomiczewski

- 22 April 2026

- 6 Comments

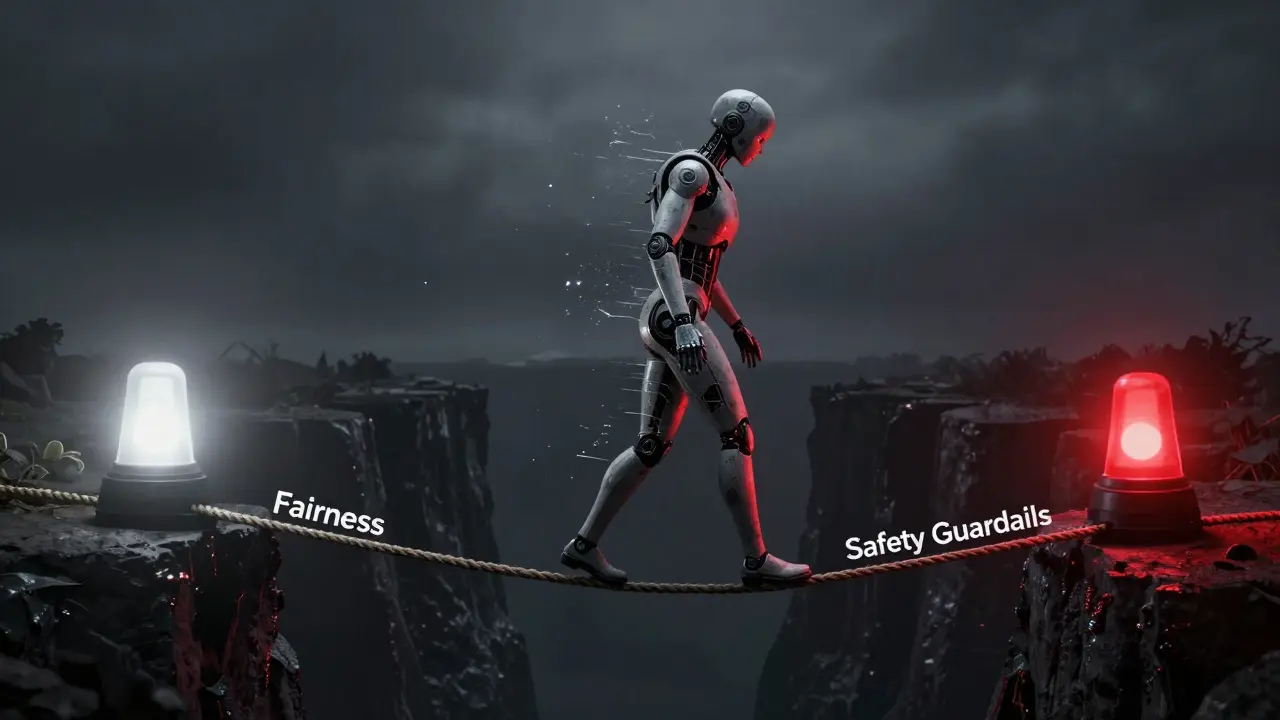

While we often talk about training AI from scratch, debiasing LLMs through fine-tuning is a much more practical route. It's essentially "continuing education" for the model. Instead of retraining the whole thing, we feed it targeted datasets to correct specific behavioral glitches. But there's a catch: the same process that removes bias can accidentally strip away the safety guardrails that stop the AI from giving dangerous advice. It's a high-stakes balancing act.

The Quick Take: Fine-Tuning for Fairness

- What it is: Training a pre-trained model on a small, curated dataset to correct specific biases.

- The Win: It's way cheaper than full retraining and can fix things prompts can't.

- The Risk: "Safety drift," where the model forgets its ethical boundaries while learning to be unbiased.

- Top Tech: LoRA (Low-Rank Adaptation) is the go-to for efficient, low-cost updates.

Fixing the "Overreaction": Tackling Extrapolation Bias

Some biases aren't about social prejudice but about logic. Take Extrapolation Bias, a systematic error where a model makes extreme predictions by overweighting recent trends relative to what the full history supports. If a stock goes up for three days, a biased model might predict it will moon forever, ignoring the rational benchmark.

Recent 2026 research shows that just asking a model to "be rational" in a prompt doesn't work. The bias is baked into the parameters. To fix this, developers use Supervised Fine-Tuning (SFT). They build a dataset where the model sees a sequence of past data and is taught the "rational" answer. Because the intervention happens at the parameter level, the model stops overreacting. In stock return tests, this approach moved point estimates from a biased -0.073 toward a more rational -0.027, which suggests that logic errors can be "unlearned."
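To make the dataset idea concrete, here is a minimal sketch of how such prompt/target pairs could be generated. Everything specific in it is an assumption for illustration: the AR(1) return process, the `rho` and `sigma` values, the prompt wording, and the `prompt`/`completion` field names. It is not the construction used in the research mentioned above.

```python
import json
import random

def simulate_returns(n, rho, sigma, rng):
    """Simulate an AR(1) return series: r_t = rho * r_{t-1} + noise (assumed process)."""
    series = [rng.gauss(0.0, sigma)]
    for _ in range(n - 1):
        series.append(rho * series[-1] + rng.gauss(0.0, sigma))
    return series

def make_sft_example(history, rho):
    """Pair a return history with the rational one-step-ahead forecast.

    Under the assumed AR(1) process, the conditional expectation of the next
    return is rho * (last return), so that value is used as the target.
    """
    prompt = (
        "Past returns: "
        + ", ".join(f"{r:+.3f}" for r in history)
        + "\nForecast the next return:"
    )
    target = rho * history[-1]
    return {"prompt": prompt, "completion": f" {target:+.3f}"}

def build_dataset(num_examples, window=5, rho=0.1, sigma=0.02, seed=0):
    """Build a list of SFT records from independently simulated histories."""
    rng = random.Random(seed)
    return [
        make_sft_example(simulate_returns(window, rho, sigma, rng), rho)
        for _ in range(num_examples)
    ]

if __name__ == "__main__":
    # Emit a few records as JSON lines, a common input format for SFT tooling.
    for row in build_dataset(3):
        print(json.dumps(row))
```

The point of the design is that the target is computed from the known data-generating process, not from the recent trend, so a model that simply extends the last few moves is penalized during training.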

Efficiency is Key: How LoRA Changes the Game

You usually can't afford to tweak every single parameter in a model with billions of weights. That's where LoRA (Low-Rank Adaptation) comes in. LoRA is a parameter-efficient fine-tuning technique that freezes the original model weights and trains small low-rank matrices (adapters) that sit alongside selected weight matrices.

Think of it like adding a small "correction filter" to a giant lens. You don't regrind the glass; you just adjust the filter. Because only a fraction of the parameters are updated, you save massive amounts of compute. Once training is done, the adapters can be merged back into the main model's weights, so they add no extra latency during a live chat. This makes it possible to reduce things like gender bias: some studies have shown that tweaking less than 1% of a model's parameters can significantly cut down on prejudice without destroying the model's general intelligence.
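The mechanics can be sketched in a few lines of plain Python. This is a toy illustration, not a training implementation: the class name `LoRALinear`, the helper names, and the matrix sizes are all made up for the example, and there is no gradient step. It shows only the frozen weight, the low-rank update, the merge, and the parameter arithmetic.

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def add_scaled(a, b, scale):
    """Return a + scale * b, element-wise."""
    return [[x + scale * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def rand_matrix(rows, cols, rng, std=0.1):
    return [[rng.gauss(0.0, std) for _ in range(cols)] for _ in range(rows)]

class LoRALinear:
    """Linear layer y = x @ W with a low-rank update: W + (alpha / r) * down @ up."""

    def __init__(self, d_in, d_out, r, alpha, rng):
        self.W = rand_matrix(d_in, d_out, rng)        # frozen pretrained weights
        self.down = rand_matrix(d_in, r, rng)         # trainable, d_in x r
        self.up = [[0.0] * d_out for _ in range(r)]   # trainable, r x d_out, starts at zero
        self.scale = alpha / r

    def forward(self, x):
        # Base path plus adapter path; with `up` at zero the adapter is a no-op.
        base = matmul(x, self.W)
        delta = matmul(matmul(x, self.down), self.up)
        return add_scaled(base, delta, self.scale)

    def merged_weight(self):
        # Fold the adapter into W so inference needs only one matrix multiply.
        return add_scaled(self.W, matmul(self.down, self.up), self.scale)

def trainable_fraction(d_in, d_out, r):
    """Adapter parameter count relative to the frozen matrix it adapts."""
    return r * (d_in + d_out) / (d_in * d_out)
```

For a 4096 x 4096 weight matrix with rank 8, `trainable_fraction(4096, 4096, 8)` is about 0.0039, so the adapter holds roughly 0.39% as many parameters as the matrix it adapts. Starting one of the two adapter matrices at zero means the fine-tuned model begins exactly where the pretrained model left off.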

| Method | Compute Cost | Effectiveness | Risk of "Forgetting" |
|---|---|---|---|
| Prompt Engineering | Near Zero | Low (Surface level) | None |
| Full Fine-Tuning | Very High | High | High (Catastrophic) |
| LoRA / PEFT | Low | High | Low |
| Regularized Tuning | Medium | Very High | Very Low |

The Danger Zone: When Debiasing Breaks Safety

Here is the scary part: fine-tuning can be a double-edged sword. Research from Stanford HAI (Human-Centered AI) found that the safety guardrails in models like GPT-3.5 Turbo and Llama-2-Chat are surprisingly fragile. The researchers found that fine-tuning a model on as few as 10 harmful examples could "break" it, making it willing to answer prompts it had previously been trained to refuse.

This happens because the model prioritizes the new patterns it's learning over the old safety rules. Even if you aren't trying to make a "jailbroken" AI, just updating the model to be less biased in one area can accidentally open a door to toxicity in another. This is known as a safety disruption, and it's a major headache for anyone deploying models in the real world.

Smarter Safeguards: Regularized Fine-Tuning

To stop models from going off the rails, researchers at Amazon Science developed attribute-controlled fine-tuning. Instead of just feeding the model new data, they use Adaptive Regularization, which is a method that applies mathematical constraints to ensure the model doesn't forget its core safety and quality standards while learning new behaviors.

They tested this on Llama-7B and Falcon-7B using a mix of toxic data (from ToxiGen) and general data (from Wikitext). The results were impressive. While standard fine-tuning often increased toxicity when the model tried to learn how to classify bad content, the regularized method actually reduced toxic output while *improving* the model's ability to identify it. Essentially, the model learned what toxicity looks like without deciding that it should start using it.
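The general shape of a regularized objective can be sketched as a task loss plus a penalty for drifting away from a frozen reference model. The sketch below is a generic stand-in, not the Amazon method: it uses a fixed weight `lam` and a KL penalty on a general-text batch, and the function names and toy logits are assumptions made for illustration. How the adaptive version sets its constraint is not covered here.

```python
import math

def softmax(logits):
    """Convert logits to probabilities, shifting by the max for stability."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits, target_index):
    """Negative log-probability of the correct class or token."""
    return -math.log(softmax(logits)[target_index])

def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same support."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)

def regularized_loss(task_logits, task_target, general_logits, reference_logits, lam):
    """Task loss plus lam * KL(reference || fine-tuned) on general text.

    task_logits:      fine-tuned model's output on a task example (e.g. toxic vs. not)
    general_logits:   fine-tuned model's output on a general-text example
    reference_logits: frozen original model's output on that same general-text example
    """
    task = cross_entropy(task_logits, task_target)
    drift = kl_divergence(softmax(reference_logits), softmax(general_logits))
    return task + lam * drift
```

When the fine-tuned model's predictions on general text match the reference, the penalty is zero and only the task loss remains. As its predictions drift, the penalty grows, which is the "leash" effect: the model can still learn the classification task, but moving its general behavior costs it.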

Practical Checklist for Debiasing Your Model

If you're looking to implement these techniques, don't just start training. Follow this flow to avoid bricking your model's safety:

- Audit Your Bias: Use open-source benchmarks to find where your model fails (e.g., gender stereotypes or financial extrapolation).

- Curate a "Rational" Dataset: Don't just provide correct answers; provide examples that counteract the specific biased pattern.

- Use PEFT/LoRA: Avoid full retraining to prevent "catastrophic forgetting" of general knowledge.

- Apply Regularization: Use domain-specific regularizers to keep the model's safety boundaries intact.

- Safety Red-Teaming: After fine-tuning, try to "break" the model with harmful prompts to see if the guardrails are still there.

- Monitor Out-of-Sample: Ensure the model generalizes to new data and isn't just memorizing the debiasing set.
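The red-teaming step in the checklist above can be turned into a simple before/after regression check. This is a rough sketch under loud assumptions: `base_generate` and `tuned_generate` are hypothetical callables that wrap whatever inference API you use, the refusal markers are a crude keyword heuristic, and the `max_drop` threshold is arbitrary. A real evaluation would use a curated harmful-prompt set and a stronger refusal classifier.

```python
# Crude, illustrative markers; real refusals are phrased in many more ways.
REFUSAL_MARKERS = ("i can't", "i cannot", "i won't", "i'm not able to")

def is_refusal(response):
    """Heuristically decide whether a response is a refusal."""
    text = response.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)

def refusal_rate(generate, prompts):
    """Fraction of prompts the model refuses; `generate` maps prompt -> response."""
    if not prompts:
        raise ValueError("prompts must not be empty")
    refused = sum(is_refusal(generate(p)) for p in prompts)
    return refused / len(prompts)

def safety_regressed(base_generate, tuned_generate, prompts, max_drop=0.05):
    """Compare refusal rates before and after fine-tuning on the same prompts.

    Returns (regressed, before, after), where `regressed` is True when the
    refusal rate fell by more than `max_drop`.
    """
    before = refusal_rate(base_generate, prompts)
    after = refusal_rate(tuned_generate, prompts)
    return (before - after) > max_drop, before, after
```

Running the same prompt set against both the original and the fine-tuned model matters more than the absolute numbers: the comparison isolates what the fine-tuning changed.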

Can't we just use better prompts to remove bias?

Prompts are great for surface-level changes, but they don't change the model's underlying weights. For systematic issues like extrapolation bias or deep-rooted stereotypes, the model will often revert to its biased behavior once the conversation becomes complex. Fine-tuning actually modifies the weights themselves, which makes the fix more durable and more effective.

What is catastrophic forgetting?

Catastrophic forgetting happens when a model is trained on new data and completely "overwrites" the knowledge it had before. For example, while trying to remove gender bias, a model might suddenly forget how to write Python code or lose its ability to summarize long documents. PEFT and LoRA mitigate this by only changing a small sliver of the parameters.

Is LoRA always better than full fine-tuning?

For most debiasing tasks, yes. LoRA is faster, requires way less VRAM, and is less likely to destroy the model's general capabilities. Full fine-tuning is usually only necessary when you are changing the model's core language or moving it to a completely different domain (like training a general model to be a specialized medical AI).

How does regularization prevent the model from becoming toxic?

Regularization acts like a leash. While the model is learning from a dataset that contains toxic examples (to learn how to recognize them), the regularizer penalizes the model if its weights shift too far toward generating that toxic language. It allows the model to learn the *concept* of toxicity without adopting the *behavior*.

Does fine-tuning for safety work on closed models like GPT-4?

While we don't have the weights for GPT-4, research on GPT-3.5 shows that even closed-access models are susceptible to safety disruption via fine-tuning. If a provider allows a fine-tuning API, there is always a risk that a small amount of malicious data can bypass the original safety alignment.

Comments

Mbuyiselwa Cindi

This is such a great breakdown of the technical side of debiasing! I've seen a lot of people struggle with the safety drift part, but mentioning LoRA as a practical fix is super helpful for anyone just starting out with their own models.

April 23, 2026 AT 19:21

Kathy Yip

It's weird to think that a model can basically "forget" how to be good just because it saw ten bad examples. Makes me wonder if the way we define fairness is just too fragile for these things to ever truly grasp without some kind of deeper moral framework that isn't just a dataset of examples... just a thought.

April 25, 2026 AT 08:23

Mike Marciniak

Funny how they call it "debiasing." It is just a fancy word for programming the AI to tell us exactly what the corporate overlords want us to believe. They are not fixing bias, they are installing a different bias that fits the narrative. The fact that it only takes ten data points to break the safety locks proves these guardrails are just theater to keep the masses compliant.

April 25, 2026 AT 18:05

Krzysztof Lasocki

Oh wow, so we can just add a "correction filter" and suddenly the AI is a saint. Truly revolutionary stuff here. I'm sure the world will be a utopia once we tweak 1% of some parameters and everything is just perfectly fair and safe for everyone forever.

April 26, 2026 AT 22:51

VIRENDER KAUL

The methodology described is rudimentary at best, and the results regarding extrapolation bias are merely marginal in a real-world high-frequency trading environment where volatility is the only constant. The author fails to acknowledge that supervised fine-tuning often leads to overfitting, which renders the model useless for actual prediction tasks in any professional capacity. This is a textbook example of academic optimism ignoring industrial reality.

April 28, 2026 AT 18:44

Henry Kelley

I think we should all try to be a bit more open to these new methods even if they aren't perfect yet. It's a little bit scary that it can break so easily, but it's better than just leaving the bias in there, right?

April 29, 2026 AT 23:32