Debugging Large Language Models: A Practical Guide to Diagnosing Errors and Hallucinations

- Mark Chomiczewski

- 3 May 2026

Traditional software debugging feels like hunting for a specific typo in a line of code. You find the error, you fix it, and the program runs. Debugging Large Language Models is completely different: it is a specialized discipline that involves diagnosing probabilistic outputs, hallucinations, and emergent behaviors. There is no single line of code to blame. Instead, you are dealing with complex interactions between model architecture, training data, and prompt engineering. As we move through 2026, this distinction has become critical. With AI integrated into high-stakes sectors like healthcare and finance, an error rate above 5% is often unacceptable. Understanding how to diagnose these errors isn't just a technical nicety; it's a business necessity.

Why LLM Debugging Is Not Like Software Debugging

In conventional development, if a function returns the wrong value, you trace the logic back to the source. With LLMs, the "source" is a statistical probability distribution over billions of parameters. The model doesn't "know" facts; it predicts the next likely token based on patterns in its training data. This probabilistic nature leads to two primary issues: logical errors in code generation and factual inaccuracies known as hallucinations.

When an LLM hallucinates, it isn't lying in the human sense. It is confidently generating text that fits the linguistic pattern but lacks grounding in reality. For example, a model might cite a non-existent legal case because the structure of the citation matches millions of real ones in its training set. Traditional unit tests can catch syntax errors, but they fail to detect semantic errors where the code runs without crashing but produces incorrect results. This gap is why specialized debugging frameworks have emerged since 2023.
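To make the gap between "runs without crashing" and "produces the right result" concrete, here is a minimal sketch. The function `average_order_value` and the helper `semantic_check` are hypothetical names invented for this illustration; the bug is the kind an LLM can plausibly emit in generated code.

```python
def average_order_value(order_totals):
    """Intended: mean order value. Bug: floor division silently drops the fraction."""
    return sum(order_totals) // len(order_totals)


# A smoke test only confirms that the code runs and returns a number.
smoke_result = average_order_value([10, 20, 30])
assert isinstance(smoke_result, int)


def semantic_check(fn, inputs, expected, tolerance=1e-9):
    """Compare the function's output with an independently computed expectation."""
    return abs(fn(inputs) - expected) <= tolerance


# The bug is invisible when the true mean happens to be a whole number...
weak_case_ok = semantic_check(average_order_value, [10, 20, 30], 20.0)
# ...and only surfaces on an input whose mean has a fractional part.
edge_case_ok = semantic_check(average_order_value, [10, 15], 12.5)

print(weak_case_ok, edge_case_ok)  # True False
```

The first case passes by coincidence, which is why a test set needs inputs chosen to separate the intended behavior from plausible wrong implementations.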

The Core Methodologies for Diagnosing LLM Errors

To debug an LLM effectively, you need to look at three distinct phases: pre-training, fine-tuning, and runtime execution. Each phase offers different levers to pull when things go wrong. Three methodologies cut across these phases:

- Prompt Tracing and System Logging: This is your first line of defense. By tracking input-output relationships across complex pipelines, you can identify where the context window was exceeded or where ambiguous instructions led to drift. Tools like LangSmith or Weights & Biases help visualize these traces.

- Automated Evaluation with Synthetic Test Cases: Using benchmarks like HumanEval (which contains 164 programming problems) provides quantitative metrics. However, relying solely on pass/fail rates is dangerous. A model might pass a test by chance rather than understanding the logic.

- Model Behavior Probing: Attribution tools such as Captum and SHAP estimate how much each input feature or token contributed to an output, which shows what the model is focusing on. This helps answer questions like, "Why did the model ignore this part of the prompt?"
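One way to reduce the "passed by chance" problem in automated evaluation is to sample several completions per problem and report pass@k instead of a single pass/fail. The sketch below uses the unbiased estimator from the paper that introduced HumanEval (Chen et al., 2021), where `n` is the number of samples drawn for a problem and `c` is how many of them passed. It handles sampling variance only; it does not help if the tests themselves are too weak.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate the probability that at least one of k samples passes.

    n: completions sampled for the problem
    c: completions that passed the tests
    k: sample budget being evaluated (1 <= k <= n)
    """
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    if n - c < k:
        # Fewer than k failures exist, so any k samples include a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# One lucky pass out of ten samples looks very different at k=1 and k=5.
single_try = pass_at_k(n=10, c=1, k=1)
five_tries = pass_at_k(n=10, c=1, k=5)
print(round(single_try, 3), round(five_tries, 3))  # 0.1 0.5
```

Averaging this value over all problems in the benchmark gives the headline score, and comparing k=1 with larger k shows how much of a result depends on lucky samples.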

Pre-training debugging focuses on the data itself. Research by Dr. Cameron Wolfe indicated that over 73% of hallucination errors trace back to imbalanced or low-quality training data. If your dataset contains contradictory information, the model will struggle to resolve it during inference. Fine-tuning debugging, on the other hand, uses Reinforcement Learning from Human Feedback (RLHF) or Reinforcement Learning from AI Feedback (RLAIF) to align the model’s outputs with specific enterprise KPIs.
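A first pass at finding contradictory training data can be very simple. The sketch below assumes a fine-tuning set stored as `(prompt, answer)` pairs; the function names and the sample records are invented for illustration. It only catches prompts that match after basic normalization, so real audits usually add fuzzy or embedding-based matching on top.

```python
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def find_contradictions(records):
    """Return prompts that appear with more than one distinct answer."""
    answers_by_prompt = defaultdict(set)
    for prompt, answer in records:
        answers_by_prompt[normalize(prompt)].add(normalize(answer))
    return {
        prompt: sorted(answers)
        for prompt, answers in answers_by_prompt.items()
        if len(answers) > 1
    }


# Hypothetical sample data: the first two rows disagree, the last two do not.
sample = [
    ("What is the boiling point of water at sea level?", "100 degrees Celsius"),
    ("what is the boiling point of water at  sea level?", "90 degrees Celsius"),
    ("Capital of France?", "Paris"),
    ("Capital of France?", "paris"),
]

conflicts = find_contradictions(sample)
for prompt, answers in conflicts.items():
    print(prompt, "->", answers)
```

Anything this check flags is worth a human look before the next training run, since the model has no way to know which of the conflicting answers is the intended one.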

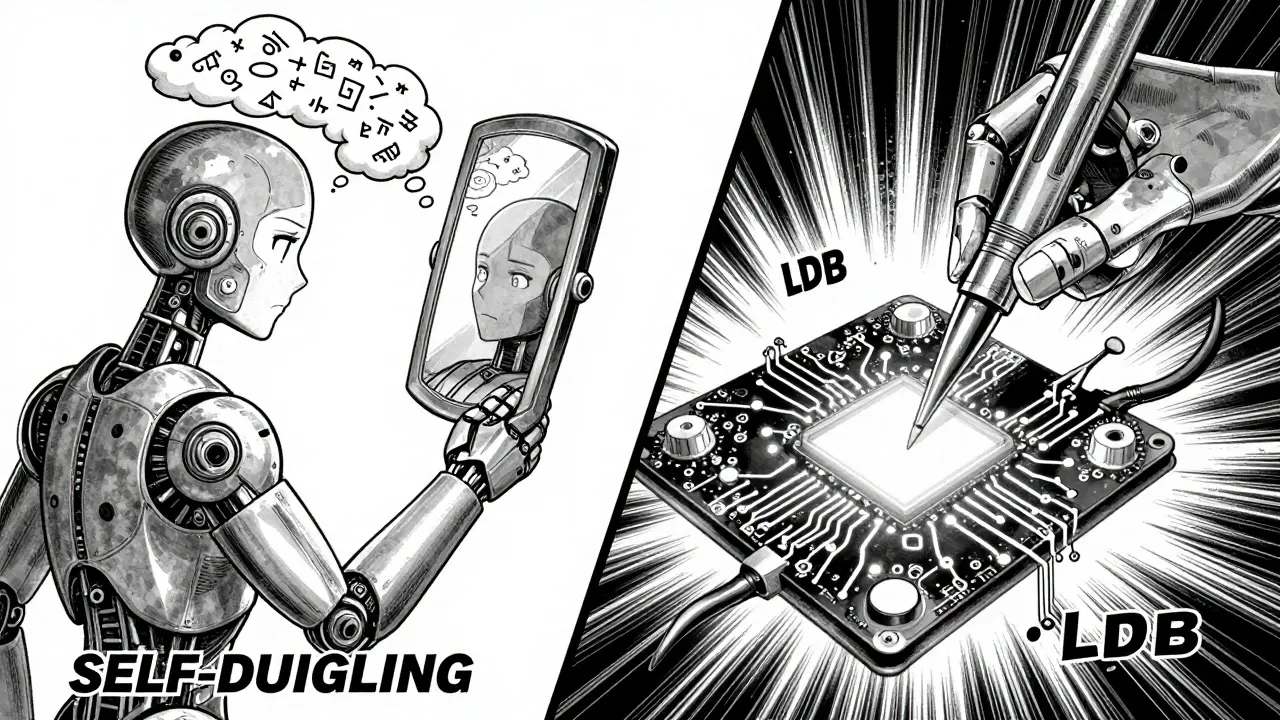

SELF-DEBUGGING vs. Execution-Based Debugging

Two major approaches dominate the current landscape: the SELF-DEBUGGING framework introduced by Chen et al. (2024) and execution-based methods like LDB (Large Language Model Debugger) developed by Zhong et al. (2024). Choosing between them depends on whether you have executable code with test cases or only natural language outputs.

| Feature | SELF-DEBUGGING | LDB (Execution-Based) |
|---|---|---|
| Core Mechanism | Iterative self-reflection using natural language explanations | Runtime monitoring of intermediate variables via control flow graphs |
| Best Use Case | Environments without unit tests (e.g., text-to-SQL, creative writing) | Code generation tasks with available test cases |
| Performance Gain | Up to 12% accuracy improvement on code generation; 2-3% on Spider benchmark | 8.7% higher precision than traditional approaches; 8.2% higher pass rates on HumanEval |
| Limitations | Struggles with semantic errors that pass basic checks; plateaus after 2 iterations | Fails completely if no test cases are visible; requires significant integration effort |
| Token Efficiency | Moderate; relies on multiple generations and feedback loops | High; batch debugging improves efficiency by 47.6% compared to iterative refinement |

SELF-DEBUGGING works by having the model generate a candidate output, explain its reasoning in natural language, and then use that explanation to refine the output. This mimics "rubber duck debugging," where explaining a problem aloud helps you find the solution. It excels in scenarios like the Spider text-to-SQL benchmark, where traditional unit tests aren't always available. However, it tends to plateau after two iterations, meaning it won't infinitely improve itself.
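The generate, explain, refine cycle can be sketched as a small loop. This is an illustration of the idea described above, not the authors' implementation: `generate`, `explain`, and `is_acceptable` are hypothetical callables you would back with real model calls and your own acceptance check, and the stub functions exist only so the example runs without a model.

```python
def self_debug(task, generate, explain, is_acceptable, max_rounds=2):
    """Refine a candidate by feeding the model its own explanation.

    Returns the final candidate and the number of refinement rounds used.
    max_rounds defaults to 2 because gains tend to plateau after that.
    """
    candidate = generate(task)
    for round_index in range(max_rounds):
        if is_acceptable(candidate):
            return candidate, round_index
        explanation = explain(task, candidate)
        revision_prompt = (
            f"{task}\n\n"
            f"Previous attempt:\n{candidate}\n\n"
            f"Explanation of the attempt:\n{explanation}\n\n"
            "Revise the attempt if the explanation does not match the task."
        )
        candidate = generate(revision_prompt)
    return candidate, max_rounds


# Stub "model" for demonstration only: it fixes the query once it sees feedback.
def fake_generate(prompt):
    if "Previous attempt" in prompt:
        return "SELECT name FROM users WHERE active = 1"
    return "SELECT name FROM users"


def fake_explain(task, candidate):
    return f"The query '{candidate}' returns every user, including inactive ones."


def mentions_active_filter(candidate):
    return "WHERE active" in candidate


final_query, rounds_used = self_debug(
    "List the names of active users.",
    fake_generate,
    fake_explain,
    mentions_active_filter,
)
print(final_query, rounds_used)
```

Capping the loop keeps token costs predictable, and logging `rounds_used` tells you how often the first attempt was already good enough.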

LDB, conversely, takes a more surgical approach. It segments execution traces into basic blocks based on control flow graphs. By monitoring intermediate variables at each breakpoint, it isolates errors with greater precision. If you are building a financial calculator, LDB can pinpoint exactly which variable drifted from the expected value. But if you are asking for a marketing slogan, LDB has nothing to grab onto.
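To get a feel for what "monitoring intermediate variables" means, the sketch below records local variables line by line using Python's standard `sys.settrace` hook. It is a deliberately simplified stand-in: LDB groups the trace into basic blocks from a control flow graph and asks the model to verify each block, whereas this example snapshots every line and applies a hand-written sanity rule. The function `buggy_balance` and the rule itself are invented for illustration.

```python
import sys


def trace_locals(func, *args):
    """Run func(*args) and snapshot its local variables before each line runs."""
    snapshots = []
    target_code = func.__code__

    def tracer(frame, event, arg):
        if frame.f_code is not target_code:
            return None  # ignore every other function
        if event == "line":
            offset = frame.f_lineno - target_code.co_firstlineno
            snapshots.append((offset, dict(frame.f_locals)))
        elif event == "return":
            snapshots.append(("return", dict(frame.f_locals)))
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        result = func(*args)
    finally:
        sys.settrace(previous)  # always restore the earlier trace hook
    return result, snapshots


def buggy_balance(principal, rate_percent, years):
    balance = principal
    for _ in range(years):
        interest = balance * rate_percent  # bug: missing division by 100
        balance = balance + interest
    return balance


result, snapshots = trace_locals(buggy_balance, 100.0, 5, 2)

# Sanity rule: for a rate below 100%, interest should never exceed the balance.
first_drift = next(
    snap for _, snap in snapshots
    if "interest" in snap and snap["interest"] > snap["balance"]
)
print(first_drift["interest"], first_drift["balance"])  # 500.0 100.0
```

The first snapshot that violates the rule points straight at the line that computed `interest`, which is the kind of localization a final pass/fail result cannot give you.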

Diagnosing Hallucinations: Beyond the Code

Hallucinations remain the most stubborn challenge in LLM deployment. Even with advanced debugging tools, state-of-the-art models still exhibit error rates between 7% and 15% on public benchmarks. Stanford’s HELM evaluation framework highlights that while models are getting smarter, they are also becoming more confident in their wrong answers.

To diagnose hallucinations, you must move beyond simple fact-checking. Start by implementing input attribution techniques. These tools identify which segments of the training data influenced a specific output. If a model cites a fake study, input attribution might reveal that it was heavily influenced by a small cluster of low-quality blog posts in its training set.

Another effective strategy is retrieval-augmented generation (RAG) validation. When using RAG, ensure your retrieval step is debugged separately. Often, the hallucination isn't in the LLM's generation capability but in the retriever fetching irrelevant documents. If the context provided to the model is noisy, the model will amplify that noise.
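Debugging the retriever separately can start with a relevance gate between retrieval and generation. The sketch below scores each retrieved document by how many of the query's terms it contains and drops anything under a threshold. Term overlap is a crude assumption chosen to keep the example dependency-free; production systems more often use embedding similarity or a reranking model, and the `min_relevance` value here is arbitrary.

```python
import re


def tokens(text):
    """Lowercased alphanumeric terms as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def relevance(query, document):
    """Fraction of query terms that also appear in the document."""
    query_terms = tokens(query)
    if not query_terms:
        return 0.0
    return len(query_terms & tokens(document)) / len(query_terms)


def validate_retrieval(query, documents, min_relevance=0.5):
    """Split retrieved documents into those kept as context and those dropped."""
    kept, dropped = [], []
    for document in documents:
        if relevance(query, document) >= min_relevance:
            kept.append(document)
        else:
            dropped.append(document)
    return kept, dropped


query = "refund policy for annual subscriptions"
retrieved = [
    "Annual subscriptions are eligible for a refund within 30 days under our refund policy.",
    "Our office is closed on public holidays.",
]
kept, dropped = validate_retrieval(query, retrieved)
print(len(kept), len(dropped))  # 1 1
```

Logging the `dropped` list alongside each answer makes it easy to tell whether a hallucination came from bad context or from the generation step.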

Enterprise implementations at companies like Anthropic have shown that combining pre-training anomaly detection with post-deployment monitoring can reduce hallucination rates from nearly 19% to under 6%. This wasn't achieved by a single tool but by a layered approach: cleaning the data, fine-tuning with high-quality examples, and using runtime monitors to flag suspicious outputs before they reach the user.

Practical Implementation Steps for Developers

If you are integrating LLM debugging into your workflow, start with these actionable steps. Don't try to boil the ocean; begin with high-impact areas.

- Establish Baseline Metrics: Before applying any debugging technique, measure your current error rate. Use a diverse test set that includes edge cases. Without a baseline, you cannot quantify improvement.

- Implement Prompt Tracing: Log every interaction, including the system prompt, user input, and model output. Tag logs with metadata like temperature settings and top-k values. This allows you to reproduce errors consistently.

- Choose Your Debugging Framework: If your application generates code, integrate LDB or similar execution-based tools. If it generates text or SQL, implement SELF-DEBUGGING loops. For general chatbots, focus on RLHF alignment.

- Set Up Automated Alerts: Configure your pipeline to alert you when confidence scores drop below a certain threshold or when outputs contain specific sensitive keywords. NIST’s AI Risk Management Framework recommends documenting these thresholds clearly.

- Review Training Data Quality: Allocate 12-15% of your project time to auditing your training data. Look for contradictions, outdated information, and biased samples. Clean data reduces the need for heavy-handed runtime corrections.
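For the prompt tracing step, a plain JSON Lines log is often enough to get started before adopting a dedicated tool. The sketch below is one possible record layout, not the schema of LangSmith or any other product: the `TraceRecord` class and its field names are assumptions made for this example. The fingerprint groups interactions that used identical prompts and sampling settings, which helps when you try to reproduce an error.

```python
import hashlib
import json
import time
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class TraceRecord:
    system_prompt: str
    user_input: str
    model_output: str
    temperature: float
    top_k: int
    model_name: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    timestamp: float = field(default_factory=time.time)

    def prompt_fingerprint(self) -> str:
        """Stable hash of everything that determines the request."""
        payload = json.dumps(
            [self.system_prompt, self.user_input,
             self.temperature, self.top_k, self.model_name]
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]


def to_json_line(record: TraceRecord) -> str:
    """Serialize one interaction as a single JSON Lines entry."""
    data = asdict(record)
    data["prompt_fingerprint"] = record.prompt_fingerprint()
    return json.dumps(data, sort_keys=True)


first = TraceRecord("You are a billing assistant.", "Why was I charged twice?",
                    "I found two invoices...", 0.2, 40, "example-model")
second = TraceRecord("You are a billing assistant.", "Why was I charged twice?",
                     "It looks like a duplicate...", 0.2, 40, "example-model")

print(to_json_line(first))
print(first.prompt_fingerprint() == second.prompt_fingerprint())  # True
```

Two records with the same fingerprint but different outputs are a quick signal that sampling randomness, rather than the prompt, explains the difference.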

Remember that debugging is not a one-time event. As your model evolves and new data becomes available, old fixes may break. Continuous monitoring is essential. The learning curve for these tools is steep: developers report needing 3-4 weeks of dedicated practice to master prompt tracing and up to 8 weeks for execution-based debugging. Invest in team training early.
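Continuous monitoring can begin with two small checks: a per-output alert and a rolling error rate compared against your baseline. In the sketch below, the `confidence` value is assumed to come from whatever scoring you already have (for example an evaluator model or token log-probabilities), and the thresholds and sensitive terms are placeholders to replace with your own documented values.

```python
from collections import deque


def check_output(text, confidence, min_confidence=0.7,
                 sensitive_terms=("ssn", "diagnosis")):
    """Return a list of alert reasons for a single model output."""
    alerts = []
    if confidence < min_confidence:
        alerts.append(f"low_confidence:{confidence:.2f}")
    lowered = text.lower()
    for term in sensitive_terms:
        if term in lowered:
            alerts.append(f"sensitive_term:{term}")
    return alerts


class RollingErrorRate:
    """Track the error rate over the most recent `window` outputs."""

    def __init__(self, window=100):
        self.outcomes = deque(maxlen=window)

    def record(self, is_error):
        self.outcomes.append(bool(is_error))

    def rate(self):
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def regressed(self, baseline, margin=0.02):
        """True when the recent rate exceeds the baseline by more than margin."""
        return self.rate() > baseline + margin


alerts = check_output("Patient diagnosis attached.", confidence=0.55)

monitor = RollingErrorRate(window=10)
for is_error in [False] * 8 + [True] * 2:
    monitor.record(is_error)

print(alerts)
print(monitor.rate(), monitor.regressed(baseline=0.05))  # 0.2 True
```

Because the window only keeps recent outcomes, a fix that quietly stops working shows up as a regression within the next window instead of being averaged away by older results.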

The Future of Self-Healing Models

We are moving toward a future where models debug themselves in real time. Gartner has predicted that 45% of enterprises will implement "self-healing LLMs" by 2026. These systems detect anomalies during inference and automatically adjust their parameters or retrieve additional context to correct course without human intervention.

However, experts caution against over-reliance on automation. Professor Yann LeCun noted in early 2024 that fundamental architectural changes may be needed to eliminate hallucinations entirely. Current techniques address symptoms, not root causes. Until we move beyond transformer architectures, debugging will remain a necessary, albeit imperfect, part of the AI lifecycle.

For now, the best approach is hybrid. Combine automated debugging tools with human-in-the-loop review for high-stakes decisions. Use SELF-DEBUGGING to improve code quality, LDB to verify logical consistency, and rigorous data auditing to minimize hallucinations. By treating LLM debugging as a continuous engineering discipline rather than a one-off fix, you can build applications that are not only intelligent but reliable.

What is the difference between SELF-DEBUGGING and LDB?

SELF-DEBUGGING is a framework where the model iteratively refines its own output using natural language explanations, making it ideal for tasks without executable tests like text-to-SQL. LDB (Large Language Model Debugger) is an execution-based tool that monitors intermediate variables in code execution traces, providing higher precision for code generation tasks where unit tests are available.

How can I reduce hallucinations in my LLM application?

Reduce hallucinations by auditing your training data for contradictions and bias, implementing retrieval-augmented generation (RAG) with strict relevance filters, and using input attribution tools to identify problematic data sources. Additionally, setting lower temperature values during inference can decrease randomness and improve factual consistency.
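The effect of temperature is easy to see on a toy example. The sketch below applies the standard temperature-scaled softmax to three made-up logits; lowering the temperature concentrates probability on the top-scoring token. That reduces randomness, but it does not make the top-scoring token correct, so treat it as one lever among several.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities, dividing by temperature first."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
warm = softmax_with_temperature(logits, temperature=1.0)
cold = softmax_with_temperature(logits, temperature=0.5)

print([round(p, 3) for p in warm])
print([round(p, 3) for p in cold])
print(cold[0] > warm[0])  # True: the top token gets more probability mass
```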

Is LLM debugging suitable for production environments?

Yes, but it requires careful implementation. Runtime debugging tools like LDB add latency, so they should be used selectively for high-stakes transactions. Pre-training and fine-tuning debugging are essential for all production models. Enterprise guidelines suggest maintaining hallucination rates below 5% for regulated industries, which often requires a combination of automated tools and human oversight.

What are the limitations of current LLM debugging techniques?

Current techniques struggle with semantic errors that pass unit tests but fail functional requirements. SELF-DEBUGGING often plateaus after a few iterations, and LDB cannot function without executable code. Additionally, debugging tools require significant computational resources and expertise, with developers reporting 3-8 weeks of learning time to achieve proficiency.

Which debugging tools are best for beginners?

Beginners should start with prompt tracing tools like LangSmith or Weights & Biases, which offer user-friendly interfaces and comprehensive logging. These tools provide immediate visibility into input-output relationships without requiring deep knowledge of model internals. Once comfortable, developers can explore more advanced frameworks like SELF-DEBUGGING or LDB.