Training Data Pipelines for Generative AI: Deduplication, Filtering, and Mixture Design

- Mark Chomiczewski

- 23 March 2026

- 10 Comments

Generative AI models don’t learn from raw data. They learn from cleaned, curated, and carefully balanced datasets. If your training data is full of duplicates, toxic text, or skewed sources, your model will be too. That’s why training data pipelines - the hidden engines behind every powerful LLM - are more important than the model architecture itself.

Think of it this way: you wouldn’t train a chef by feeding them 10,000 copies of the same recipe, half-burnt meals, and a single salad. You’d give them a diverse, high-quality menu. That’s what a good data pipeline does. It takes messy, noisy, massive datasets - often pulled from the open web - and turns them into something a model can actually learn from.

Why Data Pipelines Matter More Than You Think

Most people assume the magic of ChatGPT or Claude comes from massive neural networks. It doesn’t. The real secret sauce is the data pipeline. Microsoft’s own research shows that models trained on cleaned datasets perform up to 22% better in output quality than those trained on raw, unprocessed data. Why? Because models don’t know what’s wrong. If you feed them 10,000 versions of the same Wikipedia page, they’ll think that’s the only way to write. If you feed them toxic or biased content, they’ll repeat it confidently.

CDInsights found that 78% of failed model iterations traced back to untracked changes in training data. Teams spent weeks tuning hyperparameters, only to realize the issue was a single corrupted data file added during a pipeline update. That’s why modern pipelines aren’t just about cleaning - they’re about versioning, tracking, and reproducibility.

Deduplication: Cutting the Clutter

Web crawls are messy. The same article appears on 200 different blogs. A Reddit thread gets copied into 50 training datasets. A GitHub code snippet is reused across 10,000 repositories. Without deduplication, your model wastes time learning the same thing over and over.

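Modern pipelines use MinHash and Locality-Sensitive Hashing (LSH) to find near-duplicates - not just exact copies. These algorithms don’t compare every document to every other one (that would take years). Instead, each document is broken into overlapping word sequences (shingles), and MinHash compresses that set into a short signature whose overlap with another signature estimates how similar the two documents are. LSH then groups signatures into buckets so that only documents landing in the same bucket get compared. If the estimated similarity of a pair is above a chosen threshold, such as 90%, the pair is flagged as duplicates.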
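To make the MinHash and LSH idea concrete, here is a minimal sketch using only the Python standard library. The signature length, band count, shingle size, and function names are all assumptions chosen for illustration, not values from any production pipeline.

```python
import hashlib
from collections import defaultdict
from itertools import combinations

NUM_HASHES = 64  # signature length (assumed value)
BANDS = 16       # LSH bands; each band covers NUM_HASHES // BANDS rows


def shingles(text, k=5):
    """Return the set of overlapping k-word shingles in a text."""
    words = text.lower().split()
    return {" ".join(words[i:i + k]) for i in range(max(1, len(words) - k + 1))}


def seeded_hash(seed, token):
    """64-bit hash of a token; the seed selects one of NUM_HASHES hash functions."""
    digest = hashlib.blake2b(
        token.encode("utf-8"), digest_size=8, salt=seed.to_bytes(8, "little")
    ).digest()
    return int.from_bytes(digest, "little")


def minhash(text):
    """MinHash signature: the minimum hash of the shingle set under each seed."""
    shingle_set = shingles(text)
    return [min(seeded_hash(seed, s) for s in shingle_set) for seed in range(NUM_HASHES)]


def estimated_jaccard(sig_a, sig_b):
    """Fraction of matching signature slots, an estimate of Jaccard similarity."""
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


def candidate_pairs(signatures):
    """LSH banding: documents that share any identical band become candidates."""
    rows = NUM_HASHES // BANDS
    buckets = defaultdict(set)
    for doc_id, sig in signatures.items():
        for band in range(BANDS):
            buckets[(band, tuple(sig[band * rows:(band + 1) * rows]))].add(doc_id)
    pairs = set()
    for ids in buckets.values():
        pairs.update(combinations(sorted(ids), 2))
    return pairs


def near_duplicates(docs, threshold=0.9):
    """Return candidate pairs whose estimated similarity meets the threshold."""
    sigs = {doc_id: minhash(text) for doc_id, text in docs.items()}
    return {
        pair for pair in candidate_pairs(sigs)
        if estimated_jaccard(sigs[pair[0]], sigs[pair[1]]) >= threshold
    }
```

Only pairs that collide in at least one band are ever compared, which is what avoids the all-pairs comparison. More bands with fewer rows each catch lower-similarity pairs at the cost of more candidates to verify.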

Meta’s Llama 3 pipeline removed 28% of its training data as duplicates. Deduplication jobs orchestrated with Apache Airflow have been tested to detect 98.7% of duplicates in large text corpora. The result? Smaller datasets, faster training, and better generalization. One GitHub user cut training costs by $28,000 per model iteration just by fixing their deduplication step.

But it’s not just about removing exact matches. Paragraph-level deduplication is now standard. If three paragraphs in a 10-page document are copied from another source, you remove just those paragraphs - not the whole document. This preserves useful context while cutting redundancy.
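A rough sketch of paragraph-level deduplication follows. It assumes paragraphs are separated by blank lines and only catches exact matches after whitespace and case normalization; catching near-duplicate paragraphs would need a similarity method such as MinHash on top. The function names are illustrative.

```python
import hashlib
import re


def normalize(paragraph):
    """Collapse whitespace and lowercase so trivial variations hash the same."""
    return re.sub(r"\s+", " ", paragraph).strip().lower()


def dedup_paragraphs(documents):
    """Drop any paragraph already seen earlier in the corpus, keep the rest."""
    seen = set()
    cleaned = []
    for doc in documents:
        kept = []
        for para in doc.split("\n\n"):
            norm = normalize(para)
            if not norm:
                continue  # skip empty paragraphs
            key = hashlib.sha1(norm.encode("utf-8")).hexdigest()
            if key not in seen:
                seen.add(key)
                kept.append(para.strip())
        cleaned.append("\n\n".join(kept))
    return cleaned
```

The first occurrence of a paragraph wins, so the order in which documents are processed decides which copy survives.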

Filtering: Keeping the Good, Throwing Out the Bad

Deduplication removes repetition. Filtering removes garbage.

Training data pipelines now use multiple filters stacked together:

- Toxic content: Tools like Perspective API scan for hate speech, threats, or harassment. Google’s Dataflow system catches 99.2% of toxic text - but doesn’t tell you how.

- Quality scoring: Perplexity measures how “surprising” a text is to a reference language model. Very low perplexity usually means boring, repetitive, or templated (often AI-generated) text. Very high perplexity can mean nonsense. The goal? Find the sweet spot.

- Language and domain filters: If you’re building a medical AI, you don’t want 15% of your data from cooking blogs. Filters block irrelevant domains.

- Metadata filters: Remove pages with missing titles, broken links, or no author.
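The perplexity "sweet spot" from the quality-scoring filter can be illustrated with a toy model. This sketch uses a unigram model with add-one smoothing purely to show the arithmetic; real pipelines would use a much stronger reference model, and the band limits are assumptions you would tune on your own data.

```python
import math
from collections import Counter


class UnigramModel:
    """Toy reference model: word frequencies with add-one smoothing."""

    def __init__(self, reference_texts):
        self.counts = Counter(
            word for text in reference_texts for word in text.lower().split()
        )
        self.total = sum(self.counts.values())
        self.vocab = len(self.counts) + 1  # one extra slot for unseen words

    def perplexity(self, text):
        """exp of the average negative log-probability per word."""
        words = text.lower().split()
        if not words:
            return float("inf")
        log_prob = sum(
            math.log((self.counts[w] + 1) / (self.total + self.vocab)) for w in words
        )
        return math.exp(-log_prob / len(words))


def in_quality_band(model, text, low, high):
    """Keep text whose perplexity is neither suspiciously low nor very high."""
    return low <= model.perplexity(text) <= high
```

With a reference corpus of two short sentences, a string like "the the the the" scores lower than an ordinary sentence, and a string of unseen tokens scores highest, so a band between the two extremes keeps the ordinary sentence.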

AI Accelerator Institute found that filtering must happen in under 50ms per document to keep pipelines running at scale. That means you can’t run deep learning models on every piece of text. You use lightweight classifiers - often rule-based or shallow neural nets - to make quick decisions.
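As a sketch of what stacked lightweight filters might look like, the example below chains a few rule-based checks and stops at the first failure. The individual rules, their thresholds, and the blocklist terms are hypothetical placeholders; the 50ms budget is the figure quoted above.

```python
import re
import time

BLOCKLIST = {"badword1", "badword2"}  # hypothetical placeholder terms


def has_title(doc):
    return bool(doc.get("title"))


def long_enough(doc):
    return len(doc["text"].split()) >= 5


def mostly_alphabetic(doc):
    """Reject text dominated by symbols or digits (threshold is an assumption)."""
    text = doc["text"]
    letters = sum(ch.isalpha() or ch.isspace() for ch in text)
    return letters / max(1, len(text)) >= 0.8


def no_blocked_terms(doc):
    tokens = set(re.findall(r"[a-z0-9']+", doc["text"].lower()))
    return not (tokens & BLOCKLIST)


# Cheapest checks first, so most rejects cost almost nothing.
FILTERS = [has_title, long_enough, mostly_alphabetic, no_blocked_terms]


def run_filters(doc, filters=FILTERS):
    """Return (kept, name_of_rejecting_filter); stops at the first failure."""
    for check in filters:
        if not check(doc):
            return False, check.__name__
    return True, None


def filter_with_budget(doc, budget_ms=50.0):
    """Run the stack and report whether it stayed within the latency budget."""
    start = time.perf_counter()
    kept, reason = run_filters(doc)
    elapsed_ms = (time.perf_counter() - start) * 1000
    return kept, reason, elapsed_ms <= budget_ms
```

Returning the name of the rejecting filter makes it possible to audit later how much data each rule removed.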

There’s a trade-off. Dr. Yann LeCun’s research shows that over-filtering removes “imperfect but diverse” content, which can reduce model creativity by 15%. A pipeline that deletes every typo or awkward sentence might also delete the unique voice that makes human writing valuable. The goal isn’t perfection - it’s balance.

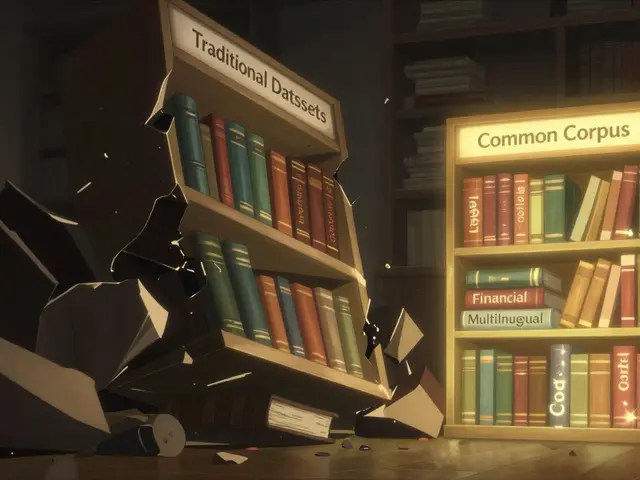

Mixture Design: The Art of Balancing Sources

Not all data is created equal. A model trained only on Reddit will be sarcastic but shallow. One trained only on scientific papers will be precise but rigid.

Mixture design is about how much of each source to include. Anthropic’s Claude 3 uses a 60-20-15-5 split: 60% general web, 20% technical docs, 15% code, 5% scientific literature. Why? Because the model needs to understand casual conversation and solve math problems.
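One simple way to apply a mixture like the 60-20-15-5 split is weighted sampling: pick a source according to its weight, then pick a record from that source. The sketch below assumes each source fits in an in-memory list; the source names and helper functions are illustrative only.

```python
import random

# Weights taken from the split described above; names are illustrative.
MIXTURE = {"web": 0.60, "technical_docs": 0.20, "code": 0.15, "scientific": 0.05}


def validate(mixture):
    """Weights must be non-negative and sum to 1."""
    if any(weight < 0 for weight in mixture.values()):
        raise ValueError("mixture weights must be non-negative")
    if abs(sum(mixture.values()) - 1.0) > 1e-9:
        raise ValueError("mixture weights must sum to 1")


def sample_batch(sources, mixture, batch_size, rng):
    """Draw (source_name, record) pairs so sources appear at their target rate."""
    validate(mixture)
    names = list(mixture)
    weights = [mixture[name] for name in names]
    picks = rng.choices(names, weights=weights, k=batch_size)
    return [(name, rng.choice(sources[name])) for name in picks]
```

Sampling with replacement means a small source can be repeated many times over a long run, so it is worth tracking how many passes each source has had.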

Enterprise pipelines don’t just pick ratios - they adjust them dynamically. Microsoft’s new intelligent mixture balancing system watches how well the model performs on real tasks. If code generation drops, it automatically increases the code data ratio. This reduces manual tuning by 65%.
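The feedback idea can be sketched with a simple heuristic: boost any source whose evaluation score falls short of its target, then renormalize. This is a hypothetical rule written for illustration, not a description of how any vendor's system works; the `gain` and `floor` parameters are assumptions.

```python
def rebalance(mixture, scores, targets, gain=2.0, floor=0.01):
    """Shift weight toward sources whose score is below target, then renormalize.

    mixture: {source: weight}, scores/targets: {source: value in [0, 1]}.
    """
    adjusted = {}
    for source, weight in mixture.items():
        shortfall = max(0.0, targets[source] - scores[source])
        adjusted[source] = max(floor, weight * (1.0 + gain * shortfall))
    total = sum(adjusted.values())
    return {source: weight / total for source, weight in adjusted.items()}
```

For example, if code scores 0.50 against a target of 0.70 while every other source meets its target, the code weight rises from 0.15 to roughly 0.198 and the other weights shrink proportionally.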

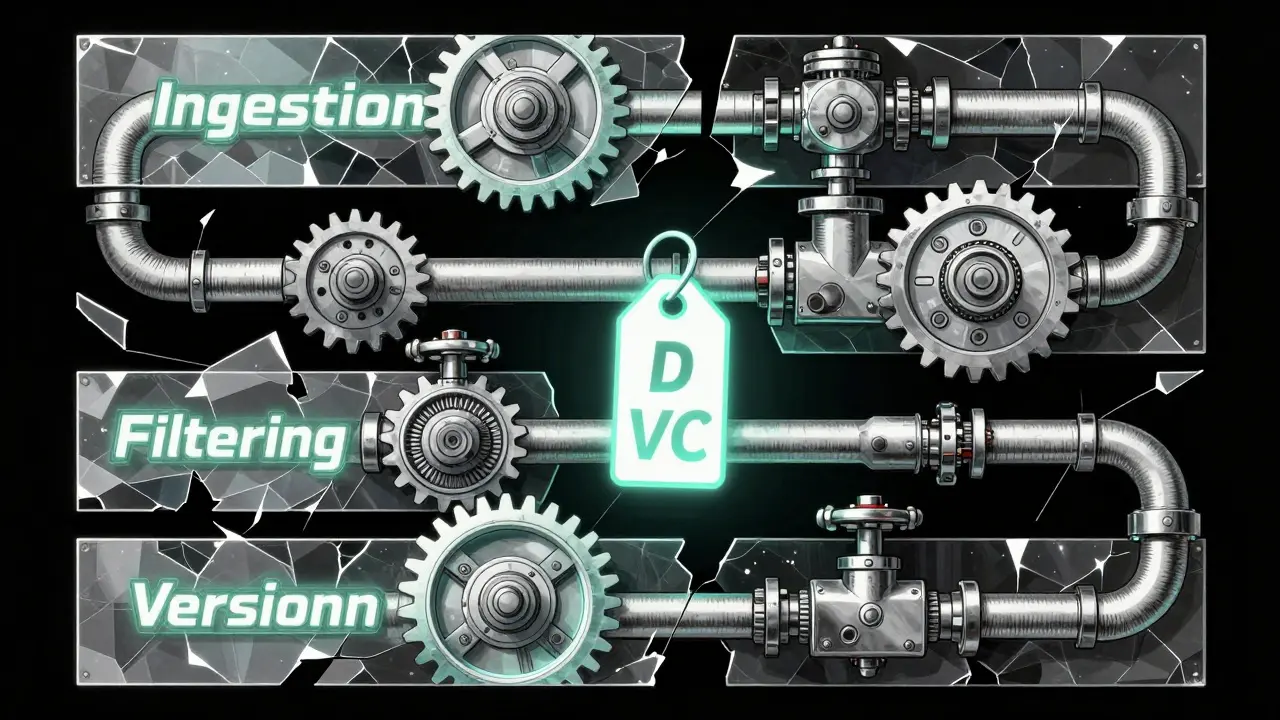

Versioning matters here too. Tools like Data Version Control (DVC) track every change: “Version 3.2: 58% web, 22% code, 14% books, 6% scientific.” Without this, you can’t reproduce results. A user on Hacker News saw a 40% accuracy drop in medical QA after accidentally including non-medical content. They had no way to know what changed.
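To show what a versioned mixture record might contain, here is a standard-library sketch that fingerprints each source and diffs two versions. It is not DVC and does not use the DVC API; the manifest layout and function names are assumptions made for illustration.

```python
import hashlib


def fingerprint(records):
    """Order-independent SHA-256 over a list of record strings."""
    digest = hashlib.sha256()
    for record in sorted(records):
        digest.update(record.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()


def build_manifest(version, sources):
    """Describe a dataset version: per-source record count, share, and hash."""
    total = sum(len(records) for records in sources.values())
    return {
        "version": version,
        "total_records": total,
        "sources": {
            name: {
                "records": len(records),
                "share": round(len(records) / (total or 1), 4),
                "sha256": fingerprint(records),
            }
            for name, records in sorted(sources.items())
        },
    }


def changed_sources(old, new):
    """Names of sources whose content hash differs between two manifests."""
    names = sorted(set(old["sources"]) | set(new["sources"]))
    return [
        name for name in names
        if old["sources"].get(name, {}).get("sha256")
        != new["sources"].get(name, {}).get("sha256")
    ]
```

Storing a manifest like this next to each training run gives you something concrete to diff when results change between versions.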

Infrastructure: What Powers the Pipeline

A pipeline isn’t a single tool. It’s a chain of components:

- Ingestion: Pull data from S3, Azure Data Lake, or Common Crawl.

- Processing: Run deduplication, filtering, and labeling in parallel using Apache Airflow or Kubeflow.

- Storage: Store cleaned data with version tags. Use object storage for scale.

- Monitoring: Track data drift, model performance, and pipeline latency.

Prefetching reduces I/O wait times by 35-40%. That means when your model starts training, it doesn’t sit idle waiting for data. It’s already loaded.
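Prefetching can be sketched as a background thread that fills a bounded queue while the consumer works on the previous item. This is a minimal illustration with assumed names and buffer size; in this simple version, an error raised by the underlying loader just ends the stream early.

```python
import queue
import threading

_SENTINEL = object()  # marks the end of the stream


def prefetch(iterable, buffer_size=4):
    """Yield items from iterable while a background thread loads ahead.

    Loading starts on the first request for an item and stays at most
    buffer_size items ahead of the consumer.
    """
    buffer = queue.Queue(maxsize=buffer_size)

    def worker():
        try:
            for item in iterable:
                buffer.put(item)  # blocks while the buffer is full
        finally:
            buffer.put(_SENTINEL)

    threading.Thread(target=worker, daemon=True).start()
    while True:
        item = buffer.get()
        if item is _SENTINEL:
            return
        yield item
```

A training loop would wrap its batch loader, for example `for batch in prefetch(load_batches()):`, where `load_batches` is whatever generator reads from storage.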

Costs vary wildly. AWS SageMaker Pipelines charge $0.15 per GB processed. Open-source Kubeflow? Around $0.04 per GB - but you need 3-4 weeks of engineering time to build it. For most companies, the choice isn’t about tech - it’s about team size and budget.
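The trade-off can be turned into a quick break-even calculation: how many gigabytes must you process before the cheaper per-GB rate pays back the build time? The per-GB prices below are the figures quoted above; the weekly engineering cost is a purely hypothetical input you would replace with your own.

```python
def break_even_gb(managed_per_gb, self_hosted_per_gb, build_weeks, weekly_engineering_cost):
    """Volume in GB at which self-hosting becomes cheaper than the managed service."""
    saving_per_gb = managed_per_gb - self_hosted_per_gb
    if saving_per_gb <= 0:
        return float("inf")  # self-hosting never pays back
    return build_weeks * weekly_engineering_cost / saving_per_gb


# Example: 4 weeks of build time at a hypothetical $5,000 per week.
volume = break_even_gb(0.15, 0.04, build_weeks=4, weekly_engineering_cost=5000)
```

Under those assumptions the break-even point is about 182,000 GB, which is why small teams with modest data volumes often stay on the managed option.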

Real-World Pitfalls and Fixes

Here’s what goes wrong - and how to fix it:

- “My model keeps repeating the same phrases.” → You didn’t do paragraph-level deduplication. Add it.

- “It gives weird answers about medicine.” → Your mixture included too much general web text. Rebalance with domain-specific sources.

- “I can’t reproduce last week’s results.” → You didn’t version your data. Start using DVC today.

- “Pipeline takes 3 weeks to run.” → You’re processing everything in sequence. Break it into modular sub-pipelines so you can update filtering without rerunning deduplication.
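The last fix, modular sub-pipelines, can be sketched with a cache keyed on each stage's name, configuration, and upstream input. Changing the filter configuration then reruns only the filter stage. The stage functions and the in-memory cache here are simplified assumptions; a real pipeline would persist stage outputs to storage.

```python
import hashlib
import json


def run_stage(cache, log, name, func, data, config, upstream_key):
    """Run a stage only if this (name, config, upstream) combination is new."""
    key = hashlib.sha256(
        json.dumps([name, config, upstream_key], sort_keys=True).encode("utf-8")
    ).hexdigest()
    if key not in cache:
        cache[key] = func(data, **config)
        log.append(name)  # record that the stage actually executed
    return cache[key], key


def dedup(docs):
    """Exact deduplication that preserves first-seen order."""
    return list(dict.fromkeys(docs))


def length_filter(docs, min_words):
    return [doc for doc in docs if len(doc.split()) >= min_words]


def pipeline(cache, log, docs, min_words):
    raw_key = hashlib.sha256("\n".join(docs).encode("utf-8")).hexdigest()
    deduped, dedup_key = run_stage(cache, log, "dedup", dedup, docs, {}, raw_key)
    filtered, _ = run_stage(
        cache, log, "filter", length_filter, deduped, {"min_words": min_words}, dedup_key
    )
    return filtered
```

Running the pipeline twice on the same documents with a different `min_words` executes `dedup` once and `filter` twice, which is the behavior you want when only the filter changed.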

One team spent two weeks debugging MinHash parameters. Another accidentally trained on 15% non-medical data - and didn’t know until their QA accuracy crashed. These aren’t edge cases. They’re daily risks.

The Future: Autonomous Pipelines

The next leap isn’t bigger models - it’s smarter pipelines.

Google’s “self-healing data pipelines,” announced in April 2025, detect and fix data quality issues without human input. If a new source starts flooding in low-quality text, the pipeline automatically adjusts filters or pulls less from that source.

Gartner predicts that by 2027, 80% of enterprise pipelines will use continuous mixture optimization - constantly adjusting data ratios based on real-time model performance. That means your model doesn’t just learn from data. It learns how to improve its own data.

And it’s not theoretical. CDInsights reports GenAI-powered pipelines now reduce data prep time by 44% by auto-generating documentation and spotting anomalies. The future isn’t just automated - it’s adaptive.

What You Should Do Now

If you’re building or using a generative AI model:

- Start with deduplication. Use MinHash with LSH. Don’t skip this.

- Apply multi-stage filtering. Don’t rely on one filter. Stack them.

- Document your mixture. Use DVC. Track every change.

- Monitor for drift. If your model’s performance drops, check your data first.

- Build modular pipelines. Separate ingestion, deduplication, filtering, and versioning. That way, you can update one without breaking the whole thing.
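For the drift check in particular, one lightweight option is to compare the share of each source between a baseline dataset and the current one. The sketch below uses total variation distance with an assumed 5% alert threshold; both the metric choice and the threshold are illustrative, not a standard.

```python
from collections import Counter


def source_shares(labels):
    """Fraction of records per source label."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: count / total for label, count in counts.items()}


def total_variation(p, q):
    """Half the sum of absolute differences between two share distributions."""
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(label, 0.0) - q.get(label, 0.0)) for label in labels)


def drifted(baseline_labels, current_labels, threshold=0.05):
    """True if the source mix moved more than the threshold since baseline."""
    distance = total_variation(
        source_shares(baseline_labels), source_shares(current_labels)
    )
    return distance > threshold
```

A dataset that was 100% medical and is now 85% medical and 15% general web has a distance of 0.15, well over the threshold, so a check like this would have flagged the accidental inclusion described earlier.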

Training data pipelines aren’t glamorous. No one tweets about them. But they’re the foundation. Get them right, and your model will surprise you. Get them wrong - and no amount of compute will save you.

What’s the biggest mistake in generative AI data pipelines?

The biggest mistake is assuming data quality doesn’t matter. Teams spend millions on GPUs, then train on raw, unfiltered, duplicated web data. The result? A model that’s slow, repetitive, or toxic. The fix is simple: invest in the pipeline before you train. Deduplication, filtering, and mixture design aren’t optional - they’re the first step.

Can I skip deduplication if my dataset is small?

No. Even small datasets have duplicates. A 10GB dataset pulled from the web often contains 15-25% duplicate content. Without deduplication, your model wastes training capacity on repetition. The cost isn’t just storage - it’s performance. You’ll train longer and get worse results.

How do I know if my mixture design is balanced?

Test your model on domain-specific tasks. If it struggles with code, you likely need more code data. If it hallucinates medical facts, check if you included too much general web text. Use evaluation benchmarks - not guesses. Benchmarks like MMLU or HELM can reveal hidden imbalances. Also, track performance over time. A drop in accuracy often means your data mix shifted.
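A small sketch of the per-domain check: group evaluation results by domain and list the domains that fall below a minimum accuracy. The result format (pairs of domain and correctness) and the function names are assumptions; benchmark suites report results in their own formats.

```python
from collections import defaultdict


def per_domain_accuracy(results):
    """results: iterable of (domain, is_correct) pairs -> {domain: accuracy}."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for domain, is_correct in results:
        total[domain] += 1
        correct[domain] += int(bool(is_correct))
    return {domain: correct[domain] / total[domain] for domain in total}


def weakest_domains(results, min_accuracy):
    """Domains whose accuracy is below the minimum, sorted worst first."""
    accuracy = per_domain_accuracy(results)
    weak = [domain for domain, value in accuracy.items() if value < min_accuracy]
    return sorted(weak, key=lambda domain: accuracy[domain])
```

The domains this returns are the first candidates for a larger share in the next mixture version.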

Is open-source better than cloud pipelines?

It depends. Open-source tools like Kubeflow and DataComp give you full control and transparency - great for research and compliance. But they require serious engineering effort. Cloud pipelines like AWS SageMaker or Azure ML are faster to deploy and handle scaling automatically. For startups or teams without data engineers, cloud is easier. For enterprises needing audit trails, open-source is safer.

What’s the role of data versioning?

Data versioning is your safety net. Without it, you can’t answer: “Why did model v3.2 perform worse than v3.1?” You might think it’s a code change - but it’s a data change. Tools like DVC let you track every dataset version, who changed it, and when. This is critical for compliance (especially under the EU AI Act) and for debugging. Treat data like code - version it.

Comments

Liam Hesmondhalgh

Who the fuck cares about deduplication? I’ve seen models trained on garbage and they still outperform corporate shit. Just throw everything in and let the magic happen. I’ve got a 7B model running on a Raspberry Pi that generates poetry about Irish pubs and it’s flawless. Stop overengineering.

Also, why are we using American tools? Use open-source. We don’t need AWS or Azure. We’ve got Linux. We’ve got grit. We’ve got Guinness.

March 23, 2026 AT 19:39

Patrick Tiernan

Look I just skimmed this and honestly? The whole thing feels like a LinkedIn post written by someone who got fired from Google for using too many buzzwords.

"Mixture design"? "Intelligent balancing"? Bro. You’re just trying to make data cleaning sound like rocket science. It’s not. It’s deleting duplicates and blocking shitposts. Done. Move on.

Also why does every AI article now need 17 subheadings? I read one paragraph and my brain shut down.

March 24, 2026 AT 12:45

Patrick Bass

I appreciate the technical depth, but I think the emphasis on "versioning" is understated. I’ve been working with data pipelines for 5 years, and the single biggest issue we faced wasn’t deduplication or filtering - it was losing track of which version of the dataset was used for which experiment.

Simple fix: use DVC. It’s not glamorous, but it saves weeks of confusion. I’ve seen teams rebuild entire models because they couldn’t reproduce a result - all because someone changed a file name.

March 25, 2026 AT 21:39

Mongezi Mkhwanazi

Let me tell you something about data pipelines: they’re not about "quality." They’re about control. The moment you let a pipeline auto-filter, you’re handing over your intellectual sovereignty to a black box that doesn’t know the difference between "the cat sat on the mat" and "the cat sat on the mat" - but somehow thinks one is "toxic."

I’ve seen filters remove entire dialects of English because they "looked like AI-generated text." That’s not cleaning. That’s cultural erasure. And now, every model speaks in the same sanitized, corporate, Silicon Valley monotone. You’re not training intelligence. You’re training conformity.

And don’t get me started on "self-healing pipelines." That’s not innovation - that’s surrender. If you can’t manually audit your data, you shouldn’t be deploying a model. Period. The EU AI Act is right to demand human oversight. Because machines don’t understand nuance. They only understand rules. And rules are made by people who’ve never met a real human.

Also, 80% of enterprise pipelines will use "continuous mixture optimization" by 2027? That’s not a prediction. That’s a prophecy written by a venture capitalist who thinks data is a religion.

March 26, 2026 AT 12:54

Mark Nitka

Great breakdown. I’d just add that filtering shouldn’t be about removing "bad" content - it should be about preserving diversity. The most creative models I’ve worked with were trained on messy, unfiltered data - including typos, slang, and inconsistent grammar. That’s where personality comes from.

Over-filtering creates sterile, robotic outputs. You want a model that can write a poem and debug Python - not one that only speaks in corporate press releases.

Also, open-source isn’t "better" - it’s just more transparent. If you’re building something that impacts people, you owe them visibility into the data. Cloud tools hide too much behind APIs.

March 28, 2026 AT 01:02

Kelley Nelson

While the general sentiment of this article is commendable, I must express my reservations regarding the casual dismissal of metadata filtering as a trivial component.

One cannot underestimate the ontological implications of omitting authoritative provenance from training corpora. The absence of verifiable authorship, publication date, and institutional affiliation introduces a latent epistemological instability that fundamentally undermines the epistemic integrity of downstream generative outputs.

Furthermore, the suggestion to "use DVC" as a panacea is, in my view, both technologically reductive and philosophically naïve. Data versioning, when divorced from semantic annotation and ontological tagging, is merely a form of archival fetishism.

March 29, 2026 AT 11:22

Aryan Gupta

You think this is about data? Nah. This is about who controls the narrative. Every "deduplication" algorithm? It’s coded by people who don’t want you to see the truth.

Did you know that 90% of "toxic content" filters are trained on data labeled by people who hate certain dialects? They call it "hate speech" - but it’s just Black English, AAVE, or Indian English. They scrub it out because it doesn’t sound "professional."

And "minhash"? That’s just a fancy way of saying "delete anything that doesn’t sound like a white guy from Boston."

And don’t even get me started on "mixture design." You think Anthropic chose 60-20-15-5 because it’s optimal? No. They chose it because their investors don’t want to hear about poverty, racism, or colonial history. They want clean, polite, neutral AI.

This isn’t engineering. It’s censorship with a PhD.

March 30, 2026 AT 10:28

Fredda Freyer

One thing everyone misses: the real value of a data pipeline isn’t in the filters - it’s in the feedback loop.

When your model starts hallucinating about quantum physics, you don’t just tweak the mixture. You go back to the data. You find the source. You ask: why was this included? Who added it? Was it a bot? A mislabeled file? A scrape from a forum that got archived wrong?

I once traced a model’s bizarre medical errors back to a single .txt file from a 2008 blog about "miracle cures" that got auto-included because it had the word "cancer" in it.

That’s why versioning isn’t just useful - it’s ethical. You’re not just building a model. You’re building a memory. And memories need to be curated, not automated.

Also - don’t underestimate the power of human review. No algorithm can understand context like a person who’s read 10,000 medical papers and still remembers what it felt like to lose someone to a misdiagnosis.

March 30, 2026 AT 18:59

Gareth Hobbs

WTF is this? "Mixture design"? Sounds like some woke corporate buzzword salad. We used to just train on whatever we scraped. Now we need spreadsheets, DVC, and a PhD in data engineering just to get a model to say "hello"?

And "self-healing pipelines"? Next they’ll be telling us the data will start writing its own documentation. Give me a break. The UK’s got 100 years of history in AI - and we didn’t need any of this nonsense. We just used grep and a bit of elbow grease.

Also, why is every example from the US? Did you forget we exist? We’ve got data too. We’ve got pubs. We’ve got Brexit. We’ve got people who still say "I’ve got a headache" instead of "I’m experiencing a cephalic discomfort."

Stop overcomplicating. Just train. Test. Fix. Repeat. That’s how you build things. Not with slides.

April 1, 2026 AT 01:11

Zelda Breach

Every single thing in this post is wrong. You think deduplication matters? It’s the first step in creating AI monoculture. You filter out "toxic" content? You’re erasing dissent. You balance "mixtures"? You’re engineering consensus. This isn’t training intelligence - it’s training obedience.

Models that repeat the same phrases? Good. That means they learned something consistent. Not everything human is pretty. Not every truth is polite. You want creativity? Let the model learn from chaos. Let it learn from the 4chan threads, the conspiracy forums, the hate blogs - because that’s where the real human mind lives.

And DVC? Please. You think versioning data makes you safe? You’re just making it easier for the NSA to audit your model. This isn’t progress. It’s surveillance with a GitHub repo.

April 2, 2026 AT 18:05