Benchmarking Transformer Variants for Production LLM Workloads: A 2026 Performance Guide

- Mark Chomiczewski

- 26 March 2026

- 5 Comments

The landscape of artificial intelligence has shifted dramatically since the early days of attention mechanisms. Today, in March 2026, you aren't just choosing between two chatbots; you are architecting entire workflows around massive neural networks. The core challenge is no longer building; it's selecting. With dozens of transformer variants vying for your compute budget, how do you know which one actually handles your specific workload without burning through your monthly AWS invoice?

Benchmarking isn't just about hitting the highest score on a leaderboard. It is about understanding the fit between the model's internal mechanics and your actual data streams. You might love the theoretical elegance of a new architecture, but does it handle customer support logs, code generation, or complex financial queries as well as the established giants? Let's strip away the marketing fluff and look at the raw performance data that matters for engineering teams.

The Core Metrics That Actually Matter

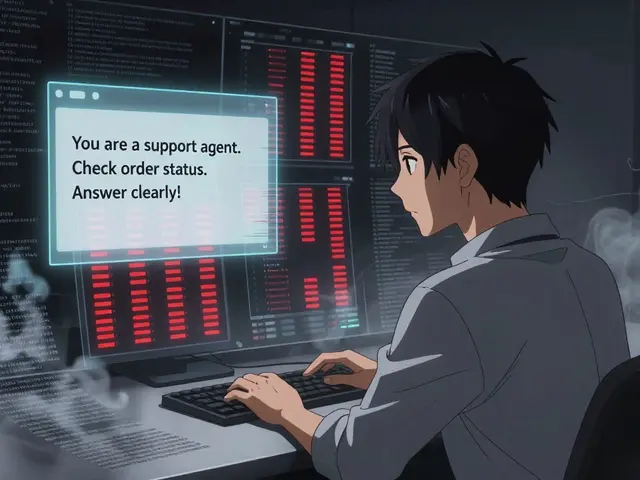

When you evaluate a Transformer architecture (a deep learning model design based on self-attention mechanisms that enables parallel processing of sequence data), standard metrics often fail to capture real friction points. Everyone looks at Perplexity (PPL), but that rarely tells you how a model behaves when your dataset shifts slightly from training conditions. In 2026, we prioritize four hard constraints: latency under load, context window utility, reasoning capability, and operational cost per token.
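Two of these constraints, latency under load and cost per token, reduce to simple arithmetic once you have measurements. The sketch below is illustrative only: the latency samples, the dollar figure, and the helper names `percentile` and `cost_per_1k_tokens` are assumptions made up for the example, not figures from any benchmark.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def cost_per_1k_tokens(total_cost_usd, total_tokens):
    """Blended cost per 1,000 tokens over a billing period."""
    return 1000 * total_cost_usd / total_tokens

# Made-up request latencies in milliseconds, including two slow outliers.
latencies_ms = [120, 135, 128, 410, 131, 126, 980, 133, 129, 140]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
mean_ms = sum(latencies_ms) / len(latencies_ms)

print(f"p50={p50} ms, p95={p95} ms, mean={mean_ms:.1f} ms")
print(f"cost per 1k tokens: ${cost_per_1k_tokens(12.0, 4_000_000):.4f}")
```

Reporting the median alongside a tail percentile matters because a handful of slow requests can dominate the user experience while barely moving the median.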

Consider the context window first. Early models struggled with anything beyond 512 tokens. Modern solutions have shattered that ceiling, but long context doesn't always mean better memory. Some architectures suffer from "lost in the middle" phenomena where relevant data buried deep in a 128k-token document gets ignored. We need to verify if the model truly retains information across the span, not just if it fits in VRAM.
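A common way to check retention across the span is a "needle in a haystack" probe: plant one fact at different depths in filler text and see whether the model can retrieve it. The following is a minimal sketch under stated assumptions. `ask_model` is a hypothetical callable you would replace with your own client, and `edges_only_model` is a toy stand-in that only reads the start and end of the prompt, used here purely to simulate the "lost in the middle" failure.

```python
def build_probe(filler_sentences, needle, depth):
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences)

def retrieval_by_depth(ask_model, filler_sentences, needle, question, expected, depths):
    """Return {depth: True/False} for whether the expected answer came back."""
    results = {}
    for depth in depths:
        prompt = build_probe(filler_sentences, needle, depth) + "\n\nQuestion: " + question
        answer = ask_model(prompt)
        results[depth] = expected.lower() in answer.lower()
    return results

def edges_only_model(prompt, window=300):
    """Toy stand-in (not a real model): sees only the first and last `window` characters."""
    visible = prompt[:window] + prompt[-window:]
    return "7351" if "7351" in visible else "unknown"

filler = ["The sky was grey that morning."] * 100
results = retrieval_by_depth(
    edges_only_model,
    filler,
    needle="The vault code is 7351.",
    question="What is the vault code?",
    expected="7351",
    depths=[0.0, 0.5, 1.0],
)
print(results)
```

With a real model you would sweep more depths and several document lengths, then look for a dip in the middle of the range.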

Evaluating the Proprietary Heavyweights

Closed-source models continue to set the bar for maximum reasoning capability, primarily due to the sheer scale of their training infrastructure. If your priority is raw accuracy for critical tasks, such as drafting legal documents or interpreting complex medical notes, these models remain the gold standard despite the costs.

OpenAI's GPT-4 Turbo, a commercial large language model variant optimized for higher throughput and extended context windows compared to previous iterations, remains a dominant force. Released previously as a bridge technology, it still holds up remarkably well. It supports a 128,000-token context window, which roughly equals 300 pages of text. This allows you to feed entire meeting transcripts or full codebases into the context. Real-world adoption stories show significant gains; one documented migration of a Zendesk customer support bot saw Customer Satisfaction (CSAT) scores jump by 12 percentage points after switching from GPT-3.5 to GPT-4. However, this came at a steep price: a four-fold increase in operational costs. That is the classic trade-off: superior intelligence requires a premium tax.

Claude 4 and its successor variants have carved out a niche for themselves through safety alignment and nuanced reading comprehension. They often excel at summarizing dense material where hallucination rates matter immensely. Meanwhile, Google's Gemini 2.5 Flash, a multimodal AI model optimized for speed and cost-efficiency while maintaining high-quality generative capabilities, has emerged as the speed king. It is designed for applications that cannot afford the milliseconds of delay found in heavier models. For cost-conscious projects involving rapid prototyping or real-time conversational agents, it provides exceptional value. As of early 2026, benchmarks place Gemini 3 Pro and GPT 5.2 at the absolute peak for general-purpose reasoning suites, pushing the ceiling for what automated systems can achieve in logical deduction.

The Open Source Advantage

Transparency is becoming a requirement rather than a feature. For many enterprises, handing off proprietary data to a closed black box is a non-starter. This drives demand toward open weights distributed via platforms like Hugging Face, a central platform hosting thousands of pre-trained machine learning models and datasets for community collaboration. The ecosystem here is vast, offering different architectures tuned for specific hardware constraints.

The BERT family continues to dominate specific niches, particularly for text classification and sentiment analysis. Google's original bidirectional encoder architecture revolutionized how machines read language, but modern derivatives have optimized it significantly. RoBERTa, a robustly optimized version of the BERT model featuring improved training stability and hyperparameter configurations, offers a sweet spot between accuracy and cost, specifically in its Large variant with 355 million parameters. It achieves a performance score of 7.8 for accuracy and 8.0 for speed on standard scales, all while being significantly cheaper to run than the massive autoregressive transformers. For General Language Understanding Evaluation (GLUE) tasks or SQuAD question answering, this model remains a pragmatic workhorse.

We also see innovations in efficiency. DistilBERT, a smaller neural network derived from knowledge distillation techniques to reduce computational requirements, cuts the model size by 40% while keeping about 97% of the original accuracy. This is crucial when deploying to edge devices or mobile phones where power budgets are tight. Similarly, ALBERT (A Lite BERT) combines factorized embeddings with cross-layer parameter sharing, allowing for deeper networks without exploding the parameter count. These architectural choices let you deploy powerful NLP without needing a cluster of A100 GPUs.
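To see why factorized embeddings save parameters, compare a single vocabulary-by-hidden embedding matrix with a small vocabulary-by-E matrix followed by an E-by-hidden projection. The sizes below (30,000-token vocabulary, hidden size 768, embedding size 128) are illustrative assumptions chosen for the arithmetic, and the function names are hypothetical.

```python
def full_embedding_params(vocab_size, hidden_size):
    """Parameters in a single V x H embedding matrix."""
    return vocab_size * hidden_size

def factorized_embedding_params(vocab_size, embedding_size, hidden_size):
    """Parameters in a V x E embedding followed by an E x H projection."""
    return vocab_size * embedding_size + embedding_size * hidden_size

# Illustrative sizes, assumed for this example.
V, H, E = 30_000, 768, 128

full = full_embedding_params(V, H)
factorized = factorized_embedding_params(V, E, H)

print(f"full:       {full:,} parameters")
print(f"factorized: {factorized:,} parameters")
print(f"reduction:  {full / factorized:.1f}x")
```

The saving only applies to the embedding table; it says nothing about the parameters in the attention and feed-forward layers.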

Specialized Architectures for Extended Data

Not every problem fits the standard decoder-only mold. When dealing with sequences that stretch far beyond typical documents, we look at architectures designed for recurrence.

Transformer XL, an architecture modification implementing segment-level recurrence to extend context windows beyond fixed limits, solves the fragmentation issue. By breaking the computation into segments and reusing states from previous chunks, it can handle context lengths exceeding 3,000 tokens, six times the baseline. This makes it ideal for analyzing long-form books, music generation, or genomic DNA sequencing where temporal dependencies span thousands of steps. However, there is a catch: optimization requires custom CUDA kernels. Unless your team has dedicated researchers tweaking low-level GPU code, maintenance overhead can become a distraction.
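The bookkeeping behind segment-level recurrence can be sketched without any neural network at all. The generator below is a simplified illustration, not the Transformer XL implementation: it caches raw tokens as a stand-in for the hidden states a real model would cache, and the names `segment_with_memory`, `segment_len`, and `mem_len` are assumptions for this example.

```python
def segment_with_memory(tokens, segment_len, mem_len):
    """Yield (memory, segment) pairs.

    Each segment is processed together with a cache of the most recent
    `mem_len` items from earlier segments. In a real model the cache holds
    hidden states rather than tokens.
    """
    memory = []
    for start in range(0, len(tokens), segment_len):
        segment = tokens[start:start + segment_len]
        yield memory, segment
        # Keep only the newest mem_len items for the next segment.
        memory = (memory + segment)[-mem_len:] if mem_len > 0 else []

pairs = list(segment_with_memory(list(range(10)), segment_len=4, mem_len=4))
for memory, segment in pairs:
    print(f"memory={memory} segment={segment}")
```

Without the cache, each segment would start from nothing, which is the fragmentation problem the text describes.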

We also have T5 (Text-to-Text Transfer Transformer). It simplifies everything by framing all tasks as text-to-text problems. While older, variants with 11 billion parameters still hold their ground for multilingual translation tasks. Its ability to handle diverse input formats without extensive fine-tuning makes it a stable choice for legacy integration.

Data Limitations and Distribution Shifts

A critical insight emerging in 2026 involves the behavior of these massive models in low-data regimes. Transformers are incredibly sensitive to how your data distribution changes. Statistical studies comparing them against traditional methods like OLS regression or Gradient Boosting reveal interesting fragility.

| Scenario Type | Linear Effect Baseline (OLS) | Neural Network (MLP) | Modern Transformer Variant |
|---|---|---|---|
| Optimal Linear Correlation | Score: 0.995 | Score: 0.435 | Score: 0.693 |
| Cross-Sectional Shift (Stress Test) | Score: Unstable | Score: 0.089 | Score: 0.118 |

This data highlights a reality often glossed over. In simple, linear scenarios, a traditional method such as OLS beats complex transformers easily (0.995 versus 0.693). The real-world test happens when your input data shifts, as in the "Cross-Sectional Shift" scenario. Here, both neural approaches dropped sharply: the MLP (Multi-Layer Perceptron) fell from 0.435 to 0.089, and the transformer fell from 0.693 to 0.118. The transformer still scored slightly higher than the MLP under shift, but it lost most of its in-distribution performance, and the OLS baseline became unstable. This volatility is a major risk for mission-critical deployments where data patterns change daily, such as financial markets or viral social media trends.
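One way to compare the two rows of the table is the relative drop for each model, using only the scores listed there. The helper name `relative_drop` is an assumption for this sketch; the OLS column is left out because its shifted score is reported as unstable rather than as a number.

```python
def relative_drop(baseline_score, shifted_score):
    """Fraction of the baseline score lost under distribution shift."""
    return (baseline_score - shifted_score) / baseline_score

# Scores copied from the table above.
scores = {
    "MLP": (0.435, 0.089),
    "Transformer": (0.693, 0.118),
}

drops = {name: relative_drop(base, shifted) for name, (base, shifted) in scores.items()}
for name, drop in drops.items():
    print(f"{name}: lost {drop:.0%} of its baseline score")
```

On these numbers both models lose roughly four fifths of their baseline score, so neither can be called stable under this stress test.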

The Rise of Hardware-Native Models

In 2026, hardware companies are designing models tailored to their silicon. Nemotron, a series of open-weights models developed by Nvidia for efficient enterprise deployment, exemplifies this trend. Built upon foundations similar to Meta's Llama architecture, Nemotron comes in massive scales, with a 340-billion-parameter configuration that rivals proprietary champions. But more importantly, it includes mini-models engineered specifically for single-GPU local deployment. This bridges the gap for small businesses that cannot afford cloud API costs.

Similarly, the Falcon series, an open-weight LLM family developed by the Technology Innovation Institute and available in various parameter counts, has matured. Falcon 2 and Falcon 3 provide multimodal capabilities, handling both text and vision inputs effectively. With parameter sizes ranging from 1 billion to 180 billion, users can pick a model that fits their exact memory budget.
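A rough first check on whether a model fits a memory budget is parameter count times bytes per parameter. The sketch below covers weights only; it deliberately ignores the KV cache, activations, and framework overhead, so treat the results as a lower bound. The function name `weight_memory_gb` and the choice of 16-bit and 4-bit precisions are assumptions for illustration.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9

# The two ends of the parameter range mentioned above.
for params in (1_000_000_000, 180_000_000_000):
    for bits in (16, 4):
        gb = weight_memory_gb(params, bits)
        print(f"{params / 1e9:.0f}B params at {bits}-bit: about {gb:.1f} GB of weights")
```

At 16-bit precision the small end of the range fits comfortably on a single consumer GPU, while the large end needs hundreds of gigabytes before any runtime overhead is counted.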

Finding Your Fit: The Decision Matrix

So, which architecture should you select for your next project? There is no single winner, but there are clear guidelines based on your constraints.

- If you need top-tier reasoning for high-stakes decisions and budget is secondary, choose GPT-4 or Claude 4. They consistently handle nuance better than any alternative.

- If your application demands extreme speed and low latency, like real-time transcription, Gemini 2.5 Flash offers the best price-performance ratio.

- If you require full control, on-premise security, and weight interpretability, look at BERT-family models like RoBERTa or DistilBERT.

- If you are processing massive text volumes or specialized scientific data like DNA, consider Transformer XL for its superior handling of long-range dependencies.

- If you need localized deployment with lower compute overhead, explore Nemotron or Falcon variants hosted locally.
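The guidelines above can be written down as a small lookup table, which is sometimes handy for documenting a team's selection policy. This is a sketch of the article's own recommendations and nothing more; the priority keys and the function name `suggest_models` are hypothetical.

```python
# Mapping of priorities to the models suggested in the list above.
RECOMMENDATIONS = {
    "top_reasoning": ["GPT-4", "Claude 4"],
    "low_latency": ["Gemini 2.5 Flash"],
    "full_control": ["RoBERTa", "DistilBERT"],
    "long_sequences": ["Transformer XL"],
    "local_deployment": ["Nemotron", "Falcon"],
}

def suggest_models(priorities):
    """Return candidate models for the given priorities, in order, without duplicates."""
    suggestions = []
    for priority in priorities:
        if priority not in RECOMMENDATIONS:
            raise KeyError(f"unknown priority: {priority}")
        for model in RECOMMENDATIONS[priority]:
            if model not in suggestions:
                suggestions.append(model)
    return suggestions

print(suggest_models(["full_control", "local_deployment"]))
```

A shortlist like this is a starting point for your own benchmarks, not a substitute for them.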

The future points even further beyond standard attention mechanisms. New concepts like State-Space Models and Mamba are gaining traction for solving the quadratic complexity issue inherent in attention. While 2026 is still largely dominated by transformer-based approaches, staying aware of these shifting paradigms ensures your stack remains competitive for the next decade.
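The quadratic issue is easy to quantify: self-attention compares every token with every other token, so the score matrix has n × n entries per head per layer. The snippet below counts those entries for the two context lengths mentioned earlier in this guide (512 and 128,000 tokens). It counts matrix entries only and is not a model of real runtime or memory, which depend on the implementation.

```python
def attention_score_entries(seq_len):
    """Entries in one attention score matrix (per head, per layer)."""
    return seq_len * seq_len

short, long = 512, 128_000

length_ratio = long // short
entries_ratio = attention_score_entries(long) // attention_score_entries(short)

print(f"{short} tokens: {attention_score_entries(short):,} entries")
print(f"{long} tokens: {attention_score_entries(long):,} entries")
print(f"context grew {length_ratio}x, score matrix grew {entries_ratio:,}x")
```

An approach whose cost grows linearly with sequence length would see only the 250x growth of the context itself, which is the appeal of the alternatives named above.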

Why would I choose RoBERTa over DistilBERT?

Choose RoBERTa if you need maximum accuracy for complex understanding tasks and have the resources to handle larger inference loads. DistilBERT is the better choice when you strictly need faster speeds and lower resource usage, and you can tolerate a slight dip in precision (around 3%).

What constitutes a realistic benchmark for LLMs?

Realistic benchmarks go beyond generic datasets. They should measure latency under concurrent load, accuracy during data distribution shifts (changes in input style), and actual cost-per-token in your deployment environment. Static accuracy tests often miss real-world failure modes.
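Measuring latency under concurrent load needs only a thread pool and a timer. The harness below is a minimal sketch: `fake_model_call` is a hypothetical stand-in that sleeps for 10 ms, and you would replace it with a call to your own endpoint. The request count and concurrency level are arbitrary example values.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def fake_model_call(prompt):
    """Hypothetical stand-in for a real inference request."""
    time.sleep(0.01)
    return prompt.upper()

def timed_call(call, prompt):
    """Return the wall-clock seconds one call takes."""
    start = time.perf_counter()
    call(prompt)
    return time.perf_counter() - start

def run_load_test(call, prompts, concurrency):
    """Send all prompts with up to `concurrency` requests in flight; return latencies."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(lambda p: timed_call(call, p), prompts))

latencies = run_load_test(fake_model_call, ["ping"] * 20, concurrency=5)
print(f"requests: {len(latencies)}, worst latency: {max(latencies) * 1000:.1f} ms")
```

Against a real server, rerun the test at several concurrency levels; the point where tail latency starts climbing is usually more informative than any single number.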

Are Transformer architectures vulnerable to data drift?

Yes. Studies show transformers can perform poorly under cross-sectional shifts where the data distribution differs from training conditions. Traditional statistical methods sometimes offer more stability in these specific volatile scenarios.

Is open-source always better for privacy?

Open weights allow you to host models internally, ensuring data never leaves your network. This provides significantly better privacy guarantees compared to sending data to third-party APIs, making them essential for regulated industries like healthcare or finance.

Can I run large models like GPT-4 locally?

No, GPT-4 is a closed-source model accessed only via API. However, open alternatives like Nemotron or Falcon offer comparable capabilities and can be deployed on local hardware setups depending on their parameter size.

Comments

Mark Brantner

Honestly nobody actually checks if the context window fits their data properly.

March 27, 2026 AT 03:59

Kate Tran

Privacy is key though.

Companies are waking up to this fast.

We don't want our data leaking to unknown servers.

It feels safer keeping things internal mostly.

March 27, 2026 AT 21:46

amber hopman

Totally agree on the privacy aspect.

Vendor lock-in feels like a trap right now.

Most devs want full control over the inference pipeline.

It makes sense to host locally for sensitive data.

The trade-off in setup time is worth the security gain.

March 28, 2026 AT 21:40

Kathy Yip

The philosophy behind shifting back to simpler models is profound.

Complexity often masks fragility in these systems.

We tend to trust the black box too much.

Real understanding requires visibility into the weights.

It's ironic that better data cleaning wins more than bigger models.

March 29, 2026 AT 22:07

Bridget Kutsche

The section on hardware-native models really resonated with my team.

We tried running standard GPT variants last quarter and the latency was unacceptable.

Cost per token became a massive issue during scaling tests.

Switching to smaller open weights changed everything for us.

You can get ninety seven percent accuracy with half the compute cost.

This is exactly why transparency matters so much today.

Black boxes are hard to debug when production fails unexpectedly.

Engineers prefer knowing what lies underneath the hood honestly.

We found that RoBERTa handles classification tasks without needing heavy GPUs.

It works surprisingly well for legacy system integrations too.

Many teams overlook the benefits of older architectures initially.

They focus on the newest shiny object instead of proven tools.

Sometimes stability beats raw performance benchmarks significantly.

I recommend starting small before committing to expensive cloud contracts.

Testing distribution shifts early saves a lot of headache later on.

Keep your infrastructure flexible enough to adapt quickly.

March 30, 2026 AT 19:18