Document Re-Ranking: Boosting RAG Accuracy for LLMs

- Mark Chomiczewski

- 9 April 2026

- 8 Comments

You've built a Retrieval-Augmented Generation (RAG) pipeline, but your LLM is still hallucinating or missing the mark. You're likely facing a common paradox: if you retrieve too few documents, you miss the answer; if you retrieve too many, you drown the model in noise. This is where document re-ranking comes in. It is a second-stage retrieval process that re-scores a limited set of candidate documents so that the most relevant ones are prioritized for the LLM. It essentially acts as a quality filter, turning a "rough guess" from a vector search into a short, carefully ordered list of passages.

The Gap Between Similarity and Relevance

Most RAG systems rely on Vector Search, which uses embeddings to find documents that are "topically similar" to a query. While this is incredibly fast, similarity isn't the same as relevance. A document can be about the right topic but answer the wrong question, or use similar keywords without actually providing the solution.

This creates a tension between retrieval recall and LLM recall. If you pull the top 5 documents, you might miss the actual answer (low retrieval recall). If you pull 50 documents to be safe, the Large Language Model (LLM) might get confused by the irrelevant fluff or hit its context window limit (low LLM recall). Re-ranking eases this tension by letting you retrieve a larger set (say, 20 to 50 documents) and then using a more intelligent model to pick the best 3 to 5.
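To make the retrieval side of that trade-off concrete, here is a minimal sketch of recall@k. The document ids and the position of the relevant document are made up for illustration; in practice you would compute this over a labeled set of queries.

```python
def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant_ids:
        return 0.0
    top_k = set(ranked_ids[:k])
    return len(top_k & set(relevant_ids)) / len(relevant_ids)


# Hypothetical first-stage ranking: the one relevant document sits at rank 12.
ranked = [f"doc{i}" for i in range(1, 51)]
relevant = {"doc12"}

for k in (5, 20, 50):
    print(k, recall_at_k(ranked, relevant, k))
# prints:
# 5 0.0
# 20 1.0
# 50 1.0
```

With k=5 the answer never reaches the LLM. With k=20 it is in the candidate set, and the re-ranker's job is to move it from rank 12 into the top few.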

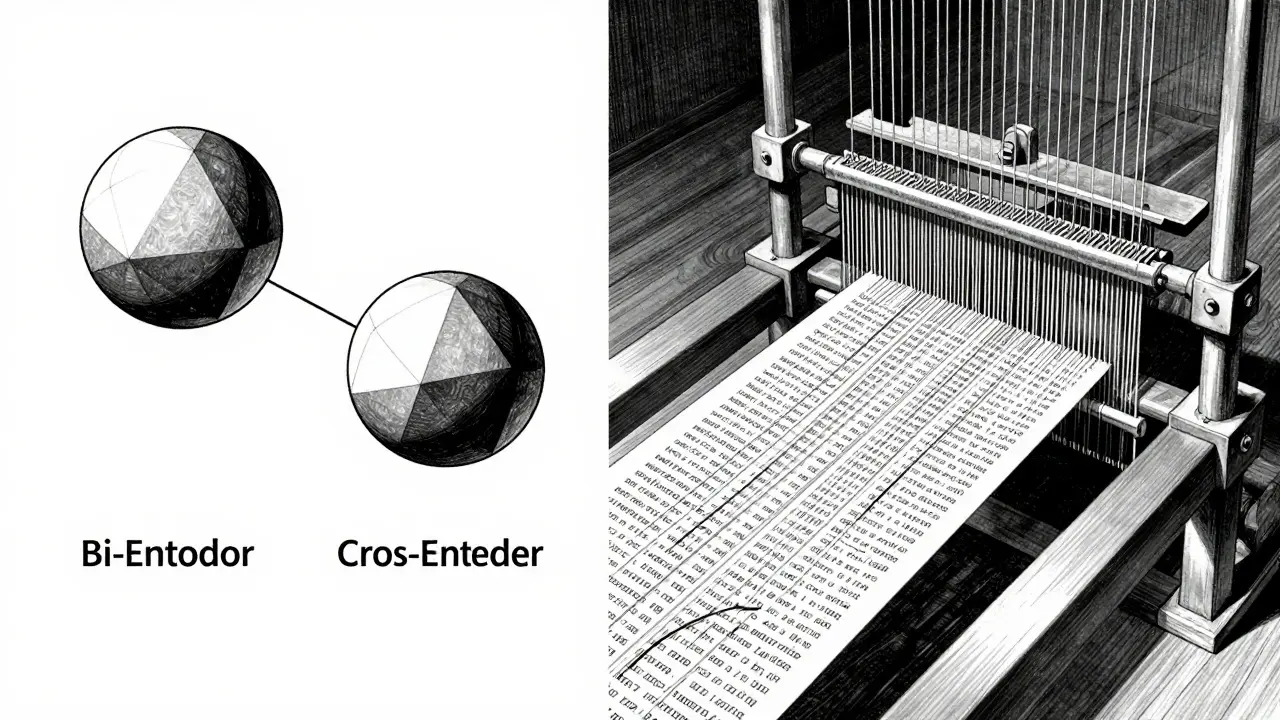

How Re-Ranking Works: Bi-Encoders vs. Cross-Encoders

To understand why re-ranking is so much more accurate, we have to look at the architecture. Initial retrieval usually uses Bi-Encoders. These models turn the query and the documents into separate vectors. The system then just calculates the distance between these vectors. It's like comparing the general "vibe" of two paragraphs.

A Cross-Encoder, which powers most re-rankers, does things differently. It doesn't use precomputed vectors. Instead, it feeds the query and the document into the transformer model simultaneously. This allows the model to perform deep semantic analysis, noticing how specific words in the query interact with specific phrases in the document. It's less like comparing vibes and more like a human reading both texts side-by-side to decide if the answer is actually there.

| Feature | Bi-Encoder (Vector Search) | Cross-Encoder (Re-Ranker) |
|---|---|---|
| Speed | Millisecond response (Ultra-fast) | Slower (Computationally expensive) |
| Accuracy | Moderate (Topic-based) | High (Context-aware) |
| Mechanism | Cosine similarity of embeddings | Full query-document pair inference |
| Scale | Can search millions of docs | Only works on a few dozen candidates |
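The difference in interface can be sketched with two toy scorers. Neither is a real neural model: `embed` is a bag-of-words stand-in for a bi-encoder, and `pair_score` is a hand-written stand-in for a cross-encoder that rewards query terms appearing in the same order in the document. Both names are hypothetical. The point is only that the first encodes each text on its own (so document vectors can be precomputed), while the second has to see the query and the document together.

```python
import math
from collections import Counter


def embed(text):
    """Toy 'bi-encoder': encodes one text on its own into a bag-of-words vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def pair_score(query, doc):
    """Toy 'cross-encoder': looks at query and document together and rewards
    adjacent query terms that appear in the same order inside the document."""
    q, d = query.lower().split(), doc.lower().split()
    doc_bigrams = set(zip(d, d[1:]))
    hits = sum(1 for pair in zip(q, q[1:]) if pair in doc_bigrams)
    return hits / max(len(q) - 1, 1)


docs = ["dog bites man", "man bites dog"]
doc_vectors = [embed(d) for d in docs]  # computed once, before any query arrives

query = "man bites dog"
q_vec = embed(query)
print([round(cosine(q_vec, v), 2) for v in doc_vectors])  # both documents score the same
print([pair_score(query, d) for d in docs])               # only the second one matches
```

The separately encoded vectors cannot tell the two documents apart because they contain the same words. The pairwise scorer can, but it has to run once per query-document pair, which is why it is reserved for a short candidate list.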

The Two-Stage Retrieval Pipeline

In a production environment, you don't run a cross-encoder over the whole corpus: it needs a full model pass for every query-document pair, so scoring millions of documents per query would take far too long. Instead, you build a two-stage pipeline. First, use a fast method like BM25 (keyword matching) or vector search to grab a candidate set of 20-100 documents. A set this size makes it much less likely that you've missed the needle in the haystack.

Second, pass those candidates through the re-ranker. The re-ranker assigns a relevance score to each document, and you re-sort the list based on these scores. Only the top-scoring documents are then sent to the LLM. This hybrid approach gives you the speed of a vector database with the precision of a deep-learning model.
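The two stages can be sketched as a single function that takes the scorers as parameters. Everything here is illustrative: `two_stage_search`, `keyword_overlap`, and `phrase_match` are hypothetical names, and `phrase_match` is only a cheap stand-in for the call you would make to a real re-ranking model.

```python
def two_stage_search(query, corpus, first_stage_score, rerank_score,
                     n_candidates=50, top_n=5):
    """Retrieve broadly with a cheap scorer, then re-sort with an expensive one."""
    # Stage 1: cheap score over the whole corpus, keep a generous candidate set.
    candidates = sorted(corpus, key=lambda d: first_stage_score(query, d),
                        reverse=True)[:n_candidates]
    # Stage 2: expensive score over the candidates only.
    scored = [(rerank_score(query, d), d) for d in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_n]]


def keyword_overlap(query, doc):
    """Stand-in for BM25 or vector search: count of shared words."""
    return len(set(query.lower().split()) & set(doc.lower().split()))


def phrase_match(query, doc):
    """Stand-in for a cross-encoder: strongly prefers the exact query phrase."""
    bonus = 2.0 if query.lower() in doc.lower() else 0.0
    return bonus + 0.1 * keyword_overlap(query, doc)


corpus = [
    "reset your password from the account settings page",
    "password policy: rotate your password every 90 days",
    "billing questions and invoices",
    "to reset password click the link in the email",
]

top = two_stage_search("reset password", corpus, keyword_overlap, phrase_match,
                       n_candidates=3, top_n=2)
print(top)
```

Keeping the scorers as arguments means you can swap the first stage (BM25, vector search, or a hybrid) and the re-ranker independently, and tune `n_candidates` and `top_n` without touching the pipeline itself.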

Advanced Re-Ranking Strategies

The field is moving beyond simple scoring. We're now seeing "agentic" re-ranking. A great example is JudgeRank, which doesn't just output a number. It mimics human reasoning by analyzing the query, creating a query-aware summary of the document, and then making a judgment call on whether it's actually useful. This reasoning-heavy approach has reported significant gains on benchmarks like BEIR, which suggests that "thinking" through the relevance can beat simply calculating a score.

For those dealing with images or video, multimodal re-ranking is becoming essential. Standard CLIP-based embeddings often struggle with complex visual-textual relationships. Advanced relevancy measures can now adaptively select the number of entries (k) based on the content's quality rather than using a fixed number, preventing the LLM from receiving irrelevant images that might mislead the final answer.
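One simple way to pick k adaptively is to cut the list where the scores stop looking trustworthy. The sketch below is a generic heuristic, not the method of any particular paper: it assumes relevance scores normalized to the range 0 to 1, and the `min_score`, `max_gap`, and `k_max` values are hypothetical defaults you would need to tune.

```python
def adaptive_top_k(scored_docs, min_score=0.5, max_gap=0.3, k_max=5):
    """Keep documents until the score drops below a floor
    or falls too far behind the best-scoring one."""
    ranked = sorted(scored_docs, key=lambda pair: pair[1], reverse=True)
    if not ranked:
        return []
    best = ranked[0][1]
    kept = []
    for doc, score in ranked[:k_max]:
        if score < min_score or best - score > max_gap:
            break
        kept.append(doc)
    return kept


# Hypothetical re-ranker output: (item id, relevance score).
print(adaptive_top_k([("img_a", 0.92), ("img_b", 0.88), ("img_c", 0.41), ("img_d", 0.10)]))
# → ['img_a', 'img_b']
print(adaptive_top_k([("img_x", 0.30)]))
# → []
```

Returning an empty list is a deliberate outcome here: when nothing clears the floor, sending no image can be safer than sending a misleading one.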

Practical Pitfalls and Trade-offs

While re-ranking is a huge win for accuracy, it's not free. The biggest cost is latency. Adding a re-ranking step can add anywhere from tens to a few hundred milliseconds to your request, depending on the model size, your hardware, and how many candidates you score. If your users need instant responses, you'll need to balance the size of your candidate set. A set of 100 documents is more likely to contain the right answer than a set of 20, but it takes longer to re-rank.
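A rough way to reason about that balance is a back-of-the-envelope latency estimate. The sketch below assumes the re-ranker scores candidates in sequential batches that each take a constant time. The 40 ms per batch and batch size of 16 are invented numbers for illustration, so measure your own model and hardware before relying on anything like this.

```python
import math


def rerank_latency_ms(n_candidates, ms_per_batch, batch_size):
    """Estimated re-ranking time if batches run one after another."""
    return math.ceil(n_candidates / batch_size) * ms_per_batch


def max_candidates(budget_ms, ms_per_batch, batch_size):
    """Largest candidate count whose re-ranking fits inside the latency budget."""
    full_batches = int(budget_ms // ms_per_batch)
    return full_batches * batch_size


# Hypothetical measurements: 40 ms per batch of 16 query-document pairs.
print(rerank_latency_ms(20, ms_per_batch=40, batch_size=16))    # → 80
print(rerank_latency_ms(100, ms_per_batch=40, batch_size=16))   # → 280
print(max_candidates(150, ms_per_batch=40, batch_size=16))      # → 48
```

Under these assumed numbers, a 150 ms budget leaves room for about 48 candidates, which is the kind of figure you would then validate against recall on your own queries.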

Another challenge is domain drift. A general-purpose re-ranker trained on Wikipedia might struggle with highly specialized legal or medical documents. In these cases, you may need a domain-specific model or an LLM-based re-ranker that can leverage a few-shot prompting strategy to understand your specific industry's terminology.

When Should You Implement Re-Ranking?

You don't always need a re-ranker. If your documents are short, very distinct, and your queries are simple, a standard vector search is enough. However, you should definitely implement re-ranking if:

- Your documents are dense and contain a mix of relevant and irrelevant information.

- You are noticing that the correct answer is often in the 10th or 20th retrieved document, but not the top 3.

- Your LLM is hallucinating because it's getting confused by "similar-looking" but irrelevant context.

- You are building an enterprise-grade search where precision is more important than sub-second latency.
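The second symptom in the list above is easy to measure if you have even a small set of queries with known relevant documents. This is a minimal sketch with a hypothetical helper name and made-up data. It reports the share of queries where the first relevant document was retrieved within `depth` results but landed outside the top `top_n`.

```python
def rerank_opportunity(results, top_n=3, depth=20):
    """Share of queries whose first relevant doc is inside `depth` but outside `top_n`."""
    buried = 0
    for ranked_ids, relevant_ids in results:
        ranks = [i for i, doc_id in enumerate(ranked_ids[:depth], start=1)
                 if doc_id in relevant_ids]
        if ranks and ranks[0] > top_n:
            buried += 1
    return buried / len(results) if results else 0.0


# Each entry: (first-stage ranking, set of known relevant ids). Data is made up.
results = [
    (["a", "b", "c", "d"], {"d"}),   # relevant doc at rank 4: buried
    (["a", "b"], {"a"}),             # relevant doc at rank 1: fine
    (["x", "y", "z"], {"q"}),        # relevant doc never retrieved
]
print(round(rerank_opportunity(results), 2))  # → 0.33
```

A high value suggests a re-ranker has something to work with. Queries like the third one, where the relevant document is never retrieved at all, point to a first-stage problem that re-ranking cannot fix.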

Does re-ranking increase the cost of my RAG pipeline?

Yes, it does. Because cross-encoders process the query and document together, they require more GPU compute per document than simple vector comparisons. However, because you only re-rank a small subset (e.g., 20 documents), the cost is usually manageable compared to the cost of the final LLM generation.

Can I use an LLM as a re-ranker?

Absolutely. You can prompt an LLM to rate the relevance of a document on a scale of 1-10. While this can be highly accurate (similar to the JudgeRank approach), it is usually significantly slower and more expensive than using a dedicated cross-encoder model like BGE-Reranker.
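Here is a minimal sketch of that idea. The prompt wording is an assumption, and `complete` is a hypothetical callable that takes a prompt string and returns the model's text reply, so you would wrap whatever LLM client you actually use. The `fake_complete` stub below only exists to make the example runnable without a model.

```python
import re

PROMPT = (
    "Rate how well the document answers the query on a scale of 1 to 10.\n"
    "Reply with the number only.\n\n"
    "Query: {query}\n\nDocument: {document}\n\nScore:"
)


def parse_score(reply, default=1):
    """Pull the first standalone integer between 1 and 10 out of the reply."""
    match = re.search(r"\b(10|[1-9])\b", reply)
    return int(match.group(1)) if match else default


def llm_rerank(query, documents, complete, top_n=3):
    """Score each document with one LLM call, then return the best `top_n`."""
    scored = [(parse_score(complete(PROMPT.format(query=query, document=d))), d)
              for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_n]]


def fake_complete(prompt):
    """Stub standing in for a real LLM call (assumption for this example)."""
    document = prompt.split("Document:", 1)[1]
    return "Score: 9" if "14 days" in document else "2"


docs = [
    "Our office is closed on public holidays.",
    "Refunds are issued within 14 days of a return.",
]
print(llm_rerank("How long do refunds take?", docs, fake_complete, top_n=1))
```

Note that this makes one LLM call per candidate, which is where the extra latency and cost come from. Falling back to a low default score when the reply cannot be parsed keeps a malformed answer from pushing a document to the top.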

What is the ideal size for the candidate set before re-ranking?

There is no one-size-fits-all, but most production systems use between 20 and 100 documents. The goal is to ensure the "true" answer is captured by the initial fast retrieval, while keeping the list small enough that the re-ranker doesn't introduce too much latency.

Will re-ranking help with hallucinations?

Yes. Hallucinations in RAG often happen when the LLM tries to make sense of irrelevant or contradictory information provided in the context. By filtering out the noise and only providing highly relevant documents, you reduce the chance of the LLM "inventing" a connection to fill the gaps.

Is BM25 still useful if I have vector search and re-ranking?

Yes. Hybrid search, which combines BM25 (keyword) and Vector Search (semantic), usually produces a better initial candidate set than either method alone. Re-ranking then polishes this combined list to ensure the top results are the most accurate.
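The article doesn't prescribe how to merge the two result lists. One common choice is Reciprocal Rank Fusion (RRF), which only needs the rank positions and so sidesteps the problem that BM25 scores and cosine similarities live on different scales. The sketch below uses made-up document ids, and k=60 is the constant commonly used with RRF.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of doc ids into one list.
    Each appearance contributes 1 / (k + rank) to a document's fused score."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical first-stage outputs for the same query.
bm25_ranking = ["d3", "d1", "d7"]
vector_ranking = ["d1", "d9", "d3"]

candidates = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
print(candidates)  # → ['d1', 'd3', 'd9', 'd7']
```

Documents that both retrievers agree on rise to the top of the fused list, and that list becomes the candidate set you hand to the re-ranker.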

Comments

Kayla Ellsworth

imagine thinking a slightly more expensive model is a "solution" to a fundamental flaw in how machines mimic thought. we're just layering patches on top of patches and calling it progress. ground-breaking stuff really.

April 12, 2026 AT 20:15

Soham Dhruv

dont worry about the latency too much man just cache the common queries its a lifesaver for the user experience

April 14, 2026 AT 13:58

Jason Townsend

funny how they talk about "noise" and "filters" while the big tech companies use these same cross-encoders to decide what info you actually get to see based on what they want you to believe the algorithm is just a tool for digital censorship

April 15, 2026 AT 09:49

Angelina Jefary

It is absolutely terrifying that we are trusting these "black box" re-rankers to prioritize information. Who is auditing the training sets for these cross-encoders? Probably some corporate entity with a hidden agenda to steer the LLM toward a specific narrative while pretending it's all just about "relevance scores." The lack of transparency in the weightings of these models is a glaring security risk that no one is talking about because they're too obsessed with their precious benchmarks.

April 16, 2026 AT 22:10

Destiny Brumbaugh

USA is leadin the way in this AI race and we sure as hell know how to make these things fast and loud!! dont let any other country tell you their RAG is better when we got the best hardware and the best engineers in the world period

April 18, 2026 AT 14:07

Sara Escanciano

The sheer arrogance of suggesting that "domain drift" is just a technical pitfall is offensive. You are ignoring the ethical implications of deploying these models in medical and legal fields where a re-ranking error isn't just a "latency issue" but a potential catastrophe for a human being. It is morally bankrupt to prioritize "enterprise-grade search" efficiency over the actual safety and accuracy of critical human data. We should be demanding absolute accountability for every single document filtered out by these algorithms before they are ever allowed near a patient's record.

April 20, 2026 AT 12:12

Elmer Burgos

it's a cool way to look at it and i think most people can find a middle ground between speed and accuracy that works for them

April 22, 2026 AT 02:08

Antwan Holder

Oh, the tragedy of it all! We seek the truth in a haystack of digital noise, only to find that our salvation lies in a Cross-Encoder. Is this not the ultimate metaphor for the modern human condition? We are all just candidate documents waiting for a higher intelligence to assign us a relevance score. I feel the void of the context window expanding within my own soul as I realize that even our most precise facts are just the result of a calculated distance in a vector space. We are drifting in a sea of embeddings, forever searching for a query that actually understands the depth of our despair. The latency of the heart is the only cost that truly matters in this cold, algorithmic wasteland we call progress. I can almost taste the bitterness of a low-scoring relevance result. It is a poetic nightmare of our own making, where the truth is re-ranked into oblivion.

April 23, 2026 AT 03:47