Keyboard and Screen Reader Support in AI-Generated UI Components

- Mark Chomiczewski

- 21 March 2026

- 10 Comments

When AI starts building user interfaces, the output doesn’t just need to look good; it needs to work for everyone. That includes people who rely on keyboards instead of mice, or screen readers instead of vision. The reality? Most AI-generated UIs today fail at this. They might generate a button, a form, or a modal dialog, but if the focus order is broken, ARIA labels are missing, or keyboard traps are lurking, the result isn’t usable. And that’s not just a technical flaw; it’s a civil rights issue.

Why This Matters Now

In 2023, WebAIM found that only 3% of the top 1 million websites met basic accessibility standards. That’s not because designers didn’t care. It’s because manually adding keyboard navigation, proper focus management, and screen reader support is slow, expensive, and easy to miss. Enter AI. Tools like UXPin, Workik, and Adobe’s React Aria now promise to automate accessibility. But automation doesn’t mean perfection. It means a starting point.

The goal isn’t to replace human judgment. It’s to give developers a head start. A 2024 study by Dr. Cynthia Bennett at Carnegie Mellon showed AI tools hit 78% compliance for basic keyboard navigation, but only 52% for complex screen reader interactions. That gap isn’t small. It’s the difference between a button that works and a form that locks users out.

What AI Actually Generates

AI doesn’t just spit out HTML. It generates semantic structure, ARIA attributes, and focus logic, all wrapped in code that frameworks like React or Vue can use. For example, if you ask an AI tool to create a modal dialog, it should output:

- A `<div>` with `role="dialog"`

- Proper `aria-labelledby` and `aria-describedby` attributes

- Keyboard trap logic that keeps focus inside the dialog until closed

- A way to close the dialog with `Esc`, plus `Tab` navigation between its controls
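The keyboard trap in the list above mostly comes down to wrap-around arithmetic over the dialog's focusable elements. Here is a minimal sketch, assuming the caller has already collected those elements in DOM order and knows which one currently has focus. `nextTrapIndex` is a hypothetical helper name, not something UXPin or any other tool is known to emit.

```typescript
// Hypothetical helper: given the index of the currently focused element
// inside an open dialog, return the index that should receive focus next.
// Tab moves forward, Shift+Tab moves backward, and both wrap at the edges
// so focus stays inside the dialog.
function nextTrapIndex(current: number, count: number, shiftKey: boolean): number {
  if (count <= 0) {
    // Nothing focusable inside: the caller should focus the dialog container.
    return -1;
  }
  if (current < 0 || current >= count) {
    // Focus is somewhere outside the dialog; pull it back to an edge.
    return shiftKey ? count - 1 : 0;
  }
  const step = shiftKey ? -1 : 1;
  return (current + step + count) % count;
}
```

In a browser, a `keydown` handler would call this when the key is `Tab`, prevent the default behavior, and focus the element at the returned index. Closing on `Esc` would be handled separately.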

Tools like UXPin’s AI Component Creator do this by analyzing design mockups and translating them into accessible React components. The tool doesn’t just make the button blue. It adds tabindex="0", ensures the button has a label, and checks contrast ratios against WCAG 2.1’s 4.5:1 minimum for normal-size text.
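The 4.5:1 check mentioned above follows the WCAG 2.1 definitions of relative luminance and contrast ratio. The sketch below works through that arithmetic, assuming colors arrive as six-digit hex strings. The function names are illustrative and are not taken from UXPin or any other tool.

```typescript
// Convert one 8-bit sRGB channel to its linear value, following the
// WCAG 2.1 definition of relative luminance.
function linearizeChannel(value8bit: number): number {
  const c = value8bit / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Relative luminance of a color written as "#rrggbb" (assumed format).
function relativeLuminance(hex: string): number {
  const r = parseInt(hex.slice(1, 3), 16);
  const g = parseInt(hex.slice(3, 5), 16);
  const b = parseInt(hex.slice(5, 7), 16);
  return (
    0.2126 * linearizeChannel(r) +
    0.7152 * linearizeChannel(g) +
    0.0722 * linearizeChannel(b)
  );
}

// Contrast ratio: (lighter + 0.05) / (darker + 0.05).
function contrastRatio(foreground: string, background: string): number {
  const l1 = relativeLuminance(foreground);
  const l2 = relativeLuminance(background);
  const lighter = Math.max(l1, l2);
  const darker = Math.min(l1, l2);
  return (lighter + 0.05) / (darker + 0.05);
}

// WCAG 2.1 AA threshold for normal-size text.
function meetsAaForNormalText(foreground: string, background: string): boolean {
  return contrastRatio(foreground, background) >= 4.5;
}
```

Black on white comes out at the maximum ratio of 21:1. A check like this only covers solid colors; text over images or gradients still needs a human look.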

Workik’s AI code generator goes further. It scans your existing UI, finds accessibility errors using Axe Core and Lighthouse, and then writes fixes. If a button has no text, it suggests an aria-label. If focus jumps randomly, it reorders the tabindex flow. This isn’t magic. It’s pattern recognition based on thousands of real-world accessibility audits.

Key Technical Requirements

For AI-generated components to be truly accessible, they must meet four technical benchmarks:

- Semantic HTML: Use the right element for the job. A button should be a `<button>`, not a `<div>` with a click handler.

- ARIA attributes: When HTML alone isn’t enough, ARIA fills in. Think `role="alert"` for error messages or `aria-expanded="true"` for collapsible menus.

- Focus management: When a modal opens, focus must move to it. When it closes, focus must return to what triggered it. AI often misses this.

- Keyboard navigation: All interactive elements must be reachable and operable with `Tab`, `Enter`, `Space`, and arrow keys.
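Arrow-key support from the last benchmark is where generated widgets tend to fall short, so a small sketch of the index logic may help. It assumes a vertical widget such as a menu or listbox in which one item is active at a time and movement wraps at both ends. The wrapping behavior and the `moveActiveIndex` name are assumptions for illustration, not a requirement stated in the article.

```typescript
// Keys a vertical composite widget is commonly expected to handle.
type ArrowNavKey = "ArrowDown" | "ArrowUp" | "Home" | "End";

// Hypothetical helper: return the index of the item that should become
// active after the given key press. Movement wraps at both ends.
function moveActiveIndex(key: ArrowNavKey, current: number, count: number): number {
  if (count <= 0) {
    return -1; // empty widget: nothing can be active
  }
  if (current < 0 || current >= count) {
    // No valid active item yet: start from the matching edge.
    return key === "ArrowUp" || key === "End" ? count - 1 : 0;
  }
  if (key === "ArrowDown") {
    return (current + 1) % count;
  }
  if (key === "ArrowUp") {
    return (current - 1 + count) % count;
  }
  if (key === "Home") {
    return 0;
  }
  return count - 1; // "End"
}
```

The component would then move focus (or update `aria-activedescendant`) to the item at the returned index, leaving `Tab` free to move in and out of the widget as a whole.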

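These aren’t optional. They map to WCAG 2.1 Level AA, the benchmark behind most accessibility law. EU EN 301 549 references WCAG 2.1 AA directly; U.S. Section 508 incorporates the earlier WCAG 2.0 AA, which covers the same keyboard and screen reader fundamentals.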

Tools Compared: What Works and What Doesn’t

| Tool | Focus | Framework Support | Cost | Screen Reader Support | Limitations |
|---|---|---|---|---|---|
| UXPin | Design-to-code workflow | React, Vue | $19/user/month | Good for static components | Fails on dynamic content, modal focus traps |
| Workik | Code fixes for existing UI | React, Angular, Vue | Free tier; $29/month premium | Strong on ARIA fixes | Limited to known patterns, no design input |
| React Aria (Adobe) | Low-level accessibility primitives | React only | Free, open-source | Excellent if implemented correctly | Requires deep expertise; no automation |
| Aqua-Cloud | Testing and auditing | Any | $499/month | Identifies issues, doesn’t fix them | Doesn’t generate code |

Here’s the truth: no tool gets it 100% right. UXPin’s AI might generate a perfectly labeled button, but if that button opens a dynamic dropdown with live-updating content, the screen reader might not announce the change. Workik can fix the label, but it won’t know if the user needs to navigate through 12 items with arrow keys. That’s where human testing comes in.

Real-World Failures and Fixes

In June 2024, a developer on Reddit reported spending three days fixing a keyboard trap in an AI-generated modal from UXPin. The modal closed fine with Esc, but when tabbing away, focus disappeared. The AI didn’t track where focus came from. It just closed the dialog and left the user stranded.
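The missing piece in that story is bookkeeping: something has to remember what had focus before the dialog opened. One way to sketch it, assuming dialogs can nest and close in reverse order, is a small stack. `FocusReturnStack` is a hypothetical name, and this is not how UXPin's output or the developer's fix actually worked.

```typescript
// Generic so the same logic works with HTMLElement in a browser and with
// plain strings in a unit test.
class FocusReturnStack<T> {
  private readonly triggers: T[] = [];

  // Call when a dialog opens, passing whatever had focus at that moment.
  remember(trigger: T): void {
    this.triggers.push(trigger);
  }

  // Call when the topmost dialog closes. Returns the element that should
  // get focus back, or undefined if nothing was recorded.
  restore(): T | undefined {
    return this.triggers.pop();
  }
}
```

In a browser, `T` would be `HTMLElement`: pass `document.activeElement` to `remember` just before opening, then call `.focus()` on whatever `restore` returns after closing. If the trigger has been removed from the page in the meantime, the code needs a fallback target, which this sketch leaves out.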

Another case: a financial services app used AI to generate charts. The AI assigned alt text like “Chart showing sales data.” But users with screen readers needed to know exact values: “Q1 sales: $2.3M, Q2: $2.8M.” AudioEye’s 2024 research found AI-generated alt text is only 68% accurate for complex visuals. That’s not good enough.
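When the underlying numbers are available, the text alternative can be built from the data instead of guessed from pixels. A minimal sketch, assuming the chart's series is available as labeled values; `describeSeries` and `formatMillions` are hypothetical names, and the output format simply mirrors the example in the paragraph above.

```typescript
interface DataPoint {
  label: string;
  value: number;
}

// Build a text alternative that states each value instead of only
// naming the chart.
function describeSeries(
  title: string,
  points: DataPoint[],
  formatValue: (value: number) => string
): string {
  if (points.length === 0) {
    return title + ": no data";
  }
  const parts = points.map((point) => point.label + ": " + formatValue(point.value));
  return title + ". " + parts.join(", ") + ".";
}

// Example formatter: dollars expressed in millions with one decimal place.
function formatMillions(value: number): string {
  return "$" + (value / 1e6).toFixed(1) + "M";
}

const quarterlySalesText = describeSeries(
  "Quarterly sales",
  [
    { label: "Q1", value: 2300000 },
    { label: "Q2", value: 2800000 },
  ],
  formatMillions
);
// quarterlySalesText is "Quarterly sales. Q1: $2.3M, Q2: $2.8M."
```

For long series, a linked data table is usually a better alternative than a very long description. That call still belongs to a person.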

The fix? Hybrid workflows. Let AI build the base. Then, have an accessibility specialist run a screen reader test with JAWS or NVDA. Use tools like Axe or Lighthouse. Check focus order. Verify all controls respond to keyboard. This approach cut accessibility defects by 63% in a Fortune 500 company’s 2024 pilot.

What’s Missing, and What’s Coming

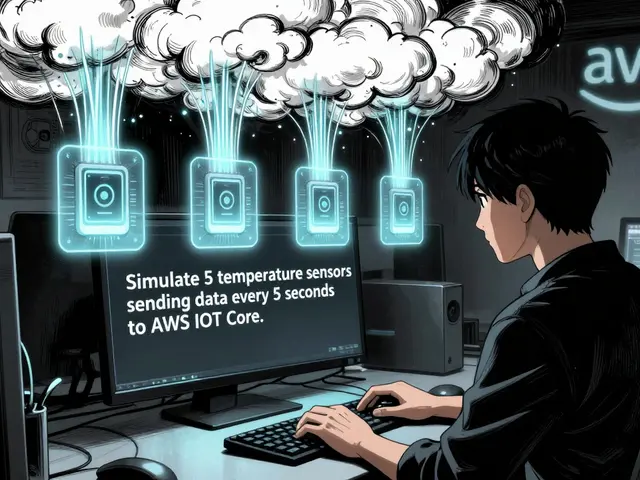

Current AI tools handle static components well. But they struggle with:

- Dynamic content updates (like live chat or real-time alerts)

- Complex drag-and-drop interfaces

- Personalized navigation (e.g., adapting to a user’s motor skills)

That’s changing. In September 2024, Google added AI-powered focus suggestions for dynamic content. Microsoft’s Fluent UI now auto-generates ARIA labels using Azure AI. And Jakob Nielsen’s vision of generating interfaces tailored to each user’s needs is no longer sci-fi. Imagine an AI that notices you use voice commands more than a mouse, and automatically restructures the UI for speech control.

Dr. Shari Trewin of IBM predicts AI will handle 80% of routine accessibility tasks by 2027. But she warns: “Complex cognitive and sensory needs? Those still need humans.”

What You Need to Do

If you’re using AI to build UIs, here’s your checklist:

- Never trust AI-generated code without testing. Always run Axe or Lighthouse.

- Test with a real screen reader. NVDA (free) or VoiceOver (built into Mac/iOS) are enough to start.

- Check focus order. Tab through every interactive element. Does it make sense?

- Verify all form controls have labels. Don’t use `aria-label` in place of a visible label unless the control is self-explanatory.

- Allocate 15-20% of your sprint time to accessibility. Treat it like testing, not an afterthought.
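The label item on that checklist is simple enough to script as a first pass before the manual review. The sketch below runs over a simplified snapshot of each control rather than the live DOM. The `ControlSnapshot` shape and the two finding names are assumptions made for illustration; a real audit would pull this information from the page or from a tool such as Axe.

```typescript
// Simplified, hypothetical snapshot of one form control.
interface ControlSnapshot {
  id: string;
  visibleLabel?: string; // text of an associated visible label, if any
  ariaLabel?: string; // value of the aria-label attribute, if any
}

interface LabelFinding {
  id: string;
  problem: "no-accessible-name" | "aria-label-only";
}

function hasText(text: string | undefined): boolean {
  return text !== undefined && text.trim().length > 0;
}

// Flag controls with no label at all, and controls that rely on
// aria-label alone so a person can decide whether that is acceptable.
function auditLabels(controls: ControlSnapshot[]): LabelFinding[] {
  const findings: LabelFinding[] = [];
  for (const control of controls) {
    const visible = hasText(control.visibleLabel);
    const aria = hasText(control.ariaLabel);
    if (!visible && !aria) {
      findings.push({ id: control.id, problem: "no-accessible-name" });
    } else if (!visible && aria) {
      findings.push({ id: control.id, problem: "aria-label-only" });
    }
  }
  return findings;
}
```

An `aria-label-only` finding is a prompt for review, not an automatic failure: an icon-only search button can be fine, while an unlabeled text field usually isn't.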

And if you’re building for government, finance, or education? You’re legally required to meet WCAG 2.1 AA. AI can help-but it won’t save you from a lawsuit. The DOJ settled a case in July 2024 where an organization’s AI-generated site failed Section 508, even though automated tools said it passed. The lesson? Automation doesn’t equal compliance.

Final Thought

Accessibility isn’t a feature. It’s a foundation. AI can scale it. But only humans can ensure it’s done right. The goal isn’t to build interfaces that pass tests. It’s to build interfaces that work for everyone. That’s not just good design. It’s the right thing to do.

Can AI-generated UIs pass WCAG 2.1 automatically?

AI can help generate components that meet many WCAG 2.1 criteria, especially around semantic HTML and basic ARIA usage. But automated tools alone cannot guarantee full compliance. Complex interactions, like dynamic content updates, focus management in modals, or keyboard navigation in custom widgets, often require manual testing. Studies show AI tools achieve 78% compliance for keyboard access but drop to 52% for screen reader interactions. Human validation is still required.

Which screen readers work with AI-generated components?

AI-generated components that follow WCAG standards work with all major screen readers: JAWS 2023, NVDA 2023.3, VoiceOver (macOS Ventura and iOS 16), and TalkBack on Android. Compatibility depends on proper ARIA implementation and semantic HTML, not the AI tool itself. If the component uses correct roles, states, and labels, it will be announced correctly. If it doesn’t, no screen reader will fix it.

Do I need to hire an accessibility expert if I use AI tools?

Yes, at least one person on your team should have accessibility training. AI tools reduce workload, but they don’t eliminate the need for human judgment. An expert can spot issues AI misses, like focus traps, ambiguous labels, or incorrect ARIA usage. According to IAAP’s 2024 survey, teams with a dedicated accessibility specialist had 40% fewer compliance failures. Even if you use the best AI, you still need someone to test with a screen reader and verify keyboard flow.

Are AI-generated alt texts reliable for images?

No, not for complex images. AI can generate basic alt text like “bar chart” with 90% accuracy. But for data-rich visuals (charts with trends, infographics, or diagrams) it only gets it right 68% of the time, according to AudioEye’s 2024 research. For example, an AI might say “sales chart” when the real need is “Q1 sales up 12% from Q4.” Always review and rewrite AI-generated alt text for accuracy, especially in financial, medical, or educational contexts.

What’s the biggest risk of relying on AI for accessibility?

The biggest risk is false confidence. If your AI tool says your interface is “accessible,” you might skip manual testing. That’s dangerous. The U.S. Department of Justice settled a case in July 2024 where an organization’s AI-generated site passed automated tests but failed Section 508 because focus order was broken and screen readers couldn’t announce form errors. Automation catches surface issues. It doesn’t understand user experience. Always test with real users and real assistive tech.

Comments

Kevin Hagerty

AI ain't gonna save us from our own laziness. I've seen tools generate 'accessible' modals that trap users like a mouse in a jar. And now we're supposed to pat ourselves on the back because it 'passed automated tests'? Lol. Just because your code doesn't crash doesn't mean it works. Someone's gonna get sued because someone thought AI = compliance. Stay woke.

March 22, 2026 AT 10:55

Janiss McCamish

I tested this with NVDA last week. AI got the button labels right 9/10 times. But when the modal opened, focus vanished. No one noticed until a user called support. We fixed it in 10 minutes. AI helps. But you still gotta tab through it yourself. Seriously. Just do it.

March 23, 2026 AT 23:40

Richard H

This whole accessibility thing is just woke corporate theater. Who even uses screen readers anymore? Most people just zoom in or use voice assistants. Stop making devs jump through hoops for 3% of users. We got real problems to solve. Like keeping our apps from crashing on Android 12.

March 25, 2026 AT 08:49

Kendall Storey

Let’s be real - AI is the new linting tool for accessibility. It catches the low-hanging fruit: missing alt text, bad contrast, wrong element types. But when you get into focus traps or dynamic ARIA updates? That’s where the real dev work begins. I use UXPin’s AI to scaffold components, then I throw it into Axe and test with NVDA. It’s not magic. It’s workflow. And yeah, I still need my accessibility specialist. No shame in that.

March 26, 2026 AT 13:37

Akhil Bellam

Oh, how quaint. You speak of 'semantic HTML' as if it were sacred scripture. I, however, have worked with legacy systems where 'div' was king and 'button' was a myth. AI-generated components? They're better than nothing - but let's not pretend this is a panacea. The real issue? We're outsourcing empathy to algorithms. Who programmed the AI to understand that a user with tremors needs larger tap targets? Did it learn from lived experience? Or just from GitHub repos of privileged devs? Pathetic.

March 27, 2026 AT 00:56

Amber Swartz

I just cried reading this. I have a friend who’s blind and uses JAWS. She told me last week she spent 45 minutes trying to submit a form because the AI-generated 'submit' button had no label. 45 MINUTES. And we’re talking about ‘cost’ and ‘automation’? This isn’t a bug. It’s a moral failure. We’re building digital walls. And people are getting trapped inside. I’m done being polite.

March 28, 2026 AT 13:39

Robert Byrne

You say 'AI gets 52% of screen reader interactions right'? That’s not a number - that’s a crime. I’ve audited code where aria-live regions were missing, focus didn’t return after modal close, and labels were just 'button-123'. No one caught it because 'it passed Lighthouse'. That’s like saying a bridge is safe because it doesn’t collapse in a wind tunnel. Real users aren’t test bots. You’re not just failing standards - you’re failing people. Fix your process. Or get out.

March 29, 2026 AT 05:34

Tia Muzdalifah

I work with a lot of older users. Some of them use screen readers. Others just hate mice. I’ve seen AI tools generate buttons that look perfect but don’t work with keyboard. I just go in, tab through, and fix what’s broken. It takes 10 minutes. Why not make that part of the build? We’re not doing this for the algorithm. We’re doing it for grandma who just wants to pay her bill.

March 30, 2026 AT 13:09

Zoe Hill

I love how the article says 'AI can help' but then lists all the ways it fails. Why not just say 'AI is a tool, not a replacement'? I’m so tired of tech folks acting like automation is the answer to everything. We still need humans. We always will. And that’s okay. It’s not a flaw. It’s the point.

March 31, 2026 AT 01:07

Albert Navat

Look, I get it - focus traps, ARIA, semantic HTML. But let’s not ignore the elephant in the room: most dev teams don’t even have accessibility in their CI/CD pipeline. AI tools are great, but if your pipeline doesn’t run Axe, or you don’t have a screen reader test in your QA cycle, you’re just delaying the inevitable. The real bottleneck isn’t the AI. It’s your org’s culture. Fix that first. Otherwise, you’re just decorating a dumpster fire.

April 1, 2026 AT 09:17