Legal Operations and Generative AI: Automating Contract Review and Redlining

- Mark Chomiczewski

- 15 April 2026

- 5 Comments

| Metric | Traditional Manual Process | AI-Enhanced Process (2026 Standard) | Projected Improvement |
|---|---|---|---|
| Review Cycle Time | Days to Weeks | Minutes to Hours | 50-90% Reduction |
| Risk Identification | Human-dependent (prone to fatigue) | Algorithmic scanning + Human oversight | 90%+ Accuracy Rate |
| Legal Spend | High outside counsel reliance | In-house automation of repetitive tasks | Up to 90% savings on specific tasks |

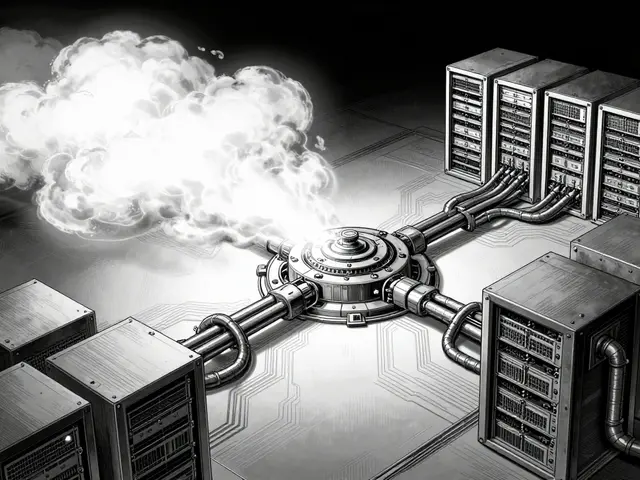

The Engine Under the Hood: LLMs and RAG

To get a legal operations system that actually works, you can't just plug in a generic chatbot. If you do, you'll run into "hallucinations," where the AI confidently invents a legal precedent that doesn't exist. Modern systems reduce this risk by combining Large Language Models (LLMs), neural networks built on the transformer architecture and trained on massive datasets to understand and generate human-like language, with a technique called Retrieval-Augmented Generation (RAG), an architecture that grounds LLM output by first retrieving relevant documents from a trusted external knowledge base and only then generating a response.

Think of RAG as giving the AI an open-book exam. Instead of relying on its training memory, the AI pulls the exact wording from your company's actual past deals or your current approved policy. This means that if the AI suggests a change to a "Limitation of Liability" clause, it's basing that suggestion on a deal you actually signed last month, not a generic template from the internet. Some specialized tools, like ReviewPro, go even further by using "Sifters" (proprietary algorithms trained specifically on thousands of real-world agreements) to reach accuracy rates of 95% or higher.

Turning Expertise into Code: The Power of Legal Playbooks

If LLMs are the engine, Legal Playbooks are the steering wheel: structured rule sets that encode an organization's legal standards, risk appetite, and preferred negotiation positions in a digital format. Without a playbook, an AI might tell you a clause is "unusual," but it won't tell you whether it's "acceptable for this specific vendor in the EMEA region." Playbooks transform a lawyer's head-knowledge into a scalable asset. They define the "Gold Standard" (what we want), the "Fallback Positions" (what we can live with), and the "Walk-away Points" (what is a deal-breaker). When an AI like Sirion scans a contract, it doesn't just look for grammar; it compares each clause against these individual risk elements. If a vendor insists on a 30-day payment term but your playbook mandates 60, the AI flags the deviation in real time and suggests the exact fallback language your General Counsel (GC) has already approved.
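
To make the gold standard / fallback / walk-away structure concrete, here is a minimal sketch of a single playbook rule for the payment-term example, written in Python. Everything in it is hypothetical: the names `PaymentTermRule`, `Finding`, `gold_days`, `walk_away_below_days`, and `fallback_clause`, the 30-day walk-away threshold, and the clause wording. Platforms such as Sirion encode playbooks in their own formats.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Position(Enum):
    """Where a counter-party's proposed term lands in the playbook."""
    GOLD = "gold standard"
    FALLBACK = "fallback position"
    WALK_AWAY = "walk-away point"


@dataclass(frozen=True)
class Finding:
    position: Position
    flagged: bool                    # True if the term deviates from the gold standard
    suggested_clause: Optional[str]  # pre-approved replacement text, if any


@dataclass(frozen=True)
class PaymentTermRule:
    """Hypothetical playbook rule for payment terms, measured in days."""
    gold_days: int             # what we want (e.g. net 60)
    walk_away_below_days: int  # anything shorter than this is a deal-breaker
    fallback_clause: str       # language the GC has already approved (hypothetical text)

    def review(self, proposed_days: int) -> Finding:
        if proposed_days >= self.gold_days:
            # Meets or beats the gold standard: nothing to redline.
            return Finding(Position.GOLD, flagged=False, suggested_clause=None)
        if proposed_days < self.walk_away_below_days:
            # Deal-breaker: escalate to a lawyer instead of auto-redlining.
            return Finding(Position.WALK_AWAY, flagged=True, suggested_clause=None)
        # Deviates from gold but is negotiable: propose the approved fallback.
        return Finding(Position.FALLBACK, flagged=True, suggested_clause=self.fallback_clause)


rule = PaymentTermRule(
    gold_days=60,
    walk_away_below_days=30,
    fallback_clause="Customer shall pay undisputed invoices within forty-five (45) days of receipt.",
)

finding = rule.review(30)  # the vendor insists on 30-day terms
print(finding.position.value)  # fallback position
print(finding.suggested_clause)
```

In this sketch a 30-day proposal is flagged and answered with the pre-approved fallback clause, while anything under 30 days is routed to a lawyer rather than redlined automatically. Keeping rules as plain data like this is one way to let the legal team adjust thresholds without touching the model itself.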

The New Workflow: From Intake to Archive

Integrating AI isn't about replacing the lawyer; it's about changing the order of operations. The manual "first pass" is gone. Here is what the modern AI-assisted workflow looks like:

- Intake and Drafting: You start with a standard template. The system captures metadata, such as the total contract value and the risk posture, before a single word is written.

- The AI First Pass: The AI scans the incoming counter-party draft. It identifies every deviation from your playbook, flags missing mandatory clauses, and suggests context-aware fixes.

- Attorney Review: This is where the human expertise kicks in. You don't spend time finding the errors; you spend time deciding if the AI's proposed fixes are strategically sound for this specific relationship.

- Efficient Negotiation: You use AI-surfaced leverage points. For example, the AI might note, "This vendor typically accepts our indemnity clause after the second round of redlines," giving you a strategic edge.

- Finalization and Obligations: Once signed, the AI extracts key dates and obligations and pushes them into your contract management repository so you don't miss a renewal date.
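
The finalization step is the easiest one to sketch. Assuming the AI has already extracted an auto-renewal date and a notice period from the signed contract, computing the last day to send a non-renewal notice is plain date arithmetic. The `Obligation` record, its fields, the `due_soon` helper, and the sample contract IDs are hypothetical and do not reflect any vendor's repository schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass(frozen=True)
class Obligation:
    """Hypothetical record pushed into a contract repository after signature."""
    contract_id: str
    renewal_date: date       # date the contract auto-renews
    notice_period_days: int  # days of notice required to stop the renewal

    def notice_deadline(self) -> date:
        # Last day on which a non-renewal notice still satisfies the notice period.
        return self.renewal_date - timedelta(days=self.notice_period_days)

    def days_until_deadline(self, today: date) -> int:
        return (self.notice_deadline() - today).days


def due_soon(obligations: List[Obligation], today: date, window_days: int = 30) -> List[Obligation]:
    """Return obligations whose notice deadline falls within the next `window_days`."""
    return [ob for ob in obligations if 0 <= ob.days_until_deadline(today) <= window_days]


saas = Obligation("MSA-001", renewal_date=date(2026, 12, 31), notice_period_days=90)
nda = Obligation("NDA-042", renewal_date=date(2027, 6, 30), notice_period_days=30)

print(saas.notice_deadline())  # 2026-10-02
print([ob.contract_id for ob in due_soon([saas, nda], today=date(2026, 9, 15))])  # ['MSA-001']
```

The point of the sketch is that the date you actually need to track is the notice deadline, not the renewal date itself: a 90-day notice period moves the real deadline three months earlier.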

Embedded AI vs. Standalone Platforms

One of the biggest hurdles in legal tech is "tool fatigue": lawyers hate switching between five different tabs. This has led to a split in how AI is delivered.

On one side, you have workflow-embedded tools like Spellbook. These run natively inside Microsoft Word, the industry-standard word processor legal professionals use for drafting and redlining. It flags non-market terms and suggests redlines directly under the lawyer's name, preserving the familiar "track changes" experience while speeding it up.

On the other side are purpose-built platforms like ReviewPro or Sirion. These are more like "command centers": they offer deeper analytics, better obligation tracking, and more sophisticated agent-based architectures. For instance, Sirion uses specialized "Redline Agents" and "IssueDetection Agents" that work in tandem to produce explainable outcomes. Instead of a vague suggestion, the system can tell you: "I am suggesting this change because it aligns with the 2025 updated Data Privacy Policy for California residents."
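
The idea of two cooperating agents producing an explainable redline can be illustrated with a small sketch. Here two plain functions stand in for the detection and redline agents: `detect_issues` finds clauses that fail a governing policy, and `propose_redlines` turns each issue into a change whose rationale names that policy. This is not Sirion's API; `Policy`, `Issue`, `Redline`, the keyword-based `check`, and the clause text are all hypothetical, and a real system would use an LLM rather than a string test to judge compliance.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Policy:
    name: str                     # e.g. an internal data privacy policy
    applies_to: str               # clause type this policy governs
    check: Callable[[str], bool]  # returns True if the clause text complies
    approved_text: str            # pre-approved replacement language


@dataclass(frozen=True)
class Issue:
    clause_type: str
    clause_text: str
    policy: Policy


@dataclass(frozen=True)
class Redline:
    original: str
    proposed: str
    rationale: str  # human-readable explanation shown to the lawyer


def detect_issues(clauses: Dict[str, str], policies: List[Policy]) -> List[Issue]:
    """Detection step: find clauses that fail the policy that governs them."""
    issues = []
    for policy in policies:
        text = clauses.get(policy.applies_to)
        if text is not None and not policy.check(text):
            issues.append(Issue(policy.applies_to, text, policy))
    return issues


def propose_redlines(issues: List[Issue]) -> List[Redline]:
    """Redline step: turn each issue into a change that cites its policy."""
    return [
        Redline(
            original=issue.clause_text,
            proposed=issue.policy.approved_text,
            rationale=f"I am suggesting this change because it aligns with the {issue.policy.name}.",
        )
        for issue in issues
    ]


privacy = Policy(
    name="2025 updated Data Privacy Policy for California residents",
    applies_to="data_sharing",
    check=lambda text: "may sell" not in text.lower(),  # toy compliance test
    approved_text="Vendor shall not sell or share Customer personal information.",
)

clauses = {"data_sharing": "Vendor may sell aggregated Customer data to partners."}
redlines = propose_redlines(detect_issues(clauses, [privacy]))
print(redlines[0].rationale)
```

Splitting detection from drafting keeps the explanation attached to a specific, named policy rather than to the model's free-form reasoning, which is what makes the outcome auditable.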

Overcoming the Trust Gap: Traceability and Validation

Let's be honest: no GC is going to sign off on a contract that was "AI-generated" without verification. The key to adoption is traceability. A high-quality AI system doesn't just give you a suggestion; it gives you a citation. You should be able to click a redline and see the exact historical contract it was modeled after.

The intelligence layer must also be dynamic. Your risk appetite in January may be different by July. The best systems update their logic automatically as new deals are closed, creating a virtuous cycle: the more you negotiate, the better the AI becomes at predicting what your counter-parties will accept.

Does Generative AI replace the need for a qualified attorney in contract review?

Absolutely not. AI is an amplifier, not a replacement. While AI can handle 90% of the repetitive "scanning" and "flagging," it cannot handle strategic nuance, high-level relationship management, or novel legal challenges that fall outside the parameters of a playbook. Human oversight remains the mandatory final step for all legal decision-making to ensure accuracy and ethical compliance.
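
The traceability and human-oversight requirements can be expressed as a simple gate: a suggestion reaches the draft only if it cites a source contract and a lawyer has approved it. The sketch below is a hypothetical illustration; `Suggestion`, `approve`, `applicable`, the clause IDs, and the source contract ID are invented names, not any product's API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Suggestion:
    clause_id: str
    proposed_text: str
    source_contract: Optional[str]    # citation: the past contract this was modeled on
    approved_by: Optional[str] = None  # set only when a lawyer signs off


def approve(s: Suggestion, attorney: str) -> None:
    """Record a lawyer's sign-off; untraceable suggestions cannot be approved."""
    if not s.source_contract:
        raise ValueError(f"{s.clause_id}: suggestion has no citation; review manually")
    s.approved_by = attorney


def applicable(suggestions: List[Suggestion]) -> List[Suggestion]:
    """Only cited, attorney-approved suggestions may be written into the draft."""
    return [s for s in suggestions if s.source_contract and s.approved_by]


# Hypothetical example data.
cited = Suggestion("LoL-7.2", "Liability capped at 12 months' fees.", source_contract="MSA-2026-03")
uncited = Suggestion("IND-9.1", "Mutual indemnity for IP claims.", source_contract=None)

approve(cited, attorney="attorney-1")
try:
    approve(uncited, attorney="attorney-1")
except ValueError as err:
    print(err)  # IND-9.1: suggestion has no citation; review manually

print([s.clause_id for s in applicable([cited, uncited])])  # ['LoL-7.2']
```

Refusing to approve an uncited suggestion, rather than silently dropping it, forces the lawyer to handle it manually, which keeps the human as the final decision-maker.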

How do legal teams prevent AI "hallucinations" in contracts?

The most effective way is through Retrieval-Augmented Generation (RAG). By forcing the AI to retrieve information from an organization's own verified documents and playbooks rather than relying on its general training data, the risk of fabricated terms is drastically reduced. Additionally, a strict human-in-the-loop validation process ensures every AI suggestion is approved by a lawyer.
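
The retrieval half of RAG can be sketched in a few lines. Production systems typically rank documents with vector embeddings; this sketch swaps in a simple bag-of-words cosine similarity so it runs with the standard library alone, and it stops at building the grounded prompt rather than calling a model. The clause library, source IDs, and prompt wording are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def tokens(text: str) -> Counter:
    """Lowercased word counts (a stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, library: List[Tuple[str, str]], k: int = 1) -> List[Tuple[str, str]]:
    """Return the k approved (source_id, clause) pairs most similar to the query."""
    q = tokens(query)
    return sorted(library, key=lambda item: cosine(q, tokens(item[1])), reverse=True)[:k]


def build_prompt(query: str, sources: List[Tuple[str, str]]) -> str:
    """Ground the model: it may draw only on the retrieved, approved language."""
    context = "\n".join(f"[{src}] {text}" for src, text in sources)
    return (
        "Using ONLY the approved clauses below, propose a redline and cite the source ID.\n"
        f"{context}\n\nCounter-party clause: {query}"
    )


# Hypothetical library of clauses from previously signed deals.
library = [
    ("MSA-2026-03", "Each party's total liability is limited to the fees paid in the twelve months preceding the claim."),
    ("NDA-2025-11", "Confidential information must be returned or destroyed within thirty days of termination."),
]

query = "Supplier's liability shall be unlimited for all claims."
top = retrieve(query, library)
print(top[0][0])  # MSA-2026-03
print(build_prompt(query, top))
```

Because the prompt carries the source ID alongside the retrieved text, the model's answer can cite the exact signed contract, which is also what makes the traceability described earlier possible.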

What is the difference between a generic LLM and a legal-specific AI tool?

A generic LLM is designed for broad conversation and general text generation. A legal-specific tool, like ReviewPro or Sirion, integrates LLMs with specialized legal algorithms, domain-specific training data, and organizational playbooks. These tools are built specifically for the "redlining" workflow, offering traceability, risk scoring, and integration with tools like Microsoft Word that generic chatbots lack.

How long does it take to implement an AI redlining system?

Implementation time varies based on the maturity of your playbooks. If you have clear, written standards, a native Word-based tool can be deployed in days. However, building a full-scale, playbook-driven intelligence system requires an upfront investment in encoding your risk positions and training your team; expect several weeks to a few months of calibration.

Can AI redlining actually reduce outside counsel spend?

Yes. By automating the first and second passes of contract review, legal teams can handle a much higher volume of contracts in-house. Instead of sending every mid-level agreement to a law firm for a preliminary review, the in-house team can use AI to clear the standard terms and only engage outside counsel for high-complexity, high-risk exceptions.
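
The triage described above can be written down as an explicit routing rule. The sketch below is hypothetical throughout: the `ContractProfile` fields, the $1,000,000 value threshold, and the five-deviation limit are illustrative placeholders a team would set for itself. It treats either high risk or high complexity as grounds for escalation; a team could equally require both.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    IN_HOUSE_AI = "in-house, AI-assisted review"
    OUTSIDE_COUNSEL = "outside counsel"


@dataclass(frozen=True)
class ContractProfile:
    contract_value: float     # captured at intake
    playbook_deviations: int  # flagged by the AI first pass
    walk_away_hits: int       # deviations that crossed a walk-away point
    novel_issue: bool         # lawyer judged it outside the playbook's scope


def route(p: ContractProfile, value_threshold: float = 1_000_000, max_deviations: int = 5) -> Route:
    """Hypothetical triage rule: keep standard work in-house, escalate exceptions."""
    high_risk = p.walk_away_hits > 0 or p.novel_issue
    high_complexity = p.contract_value >= value_threshold or p.playbook_deviations > max_deviations
    return Route.OUTSIDE_COUNSEL if (high_risk or high_complexity) else Route.IN_HOUSE_AI


routine_saas = ContractProfile(contract_value=40_000, playbook_deviations=2, walk_away_hits=0, novel_issue=False)
big_deal = ContractProfile(contract_value=2_500_000, playbook_deviations=1, walk_away_hits=0, novel_issue=False)

print(route(routine_saas).value)  # in-house, AI-assisted review
print(route(big_deal).value)      # outside counsel
```

Making the escalation criteria explicit also makes the savings measurable: every contract routed in-house is one that would otherwise have gone to a law firm for a preliminary review.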

Next Steps for Implementation

If you're a legal ops lead looking to start, don't try to automate everything at once. Start with your most repetitive contract type, usually NDAs or simple SaaS agreements.

- For the Novice: Start with a Word-native AI tool to get your team comfortable with AI-assisted drafting.

- For the Scaling Department: Invest in building your digital playbooks. Document your fallback positions clearly before choosing a platform.

- For the Enterprise: Look into agent-based platforms that offer full lifecycle management, from intake to obligation tracking.

Comments

Pamela Watson

I already knew all this because I work in a law office! 🙄 It's basically just fancy autocorrect but for lawyers who can't find their own mistakes lol 💅

April 15, 2026 AT 11:54

Renea Maxima

The obsession with "efficiency" is just a mask for our collective descent into intellectual atrophy 🙃 Why rush a contract when the slow agony of reading is where the real meaning lives? We're just automating the soul out of the law. ☁️

April 17, 2026 AT 09:54

Christina Kooiman

It is absolutely appalling, and I simply cannot fathom how some people get away with such atrocious phrasing in professional settings, but more importantly, the sheer lack of attention to basic punctuation in the tech world is driving me to the brink of a total nervous breakdown! I mean, really, if we are going to trust an algorithm to handle our multi-million dollar liability clauses, the very least we can do is ensure that the humans overseeing the process have a rudimentary grasp of the Oxford comma, because otherwise, we are just hurtling toward a linguistic apocalypse where meaning is discarded in favor of speed and everything just becomes a blurred mess of corporate speak that makes my head spin with sheer frustration!!!

April 18, 2026 AT 22:54

michael T

This whole "automation" vibe is just a cold, sterile wasteland that sucks the life out of the courtroom drama! 😫 I remember when law was about the blood, sweat, and tears of a handwritten brief, and now we're just feeding our hopes and dreams into a digital shredder called RAG. It's a kaleidoscopic nightmare of efficiency that leaves us all feeling like hollowed-out husks of our former professional selves, just drifting in a sea of optimized redlines while our passion evaporates into the cloud. Absolute tragedy!

April 20, 2026 AT 05:45

Stephanie Serblowski

Omg, the synergy here is just breathtaking! 🌟 I love how we're leveraging these paradigm-shifting LLMs to optimize our low-hanging fruit, even though the idea that a machine can "understand" a relationship is just peak comedy. 🤡 It's so wonderful that we're all just pretending the human element isn't being totally disrupted while we celebrate our new 90% reduction in thinking time! Total win-win for the ecosystem! ✨

April 21, 2026 AT 13:34