Long-Context Generative AI: Rotary Embeddings, ALiBi, and Memory Mechanisms

- Mark Chomiczewski

- 14 April 2026


The real struggle with long contexts is that standard Transformers are computationally expensive. As the text gets longer, the cost of self-attention grows quadratically with its length. To fix this, researchers developed three main paths: two smarter ways to track position (RoPE and ALiBi) and a family of memory mechanisms that avoid keeping everything in active memory. If you're building an app that needs to analyze entire codebases or sustain an 8-hour conversation, understanding these trade-offs is the difference between a tool that works and one that hallucinates every five minutes.
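To make the quadratic growth concrete, here is a rough back-of-the-envelope sketch. It assumes a naive implementation that materializes the full attention score matrix for one layer. The head count (32) and 2-byte values are illustrative assumptions, not figures from any specific model, and fused kernels such as FlashAttention avoid storing this matrix at all (the compute still scales quadratically).

```python
def attention_score_bytes(seq_len: int, num_heads: int = 32, bytes_per_value: int = 2) -> int:
    """Bytes needed to hold one layer's full (seq_len x seq_len) score matrix per head.

    num_heads and bytes_per_value are illustrative assumptions.
    """
    return num_heads * seq_len * seq_len * bytes_per_value


# Doubling the sequence length quadruples the size of the score matrix.
for n in (8_000, 16_000, 32_000):
    gib = attention_score_bytes(n) / 2**30
    print(f"{n:>6} tokens -> {gib:8.1f} GiB per layer")
```

The point of the sketch is the ratio between rows, not the absolute numbers: each doubling of context multiplies this cost by four.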

The Precision of Rotary Positional Embeddings

For a long time, AI models used absolute positional embeddings, which basically tag each word with a number (Word 1, Word 2, etc.). This fails miserably when you encounter a sequence longer than what the model saw during training. Enter Rotary Positional Embeddings, often called RoPE. Introduced in the 2021 "RoFormer" paper, RoPE doesn't just tag a word; it rotates the representation of the word in a high-dimensional space.

Think of it like a clock hand. Instead of saying "this word is at position 50," RoPE rotates each word's query and key vectors by an angle proportional to its position, so the attention score between two words depends only on the distance between them. Because the rotation is consistent, the model can extrapolate to sequences 2 to 4 times longer than its training set with surprising reliability. This is why 63% of the top 20 long-context models rely on it. If you're doing coding tasks, RoPE is your best bet because it has superior positional awareness: it can track how a function call on line 10 relates to a definition on line 1,000.
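The clock-hand idea can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: it rotates consecutive pairs of vector components using the frequency schedule from the RoFormer paper (base 10000), and the example vectors `q` and `k` are made-up values.

```python
import math


def rope(vec, position, base=10000.0):
    """Rotate consecutive pairs of `vec` by angles that grow with `position`."""
    dim = len(vec)
    assert dim % 2 == 0, "RoPE rotates pairs, so the dimension must be even"
    out = []
    for i in range(0, dim, 2):
        # Each pair gets its own frequency; early pairs rotate fastest.
        theta = position * base ** (-i / dim)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out.extend([x * c - y * s, x * s + y * c])
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# Illustrative query/key vectors (arbitrary values).
q = [0.3, -1.2, 0.8, 0.5]
k = [1.0, 0.4, -0.7, 0.2]

# Same offset (3 tokens apart) at very different absolute positions.
near = dot(rope(q, 10), rope(k, 7))
far = dot(rope(q, 1010), rope(k, 1007))
print(abs(near - far) < 1e-9)
```

Both scores agree up to floating-point error, which is the property the paragraph above describes: the score is a function of the gap between the two positions, not of where they sit in the sequence.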

The catch? It's not free. RoPE adds about 10-15% computational overhead. More importantly, once you push past 500K tokens, the memory growth becomes a wall. You'll need a beefy rig (think 80GB+ of VRAM on an NVIDIA H100) to keep things from crashing.

ALiBi: The Efficiency Powerhouse

While RoPE is about precision, ALiBi (Attention with Linear Biases) is about raw scaling. Developed by researchers at the University of Washington, Facebook AI Research, and the Allen Institute for AI, ALiBi does away with positional embeddings entirely. Instead, it adds a simple linear penalty to the attention score based on how far apart two words are. The further away a word is, the less the model "pays attention" to it by default.

This approach is incredibly elegant. Because there are no embeddings to learn or store, ALiBi reduces memory requirements by 7-12% and can slash pretraining costs by up to 22%. In terms of scaling, it's a beast: it can reliably extend to 8 times the training length. If you're using a model like BLOOM or MPT, you're seeing ALiBi in action.

However, this simplicity comes with a cost. ALiBi is slightly worse at "positional reasoning." If your task requires the AI to be pinpoint-accurate about the exact structure of a complex document, you might notice a 5-7% drop in accuracy compared to RoPE. There's also a technical quirk: ALiBi doesn't always play nice with 4-bit quantization, which can lead to an 8-10% accuracy drop in compressed models.
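The linear penalty itself is small enough to sketch directly. The version below follows the slope schedule from the ALiBi paper for a power-of-two number of heads (a geometric sequence starting at 2^(-8/n)) and builds the causal bias matrix that gets added to the raw attention scores before softmax. The function names are illustrative, not taken from any library.

```python
def alibi_slopes(num_heads: int) -> list[float]:
    """Per-head slopes: a geometric sequence, assuming num_heads is a power of two."""
    start = 2 ** (-8 / num_heads)
    return [start ** (h + 1) for h in range(num_heads)]


def alibi_bias(seq_len: int, slope: float) -> list[list[float]]:
    """Causal bias matrix: 0 on the diagonal, more negative the further back a key is.

    Future positions (j > i) are masked with -inf, as in standard causal attention.
    """
    return [
        [slope * (j - i) if j <= i else float("-inf") for j in range(seq_len)]
        for i in range(seq_len)
    ]


slopes = alibi_slopes(8)
print(slopes[0], slopes[-1])       # steepest and shallowest head
print(alibi_bias(4, slopes[0])[3])  # last query row for the steepest head
```

Heads with steep slopes focus on nearby tokens, while heads with shallow slopes can still reach far back. Nothing here is learned, which is where the memory and pretraining savings come from.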

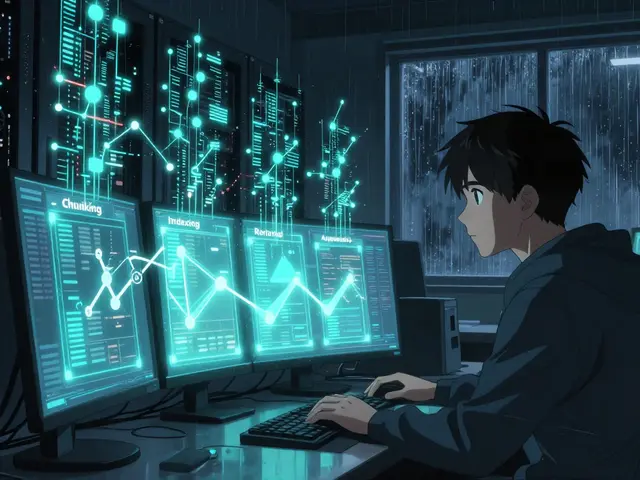

| Feature | Rotary Embeddings (RoPE) | ALiBi |
|---|---|---|
| Primary Strength | High positional precision | Extreme length extrapolation |
| Resource Cost | 10-15% more compute | 7-12% less memory |
| Max Scaling | 2-4x training length | Up to 8x training length |
| Best Use Case | Coding, Complex Logic | Fast Pretraining, Massive Text |

Memory Mechanisms: Beyond Brute Force

Even with RoPE or ALiBi, you can't just keep expanding the window forever. Eventually, the energy cost becomes astronomical: processing 1 million tokens takes over 3 times the power of a 128K window. This is where Memory Mechanisms come in. These are strategies to "remember" the past without keeping every single token active in the attention computation.

There are three main ways AI handles this now:

- Hierarchical Compression: Used in models like Claude Opus 4.5. It takes old parts of the conversation and compresses them into summaries, reducing the data to about 20-30% of its original size. It keeps the flow, but you lose the nuance: roughly 12-15% of the fine details vanish per cycle.

- External Vector Storage: This is essentially RAG (Retrieval-Augmented Generation) baked into the model. GPT-5.2 uses this to maintain 95% recall across 1 million tokens by storing data in a Pinecone vector database. It's incredibly accurate, but it introduces a slight lag (120-180ms) every time the AI has to "look something up."

- Recurrent State Preservation: Featured in Z AI's GLM-4.7. Instead of a window, it maintains a 4096-dimensional hidden state that evolves. It's a more "fluid" memory, with only 0.8% degradation per 100K tokens.
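As a rough illustration of the external vector storage idea from the list above, here is a toy in-memory store. It is a sketch under heavy assumptions: the word-count "embedding" stands in for a learned embedding model, `ToyVectorStore` is a hypothetical class standing in for a real vector database, and the stored chunks are invented examples.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real system would use a learned model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ToyVectorStore:
    """Hypothetical stand-in for an external vector database."""

    def __init__(self):
        self.items = []  # list of (embedding, original chunk)

    def add(self, chunk: str) -> None:
        self.items.append((embed(chunk), chunk))

    def search(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [chunk for _, chunk in ranked[:k]]


store = ToyVectorStore()
store.add("the deploy script lives in tools/release.sh")
store.add("the database password rotates every 30 days")
store.add("unit tests run on every pull request")

# Only the best-matching chunk is pulled back into the model's context.
top = store.search("how often does the database password rotate")
print(top)
```

The design trade-off matches the one described above: the model's active context stays small because only retrieved chunks are loaded, and the price is an extra lookup step on every query.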

The industry is currently split. Some, like Google with Gemini 3 Pro Preview, are pushing the 1 million token brute-force window. Others, like Yann LeCun at Meta, argue that we should stop the "arms race" and instead build AI that forgets things the way humans do, keeping only what's relevant and tossing the rest.

Real-World Performance: The "Needle in a Haystack" Problem

If you see a model claim a "1M token window," don't trust it blindly. The gold standard for testing this is AA-LCR (Long Context Retrieval), which checks if the model can actually find a specific fact buried in the middle of a massive text block. Raw length is a vanity metric; retrieval accuracy is the reality.

For example, Gemini 3 achieves about 64% accuracy on these tests. GPT-5.2, despite having a smaller 500K window, hits 62%. Halving the window costs only two points of accuracy, which tells us that a smaller, more efficient window can be nearly as useful as a giant one that suffers from "lost in the middle" syndrome, where the AI remembers the start and end of a prompt but forgets the center.
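A needle-in-a-haystack check is simple to run yourself. The harness below is a minimal sketch: it buries one fact at different depths in filler text and records whether the model's answer contains it. `forgetful_model` is a deliberately crude, hypothetical stand-in that only "sees" the edges of its context, so it mimics "lost in the middle" behavior; in practice you would swap in a call to the model under test. The needle, filler, and window size are all made-up values.

```python
def build_haystack(needle: str, filler: str, total_sentences: int, depth: float) -> str:
    """Place `needle` at a relative depth (0.0 = start, 1.0 = end) among filler sentences."""
    position = round(depth * total_sentences)
    sentences = [filler] * total_sentences
    sentences.insert(position, needle)
    return " ".join(sentences)


def forgetful_model(context: str, window: int = 400) -> str:
    """Hypothetical stand-in for a model call: it only reads the first and last `window` characters."""
    visible = context[:window] + context[-window:]
    return "7421" if "7421" in visible else "unknown"


def retrieval_by_depth(model, depths, total_sentences: int = 200) -> dict:
    """Return {depth: True/False} for whether the model recovered the buried fact."""
    needle = "The vault code is 7421."
    filler = "The sky was grey that day."
    results = {}
    for depth in depths:
        context = build_haystack(needle, filler, total_sentences, depth)
        results[depth] = "7421" in model(context)
    return results


scores = retrieval_by_depth(forgetful_model, [0.0, 0.5, 1.0])
print(scores)
```

Sweeping depth and context length together gives a grid of pass/fail results, which says far more about a model than its advertised window size.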

In the field, users are feeling this. On Reddit's r/LocalLLaMA, developers overwhelmingly prefer RoPE for coding because the AI doesn't lose track of variable definitions. Meanwhile, enterprise users on G2 praise Gemini 3's ability to handle 500-page technical documents without needing to be reminded of the context every few prompts.

What is the main difference between RoPE and ALiBi?

RoPE (Rotary Positional Embeddings) uses rotation matrices to keep track of relative positions, offering high precision which is great for coding and logic. ALiBi (Attention with Linear Biases) applies a linear penalty to distant tokens, which makes it much faster to train and better at scaling to extreme lengths, though it's slightly less precise with document structure.

Does a larger context window always mean a better AI?

No. Raw context length is often a vanity metric. The critical measure is AA-LCR (Long Context Retrieval) accuracy. Many models experience "context degradation" where hallucinations increase by up to 35% in the final 10% of a long prompt, regardless of the theoretical window size.

How does hierarchical compression affect the AI's memory?

Hierarchical compression summarizes older parts of a conversation to save space. While this maintains the general thread of a discussion, it typically results in a loss of 12-15% of nuanced information per compression cycle, meaning the AI might remember *that* you discussed a topic, but forget the specific wording you used.
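To see how quickly that loss adds up, here is a small worked calculation. It assumes each cycle removes a fixed fraction of whatever detail is still left, so the losses compound; that compounding model is an assumption for illustration, not something the figures above guarantee.

```python
def detail_retained(loss_per_cycle: float, cycles: int) -> float:
    """Fraction of fine detail left after repeated compression, assuming compounding loss."""
    return (1.0 - loss_per_cycle) ** cycles


for cycles in (1, 3, 5):
    best = detail_retained(0.12, cycles)   # 12% lost per cycle
    worst = detail_retained(0.15, cycles)  # 15% lost per cycle
    print(f"after {cycles} cycle(s): {worst:.0%} to {best:.0%} of detail retained")
```

Under this assumption, five compression cycles leave roughly 44% to 53% of the original nuance, which is why very long conversations drift toward remembering topics rather than wording.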

What hardware is required to run long-context models?

Processing contexts above 500K tokens generally requires high-end enterprise hardware. Because RoPE and other mechanisms increase memory overhead, systems with 80GB of VRAM (like the NVIDIA H100) are typically necessary to avoid out-of-memory errors.
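A quick estimate of the key-value cache shows where that VRAM goes. The formula is the standard one (two tensors per layer, one for keys and one for values), but the model shape below (32 layers, 8 key-value heads, head dimension 128, 16-bit values) is a hypothetical configuration chosen for illustration, not the spec of any model named in this article.

```python
def kv_cache_gib(seq_len: int, num_layers: int, num_kv_heads: int,
                 head_dim: int, bytes_per_value: int = 2) -> float:
    """Approximate KV cache size in GiB for one sequence.

    The leading factor of 2 accounts for storing both keys and values.
    """
    total_bytes = 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value
    return total_bytes / 2**30


# Hypothetical model shape; adjust to match the model you are actually serving.
for tokens in (128_000, 500_000, 1_000_000):
    size = kv_cache_gib(tokens, num_layers=32, num_kv_heads=8, head_dim=128)
    print(f"{tokens:>9} tokens -> {size:6.1f} GiB of KV cache")
```

For this assumed shape, 500K tokens needs about 61 GiB for the cache alone, before counting model weights or activations, which lines up with the 80GB class of hardware mentioned above.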

Is RAG better than a long-context window?

It depends on the goal. RAG (via external vector storage) is better for preserving nearly 100% of original information across millions of documents and is more energy-efficient. A long-context window is superior for tasks requiring deep reasoning across a single, cohesive document where the relative order of information is crucial.

Next Steps and Implementation

If you're a developer looking to implement these, start with LangChain's RAG integration. It's the fastest way to get a "virtual" long context without needing a cluster of H100s. If you're fine-tuning your own model, RoPE is the safest bet for compatibility, as it only requires a few hundred lines of code change in most Transformer implementations.

For those in enterprise environments, focus on reliability metrics rather than token counts. A model that reliably handles 200K tokens is far more valuable than one that claims 1M but hallucinates every time you ask a question about the middle of the document. Keep an eye on upcoming releases like Claude 5, which promises "adaptive memory" to bridge the gap between brute-force windows and smart compression.

Comments

Kendall Storey

The VRAM wall is real. Trying to push high token counts on consumer gear is basically a nightmare unless you're into extreme quantization that nukes the perplexity. RoPE is definitely the meta for dev work right now.

April 14, 2026 AT 20:48

Janiss McCamish

RAG is still the most practical way for most people.

April 15, 2026 AT 15:20

Pamela Tanner

It is essential to remember that the "lost in the middle" phenomenon is not merely a hardware limitation but a fundamental challenge in attention mechanism design. Ensuring a model maintains high recall throughout the entire context window requires rigorous validation, as raw token counts often mislead developers regarding actual utility.

April 17, 2026 AT 12:31

Ashton Strong

I find it truly remarkable how these architectural advancements are democratizing the ability to analyze vast datasets. It is my belief that the synergy between RoPE and external vector storage will lead to an era of unprecedented productivity for researchers worldwide.

April 19, 2026 AT 02:39

Richard H

American companies need to stop relying on these research papers and just build the most powerful hardware possible to crush the competition. Who cares about a 10% overhead when we have the most compute power on the planet? Just throw more H100s at the problem and dominate the market.

April 19, 2026 AT 20:43

Steven Hanton

The point regarding hierarchical compression is quite interesting, as it mirrors certain aspects of human cognitive processing. While the loss of nuance is a drawback, perhaps this trade-off is a necessary step toward creating more efficient, human-like memory systems that prioritize relevance over raw data retention.

April 20, 2026 AT 05:19

Kristina Kalolo

The comparison between Gemini and GPT's retrieval accuracy is the most useful part of this. It proves that marketing a million tokens is often just a distraction from the actual precision of the model's retrieval capabilities.

April 21, 2026 AT 11:32

ravi kumar

This is a very helpful breakdown for anyone starting with LLMs. For those struggling with memory issues, I suggest starting with smaller windows and gradually scaling up after verifying the retrieval accuracy. It takes patience but leads to much more stable implementations. Just keep iterating and you will get the hang of it. The community is here to help. Many of us faced the same OOM errors when we first started. Don't let the complexity discourage you. Every small optimization counts. Focus on the logic first, then the scale. The hardware will eventually catch up to our needs. Consistency is key in fine-tuning. You'll see the improvements over time. Just stay curious and keep testing different embeddings. It is a long journey but very rewarding. Keep pushing forward.

April 21, 2026 AT 19:08