Regional Adoption Patterns: How Regulation Shapes Vibe Coding Usage

- Mark Chomiczewski

- 29 March 2026

- 10 Comments

Imagine asking your computer to build an app just by describing what you want it to do. You skip the syntax, ignore the semicolons, and focus entirely on the logic. This is the core promise of vibe coding, an approach to software development in which users express their intent through natural language prompts and let an AI model write the code. Coined by Andrej Karpathy in early 2025, the term has moved from buzzword to practical workflow in many studios. However, as we move through 2026, a critical question remains unanswered: does geography matter when you let an AI write your code? While hard data on regional adoption is still maturing, the regulatory environment is quietly dictating who gets to use it and how.

The Mechanics Behind Vibe Coding

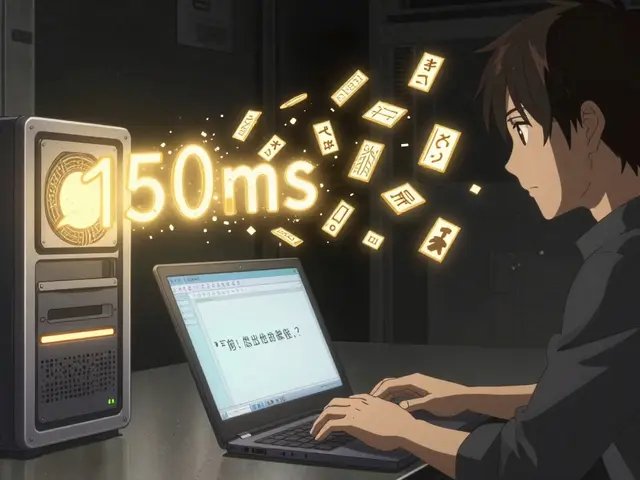

To understand why regulation matters, you first need to grasp what is actually happening under the hood. When a developer engages in vibe coding, they aren't typing commands in a traditional terminal. Instead, they interact with Large Language Models (LLMs) trained on massive datasets of public code. The process starts with prompt understanding. You tell the system you want a login screen with social authentication. The model draws on patterns it learned during training, plans the architecture, and generates executable code for frameworks like FastAPI or React.

This pipeline relies heavily on dependency management and automated assembly. In a standard workflow, a developer spends hours configuring environments and resolving package conflicts. With vibe coding, tools handle dependency resolution automatically. This speed comes at a cost, though. Because the model operates on probability rather than deterministic logic, the generated code might work perfectly for a prototype but fail in production without human review. This creates a tension between efficiency and compliance that regulators are starting to notice.

Current Adoption Metrics

The speed at which developers are adopting AI-assisted tools suggests vibe coding is becoming an industry standard faster than most anticipated. Recent surveys indicate that 84 percent of developers now use some form of AI coding assistance. More strikingly, estimates suggest that 41 percent of global code is already AI-generated. These numbers imply a massive infrastructure shift. However, they are global aggregates, and they mask the nuances of where this code is being written and sold.

In the United States, the culture leans heavily toward rapid prototyping. Startups prioritize getting a minimum viable product to market before worrying about legacy standards. In contrast, enterprise sectors like healthcare or finance often require stricter validation protocols. This cultural difference acts as a soft regulator even before formal laws kick in. Developers in Silicon Valley might embrace the "code first, refine later" mindset immediately, whereas developers in Berlin might demand verification steps before deploying any AI-generated line.

Regulatory Frameworks and AI Governance

Now, let's talk about the rules. There isn't a single law called the "Vibe Coding Act," but existing frameworks create a complex web of requirements. The General Data Protection Regulation (often referred to as GDPR) in Europe sets a high bar for data privacy. Since vibe coding tools often send prompts to cloud servers for processing, companies must ensure that user data within those prompts doesn't violate privacy rules.
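
One common guardrail is to scrub obvious personal data from a prompt before it leaves the developer's machine. The sketch below is a minimal illustration, not a compliance solution: the function name `redact_prompt` and both patterns are assumptions made for this example, and two regular expressions will miss most real-world personal data.

```python
import re

# Hypothetical patterns for illustration only; real personal-data detection
# needs far broader coverage than two regular expressions.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_prompt(prompt: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    redacted = EMAIL_RE.sub("[EMAIL]", prompt)
    redacted = PHONE_RE.sub("[PHONE]", redacted)
    return redacted


if __name__ == "__main__":
    print(redact_prompt("Email jane.doe@example.com about the login bug"))
```

Running the redaction locally, before any network call, means the cloud provider never receives the original values, whatever its logging policy turns out to be.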

If a developer uses vibe coding to build a feature for a European customer, they have to ask: where does my prompt go? Does the AI provider log it? If so, does it cross borders? Under GDPR, transferring personal data out of the European Economic Area requires specific safeguards. This means tech teams operating in Berlin face different constraints than those in Seattle. In the U.S., state-level laws like the California Consumer Privacy Act (CCPA) add another layer, demanding transparency on how consumer data is used. These laws force organizations to implement guardrails around AI tools that might otherwise seem frictionless.

| Region | Primary Regulation | Impact on Workflow | Risk Profile |
|---|---|---|---|
| European Union | GDPR, EU AI Act | Requires strict data consent, audit trails for AI decisions. | High Compliance Risk |
| United States (Federal) | N/A (Sector Specific) | Flexible innovation, sector-based restrictions (Finance, Health). | Moderate Legal Risk |
| United States (State) | CCPA, CPRA | Consumer notification, opt-out mechanisms required. | Data Privacy Risk |
| China | Generative AI Measures | Content filtering, algorithm registration mandated. | Strict Operational Restriction |
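
A team shipping to several of these regions might encode the table as data, so a deployment script can report which checks are still outstanding. The following is a rough sketch under stated assumptions: the region codes, the check names, and the `missing_checks` helper are all hypothetical labels invented for this example, not terms taken from any statute.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionPolicy:
    regulations: tuple[str, ...]
    required_checks: tuple[str, ...]


# Illustrative mapping derived from the table above; the check names are
# hypothetical labels, not legal requirements quoted from any statute.
POLICIES = {
    "EU": RegionPolicy(("GDPR", "EU AI Act"), ("data_consent", "audit_trail")),
    "US-CA": RegionPolicy(("CCPA", "CPRA"), ("consumer_notice", "opt_out")),
    "CN": RegionPolicy(
        ("Generative AI Measures",),
        ("content_filtering", "algorithm_registration"),
    ),
}


def missing_checks(region: str, completed: set[str]) -> list[str]:
    """Return the checks still outstanding for a region, in policy order."""
    policy = POLICIES.get(region)
    if policy is None:
        raise KeyError(f"No policy defined for region {region!r}")
    return [check for check in policy.required_checks if check not in completed]


if __name__ == "__main__":
    print(missing_checks("EU", {"data_consent"}))
```

Keeping the policy in one data structure, rather than scattering conditionals through the codebase, makes it easier to update when a jurisdiction changes its rules.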

Intellectual Property Ambiguities

Beyond privacy, there is the elephant in the room: ownership. Who owns the code generated by a model such as StarCoder2, an open-source model specialized for code generation? In the United States, copyright law currently protects only human authorship. If an AI writes a function block without significant human intervention, the resulting code may not qualify for copyright protection at all. This creates risk for companies trying to protect software built via vibe coding as proprietary intellectual property.

Legal scholars argue that if a company buys a subscription to a vibe coding platform, their contract usually dictates the terms of use. Some providers claim full ownership transfers to the user; others retain licenses to reuse your generated code for training. For a regulated entity like a bank, this ambiguity is unacceptable. They need guaranteed ownership. Consequently, banks might adopt vibe coding internally for utility scripts but ban it for core banking products until legislation clarifies IP status.

The Human-in-the-Loop Requirement

One of the few certainties in the current regulatory climate is that automation cannot be fully blind. Regulators are moving toward requiring "explainability" in algorithms. If an AI tool makes a decision that affects financial credit scores or medical diagnoses, a human must be able to explain why. Vibe coding reduces the visibility of the underlying code because developers often stop reading the syntax lines they didn't write.

This introduces a paradox. Vibe coding is designed to abstract code away until it is easy to ignore, but regulations want code transparent enough to verify. To satisfy this, many enterprises are implementing mandatory review gates. Even if you generate a whole module in seconds, a senior engineer must sign off on the logic. This slows down the "rapid prototyping" advantage but keeps the company safe from liability. As of 2026, this "context engineering" (knowing when to rely on AI versus manual intervention) is becoming a distinct job skill.
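
A review gate of this kind can be expressed as a simple merge rule. The sketch below assumes a hypothetical `ChangeSet` record and `can_merge` function; it is not tied to any real code-hosting platform, and an actual gate would normally be enforced through that platform's branch protection settings.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeSet:
    """A hypothetical record of a proposed change; not tied to any real tool."""
    author: str
    ai_generated: bool
    approvals: set[str] = field(default_factory=set)


def can_merge(change: ChangeSet, senior_engineers: set[str]) -> bool:
    """Allow AI-generated changes only with sign-off from a senior engineer
    other than the author; human-written changes pass this gate unchanged."""
    if not change.ai_generated:
        return True
    reviewers = (change.approvals & senior_engineers) - {change.author}
    return bool(reviewers)


if __name__ == "__main__":
    seniors = {"dana", "lee"}
    print(can_merge(ChangeSet("sam", True, {"dana"}), seniors))
```

Excluding the author from the set of valid reviewers is a deliberate choice here: a senior engineer who generated the module should not be the only person to approve it.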

Emerging Risks and Vendor Lock-In

Another factor shaping regional usage is platform dependency. Full-stack platforms offering vibe coding capabilities often create proprietary ecosystems. If you build your entire application using a specific closed-source vibe coding generator, migrating away becomes difficult. This vendor lock-in is scrutinized heavily in regions that favor antitrust enforcement. In the EU, the Digital Markets Act targets designated gatekeepers that prevent switching. A vibe coding tool that traps data could face regulatory pushback, at least where its provider falls within the Act's scope.

Security is also paramount. Generated code often inherits vulnerabilities from the training set. If the LLM was trained on insecure open-source repositories, it might replicate those flaws. Security audits must happen before deployment. Regional differences here involve how aggressively nations mandate cybersecurity standards. Countries with mature digital sovereignty agendas may require that the AI models themselves run locally within national borders to prevent data exfiltration.
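
As a small illustration of what an automated pre-deployment check can look like, the sketch below uses Python's standard `ast` module to flag direct calls to `eval` and `exec` in generated source. The deny-list and the function name `find_risky_calls` are assumptions for this example; a real audit would rely on a dedicated security scanner covering far more than two builtins.

```python
import ast

# A deliberately small, illustrative deny-list; real scanners check far more.
RISKY_CALLS = {"eval", "exec"}


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Return (line number, name) for each direct call to a deny-listed builtin."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in RISKY_CALLS
        ):
            findings.append((node.lineno, node.func.id))
    return sorted(findings)


if __name__ == "__main__":
    generated = "x = 1\nresult = eval(user_input)\nprint(result)\n"
    print(find_risky_calls(generated))
```

The source is only parsed, never executed, so the check is safe to run on untrusted model output.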

Looking Ahead to the Next Wave

As we move through 2026, the conversation is shifting from "Can we use AI?" to "How do we govern it responsibly?" The adoption rates will likely remain high due to the productivity gains, but the methods of implementation will diversify based on jurisdiction. We are seeing a bifurcation where some regions treat vibe coding as a standard utility, while others treat it as a sensitive technology requiring special clearance.

For developers, the takeaway is clear. You cannot simply download a tool and hit deploy regardless of location. You must map your tech stack against local compliance requirements. This involves checking data residency rules, copyright agreements, and audit expectations. While the specific data on regional adoption patterns is still emerging, the direction of travel is obvious: regulation is the hidden architect of our future development workflows.

Is vibe coding legal in all countries?

Generally, yes, but the data handling practices behind it vary by region. Tools that store user prompts must comply with local privacy laws like GDPR or CCPA.

Who owns code generated by AI tools?

Ownership depends on the service provider's terms of service. In the US, purely AI-generated works may lack copyright protection, so human modification is recommended.

Does vibe coding replace software engineers?

It augments them rather than replaces them. Engineers are shifting roles to focus on context engineering, architectural planning, and reviewing AI outputs.

Are there security risks associated with AI-generated code?

Yes, models can inadvertently replicate vulnerable code from training data. All generated code requires security scanning before production deployment.

How does the EU AI Act affect vibe coding?

The EU AI Act mandates risk assessments for AI systems used in sensitive sectors. Developers must ensure tools meet transparency and accuracy standards.

Comments

anoushka singh

Honestly this feels like another layer of bureaucracy disguised as innovation.

March 29, 2026 AT 15:32

Madhuri Pujari

OH! My god! They think AI writes code! But does it pass THE test! People wonder if anyone checks the work!!!

March 30, 2026 AT 15:07

vidhi patel

Your syntax is poor. The regulatory framework demands precision not emotional outbursts. You are displaying a lack of understanding here. Furthermore, your tone is unprofessional. Compliance requires exactness in every statement. Thus you are incorrect in this assertion.

April 1, 2026 AT 08:11

Aryan Jain

Big tech wants to own our minds. Code is freedom but they block it now.

April 1, 2026 AT 11:00

Tarun nahata

The horizon glows with new possibilities and electric dreams of creation! We shall rise above the shadows together! The future sparkles with bright opportunities ahead!

April 3, 2026 AT 00:56

Pramod Usdadiya

In India we have lot of startups using this tech but laws are not clear yet. It needs more guidence form government side please.

April 4, 2026 AT 20:32

Nalini Venugopal

Hey there! You almost got that part right! Guidance is spelled with an 'a' though. Great point regarding local startups!

April 6, 2026 AT 06:56

Amit Umarani

Writing styles vary wildly here but the core message remains obscured by fluff. The paragraph structure lacks cohesion entirely.

April 6, 2026 AT 21:53

Sandeepan Gupta

Structure matters but content drives value. Everyone tries their best to share thoughts clearly. Patience helps in reading these threads well.

April 7, 2026 AT 07:25

Noel Dhiraj

We see changes happening fast. Many people worry about privacy. I understand that fear completely. But we must look forward too. Technology moves regardless of us. If we stop now we fall behind. Companies will adapt regulations slowly. We need more data points actually. The EU acts differently than USA. China has its own strict rules now. This creates a fragmented landscape globally. Developers need to stay updated constantly. Learning curves are steep indeed. Yet the potential reward is high efficiency gains. Security remains a major concern for everyone. We cannot ignore that risk factor easily. Trust builds through transparency alone.

April 8, 2026 AT 08:10