Tokens and Vocabulary in Large Language Models: How Text Becomes Computation

- Mark Chomiczewski

- 7 February 2026

- 7 Comments

When you type a question into an AI chatbot, it doesn’t see words like you do. It sees numbers. Every letter, word, or part of a word gets broken down into tiny pieces called tokens. These tokens are the only thing the model understands. Think of them as the alphabet of machine language. Without tokens, there’s no way for a language model to turn your sentence into something it can think about, remember, or reply to. This is how text becomes computation.

What Exactly Is a Token?

A token is the smallest unit of text a language model processes. It can be a whole word like "cat," a part of a word like "run" and "ning" in "running," or even punctuation like "," or "?". The model doesn’t care about grammar or meaning-it just matches each piece of text to a number in its internal dictionary, called a vocabulary. That number then gets turned into a vector-a list of numbers that represent that token’s meaning in the model’s mind.

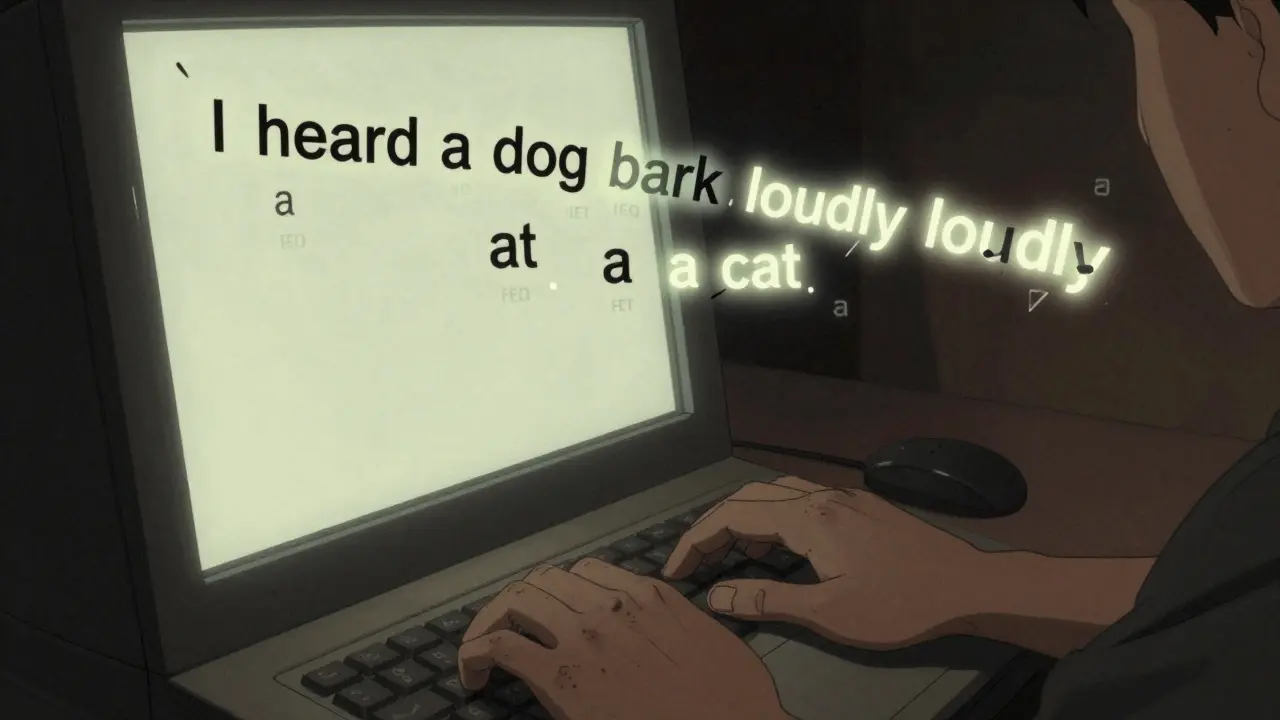

For example, the sentence "I heard a dog bark loudly at a cat" might be split into 9 tokens if using word-level tokenization. But if you used character-level tokenization, it would become 34 tokens. That’s a big difference. More tokens mean more memory, more processing, and higher costs. That’s why modern models don’t use either extreme. They use something smarter: subword tokenization.

Why Subword Tokenization Rules the Roost

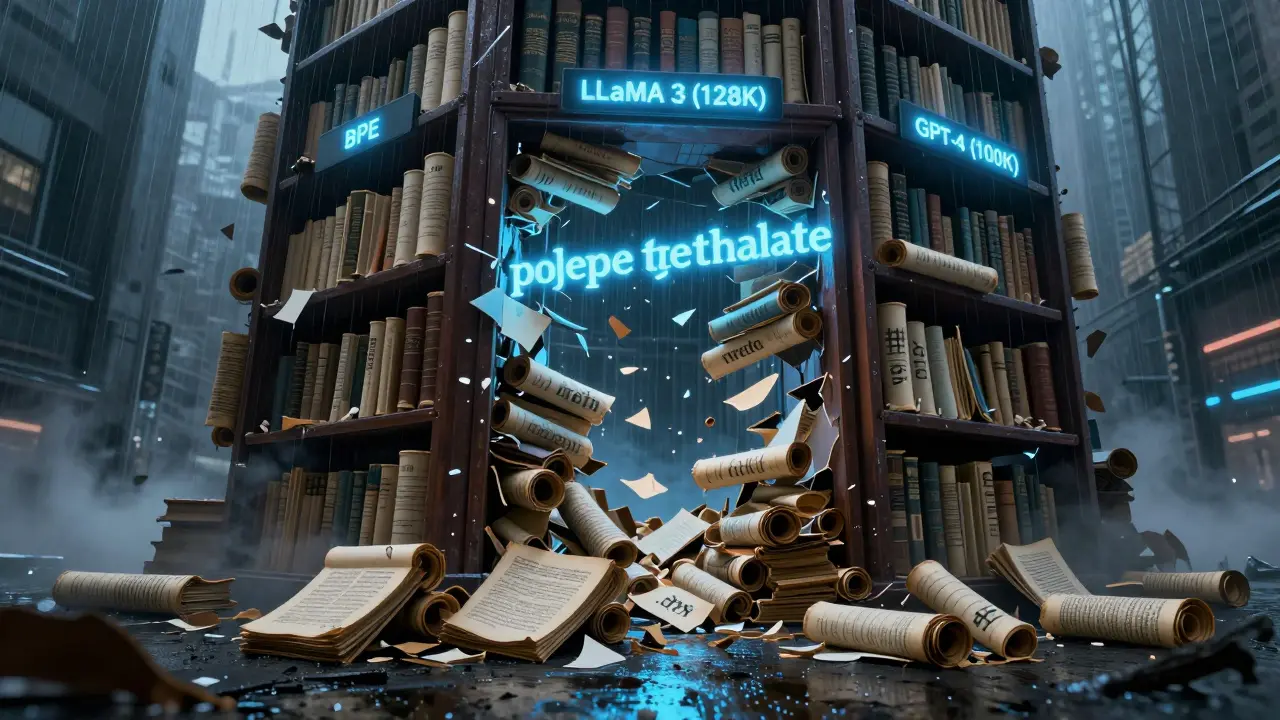

Early models tried word-level tokenization. But that meant needing a vocabulary with over a million entries to cover every possible word, including rare ones like "university" or "polyethylene terephthalate." That’s not practical. Character-level tokenization was too slow. Every word became a string of letters, and the model had to process way too many steps just to understand a single sentence.

Enter Byte-Pair Encoding (BPE). This method starts with individual characters and learns which ones appear together most often. Over time, it merges them into bigger chunks. So "dog" stays as one token, but "running" becomes "run" + "ning" because those two parts show up often in training data. It’s not based on linguistics-it’s based on frequency. That’s why "dogs" and "dog" are often separate tokens, even though they’re related. And why "racket" might split into "rack" and "##et" even though it’s one word.

Most major models today use BPE or a variation. GPT-4 uses around 100,000 tokens. LLaMA 3 uses 128,000. BERT, older but still widely used, has about 30,000. Each of these numbers represents the size of the model’s vocabulary-the list of all the pieces it knows how to recognize.

How the Vocabulary Works Behind the Scenes

The vocabulary is like a massive lookup table. When you input text, the tokenizer scans it from left to right, trying to match the longest possible sequence from its vocabulary. If it hits a word it doesn’t know, it breaks it into smaller pieces it does know. This is why technical terms often explode into multiple tokens. "Polyethylene terephthalate"? That’s five tokens for many models. But "cat"? One token. Simple.

Each token has an ID. That ID points to an embedding-a dense vector of numbers that the model uses to understand relationships. "King" and "queen" might have similar embeddings because they appear in similar contexts. "Dog" and "bark" might be close because they often appear together. These relationships are learned during training. Tokens are the bridge between human language and these mathematical representations.

Why Token Count Matters More Than You Think

Token count isn’t just a technical detail. It’s money. Most LLM APIs charge per token. Input tokens cost a few cents per thousand. Output tokens cost more. If your document gets split into twice as many tokens as expected, your bill doubles.

And it’s not just cost. Context windows-the maximum number of tokens a model can handle at once-directly limit what you can do. GPT-4 can handle 128,000 tokens. That’s about 300 pages of text. But if your input uses 100,000 tokens just to process a long legal contract, you’ve got almost no room left for the model’s response. That’s why developers now check token counts before sending anything to an API.

Simple word counting can be wildly wrong. Microsoft found that for technical documents, word counts can underestimate token usage by 30-50%. A sentence with 10 words might use 22 tokens. That’s why tools like Hugging Face’s tokenizer visualizer are now standard. Developers paste text in, see exactly how it splits, and adjust before sending it to the model.

Problems You Won’t Find in Tutorials

Here’s what no one tells you: tokenization is messy. Non-English languages often get tokenized worse than English. A Spanish word like "desarrollo" might split into "des" + "arrollo" if it’s rare, even though it’s one word. That adds tokens and slows things down. In 2024, developers reported an 18% drop in token count for Spanish text when switching from GPT-3.5 to GPT-4-because its vocabulary got bigger and better at handling common non-English words.

Medical, legal, and scientific terms are the worst offenders. IBM’s 2023 study showed medical jargon needed 22% more tokens than regular text. Why? Because models weren’t trained on enough of it. The tokenizer has never seen "methotrexate" before, so it breaks it into "meth" + "ot" + "rex" + "ate." That’s four tokens for one drug name. In a 10-page patient report, that adds up fast.

And then there’s inconsistency. Different versions of the same model can tokenize the same text differently. Hugging Face had over 200 open issues in early 2024 about tokenization changes between model updates. One developer on Reddit spent three days debugging why their code broke after upgrading from LLaMA 2 to LLaMA 3-turns out, the tokenizer split "JSON" differently.

What’s Changing in 2025 and Beyond

Models are getting smarter about tokens. LLaMA 3.1, released in January 2025, improved tokenization for non-English languages by 12-18%. That’s huge for global applications. OpenAI is working on GPT-5 with adaptive vocabulary sizing-meaning the model could dynamically adjust its token list based on the input. If you’re asking about quantum physics, it might temporarily expand its vocabulary to handle "superposition" and "entanglement" more efficiently.

Companies are starting to build custom tokenizers. IBM, Salesforce, and others are training their own vocabularies using internal documents. One enterprise client cut LLM costs by 37% just by preprocessing text to combine technical terms into single tokens before sending them to the model.

But the future isn’t all about bigger vocabularies. Researchers are exploring alternatives-like continuous embeddings that don’t rely on discrete tokens at all. But those are still experimental. For now, tokens are here to stay. Gartner predicts that by 2027, 60% of enterprise LLMs will use custom tokenizers tailored to their industry.

What You Need to Do Today

If you’re using LLMs in your work, here’s what matters:

- Always check token counts before sending long texts. Use Hugging Face’s tokenizer or OpenAI’s official tool.

- Preprocess text: Combine known technical terms into single tokens. Replace "polyethylene terephthalate" with "PET" if your audience knows it.

- Don’t assume word count = token count. For technical content, expect 30-50% more tokens.

- Test across models. GPT-4 handles non-English better than older models. LLaMA 3 is better for code. Choose based on your text.

- If you’re building an app, build token monitoring into your pipeline. Track cost per token, not per request.

Tokenization isn’t glamorous. It’s not AI magic. But it’s the foundation. Get it wrong, and your model is slow, expensive, or broken. Get it right, and you unlock faster, cheaper, more reliable AI.

What’s the difference between tokens and words?

A word is what humans use. A token is what the model uses. One word can be one token (like "cat"), or it can be split into multiple tokens (like "running" → "run" + "ning"). Sometimes, one token represents part of a word. So token count is almost always higher than word count, especially for technical or compound words.

Why do some models have bigger vocabularies than others?

Larger vocabularies help models handle rare words, technical terms, and multiple languages better. LLaMA 3’s 128,000-token vocabulary lets it understand more niche words than GPT-4’s 100,000. But bigger vocabularies mean larger models, more memory, and higher costs. Some models, like Google’s Gemma, use smaller vocabularies (32,000) to stay lightweight for edge devices.

Can I add my own tokens to a model’s vocabulary?

Yes, if you’re fine-tuning or using a library like Hugging Face. You can add custom tokens for company jargon, product names, or domain-specific terms. For example, adding "CRISPR-Cas9" as one token instead of letting it split into five reduces cost and improves accuracy. But you can’t change the base vocabulary of commercial APIs like GPT-4-you can only preprocess your input.

Does tokenization affect AI bias?

Yes. Tokenization favors frequent patterns in training data. If a language has less data-like Swahili or Bengali-it gets broken into smaller pieces more often. That makes it harder for the model to learn meaningful relationships. This is why models perform worse on low-resource languages. Experts like Dr. Emily Bender warn this creates systemic bias, not just in output, but in how language itself is processed.

How do I count tokens accurately?

Never guess. Use the official tokenizer for the model you’re using. For OpenAI models, use their tokenizer tool. For open-source models like LLaMA, use Hugging Face’s Tokenizers library. Paste in your text, and it’ll show you exactly how it splits. This is critical for budgeting and avoiding unexpected API costs.

Comments

Cynthia Lamont

This is the most insane thing I’ve ever read. Tokens aren’t just numbers-they’re the goddamn skeleton of AI. You type ‘cat’ and it sees 472. You type ‘running’ and it sees 12 and 89. No meaning. No context. Just numbers. And we’re okay with this? We’re building civilizations on this? I’m terrified.

And don’t even get me started on how ‘desarrollo’ gets chopped into ‘des’ and ‘arrollo’. That’s not tokenization-that’s linguistic assault. Spanish speakers deserve better. This isn’t progress. It’s colonialism with a GPU.

February 8, 2026 AT 06:43

Patrick Tiernan

lol so basically ai is just a really overcomplicated autocomplete

who cares how many tokens ‘polyethylene terephthalate’ takes as long as it spits out the right answer

also why is everyone acting like this is new news? this has been the case since like 2018

February 10, 2026 AT 05:59

Patrick Bass

Actually, the tokenization of compound words like ‘polyethylene terephthalate’ isn’t random-it’s based on frequency in training data. If a term appears rarely, it’s broken down into subword units that have higher statistical presence. This isn’t a flaw-it’s optimization.

Also, ‘desarrollo’ splitting into ‘des’ and ‘arrollo’ is likely due to low-frequency occurrence in English-centric training sets. Not a bug, just data imbalance.

February 10, 2026 AT 12:23

Tyler Springall

Let me be the first to say this: tokenization is the most boring subject in AI, and yet here we are, writing a 2000-word essay on it like it’s the Rosetta Stone.

Who decided we needed 128,000 tokens? Who gave them permission? I’m not even mad-I’m just disappointed. We could’ve been building flying cars. Instead, we’re arguing over whether ‘JSON’ should be one token or two.

Also, ‘racket’ → ‘rack’ + ‘##et’? That’s not intelligence. That’s a toddler with a hammer.

February 11, 2026 AT 23:22

Amy P

THIS. This is exactly why I hate using GPT-4 for legal docs. I paste in a 5-page contract and it costs $2.70. Then I realize-half the tokens are from ‘defendant’ being split into ‘def’, ‘end’, ‘ant’. That’s not efficiency. That’s a tax on precision. I’ve started pre-tokenizing everything now. It’s a pain, but it saves me $1200/month. If you’re not checking token counts, you’re bleeding money.

February 12, 2026 AT 09:25

Ashley Kuehnel

OMG thank you for this post!! I’ve been using LLMs for customer service bots and had no idea token count was screwing up my budget. I thought word count = cost. Nope. My ‘Hi, how can I help you today?’ was 12 tokens. My ‘Please send your invoice’ was 15. I was losing money on simple replies!

Just started using Hugging Face’s tokenizer tool-game changer. Also, I added ‘customer service rep’ as one token and cut our costs by 22%. Small tweaks = big savings. You guys are lifesavers!!

February 13, 2026 AT 18:59

adam smith

While the article presents a technically accurate overview of tokenization, it lacks sufficient empirical citations regarding the 30-50% underestimation claim. Furthermore, the assertion that ‘tokenization is messy’ is an oversimplification. The observed variance is attributable to corpus-specific token distribution, not systemic failure. A more rigorous analysis would contextualize token entropy across language families.

February 15, 2026 AT 18:46