How to Avoid LLM Vendor Lock-In: A Practical Migration Guide for 2026

- Mark Chomiczewski

- 16 May 2026

- 0 Comments

You are paying a "Token Tax" that is quietly eating your margins. Every time your application calls an external API for Large Language Models (LLMs), you are renting intelligence rather than owning it. In 2026, this dependency has shifted from a convenient shortcut to a strategic vulnerability. When providers raise prices, change terms, or experience outages, your business halts. The solution isn't just switching providers; it is building portable AI infrastructure.

Migrating between LLM providers is no longer a binary choice between "cloud API" and "self-hosted." It is a spectrum of control. By adopting a model-agnostic architecture, you can transition from full dependency to complete sovereignty without rewriting your codebase. This guide breaks down the practical steps to escape vendor lock-in, optimize latency, and secure your data.

The Five Classes of LLM Sovereignty

To understand where you stand, you need a framework. Industry analysts define five classes of LLM deployment that map your level of independence:

- Class 1: Pure API-dependent models. You have zero control over pricing, latency, or data privacy. This is full vendor dependency.

- Class 2: Fine-tuning proprietary models via APIs. You customize the output but remain locked into the provider's ecosystem and pricing structure.

- Class 3: Open-source models via managed endpoints. You use open weights but rely on a third party to host them. This is the first step toward portability.

- Class 4: Managed Kubernetes or serverless deployments. You control the infrastructure stack, often using services like Amazon EKS, but still depend on cloud providers for hardware provisioning.

- Class 5: Fully self-hosted infrastructure. You own the GPUs, the software, and the data. This offers maximum control but requires significant operational expertise.

The goal is not to jump straight to Class 5. That is risky and expensive. Instead, start in Class 3 to validate your application's performance with open-source models like Llama 3.1. Move to Class 4 when transaction volumes justify dedicated infrastructure. Graduate to Class 5 only when your internal team has the skills to manage GPU clusters reliably.

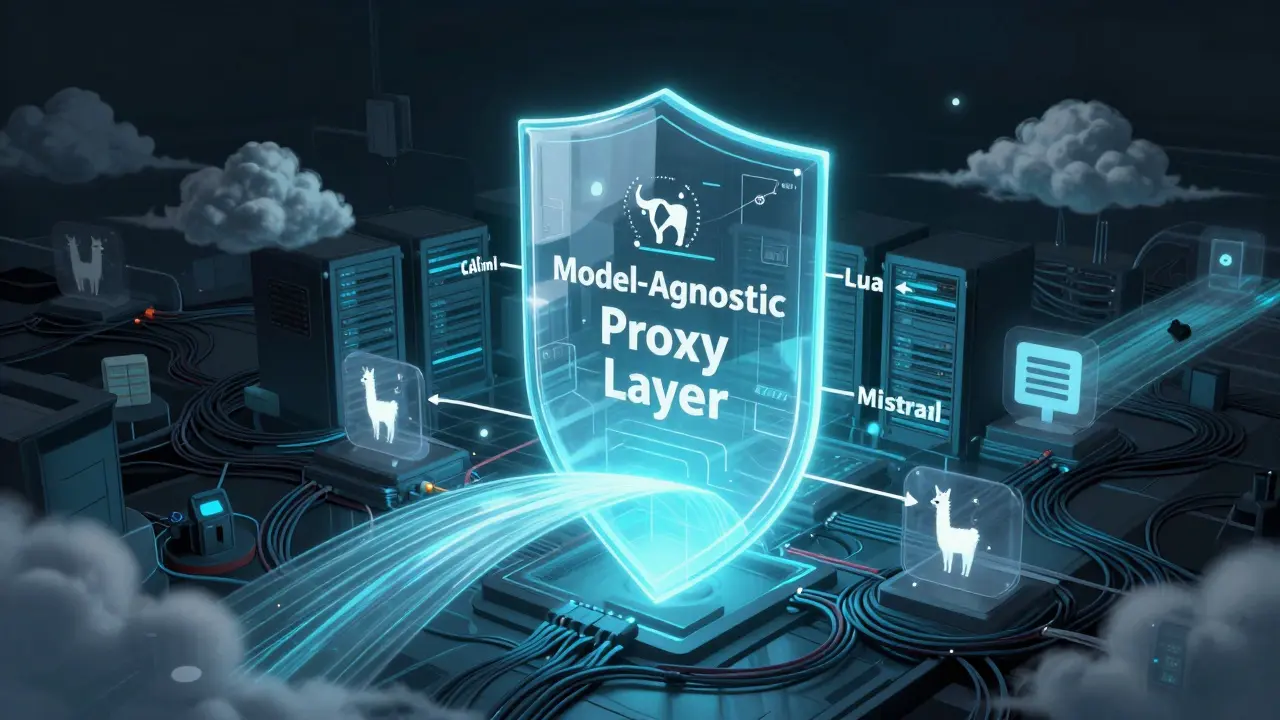

Building a Model-Agnostic Proxy Layer

The single most important technical step in avoiding lock-in is implementing a model-agnostic proxy layer. This sits between your application code and the LLM providers. It abstracts the underlying model implementation, ensuring your business logic remains unchanged regardless of which model is active.

With a properly configured proxy, you can switch from Llama 3.1 to Mistral Large 2 by editing a single configuration file, such as config.yaml. If a cloud provider goes down, the proxy can automatically failover to a local instance or a different cloud endpoint.

This layer also enables intelligent routing. You can configure rules to send simple tasks, like summarization, to fast, cheap local models like Phi-4. Complex reasoning tasks can be escalated to frontier models like Claude 3.5 Sonnet. This hybrid approach optimizes both cost and performance while maintaining flexibility.

| Class | Control Level | Cost Structure | Operational Complexity |

|---|---|---|---|

| Class 1 | None | Variable (Per-token) | Low |

| Class 3 | Medium | Fixed (Managed Hosting) | Medium |

| Class 5 | Full | Fixed (CapEx + OpEx) | High |

Latency and Performance Advantages

Cloud APIs introduce network overhead and queuing delays. During peak usage, response times can spike unpredictably. By deploying specialized Small Language Models (SLMs) on local hardware, you can achieve sub-200ms response times. This is critical for real-time applications like voice AI or instant autocomplete features.

When you eliminate the round-trip to a remote data center, you gain consistent latency. For applications requiring responses under 500ms, every millisecond counts. Local inference ensures that user experience quality does not degrade during high-traffic periods. This reliability is a key driver for migrating sensitive or high-volume workloads to self-hosted environments.

Data Privacy and Knowledge Base Migration

Vendor lock-in isn't just about compute; it's about data. Many organizations migrate their inference engines but leave their vector databases in the cloud. Services like Pinecone are convenient, but they create a secondary dependency. To achieve true sovereignty, you must migrate your knowledge base to self-hosted engines like Milvus or Qdrant.

Simultaneously, replace external embedding endpoints with local models like BGE-M3. This ensures that the semantic mapping of your proprietary documents never leaves your security perimeter. Sending sensitive legal or financial documents to external APIs for embedding generation introduces unnecessary data leakage risks. Keeping embeddings local closes this gap.

Infrastructure Orchestration Options

If you are not ready for full self-hosting, consider intermediate infrastructure options. Serverless GPU clusters from providers like Lambda Labs, RunPod, or CoreWeave offer automatic scaling without long-term hardware commitments. These platforms allow you to run open-source models on demand, reducing costs during low-usage periods.

For enterprises needing industrial-grade reliability, managed Kubernetes deployments using NVIDIA H100 GPUs provide a robust solution. Running these instances within a corporate VPC ensures that your AI infrastructure scales with user demand while remaining behind your firewall. This setup balances the ease of managed services with the security of private infrastructure.

Cost Analysis: The Token Tax vs. CapEx

The economic case for migration becomes clear at scale. API costs scale linearly with usage, creating what industry experts call the "Token Tax." As your user base grows, these costs can exceed the one-time investment in self-hosted hardware. Self-hosted infrastructure costs are largely fixed, providing price protection against future API increases.

Calculate your break-even point by comparing monthly API spend against the cost of equivalent GPU capacity. For high-volume applications, the return on investment for self-hosting often occurs within 12-18 months. Additionally, regulatory changes like the EU Cloud and AI Development Act (2026) may impose compliance requirements that favor portable, multi-provider architectures. Building flexibility now avoids costly rewrites later.

Operational Risks of Self-Hosting

Self-hosting introduces new challenges. Security responsibility shifts from the provider to your team. Misconfigurations can lead to vulnerabilities. Staff turnover becomes a risk if only one engineer understands the GPU stack. Hardware aging is another factor; NVIDIA drivers and software stacks require continuous updates to maintain performance and security.

To mitigate these risks, adopt an incremental migration strategy. Start with small workloads in Class 3 or 4 to build operational expertise. Document every configuration change. Implement automated monitoring and alerting for GPU health and latency metrics. Do not attempt to move your entire production workload to Class 5 overnight. Validate your ability to manage the infrastructure before committing fully.

What is the best way to start avoiding LLM vendor lock-in?

Begin by implementing a model-agnostic proxy layer. This allows you to swap models without changing application code. Start testing with open-source models via managed endpoints (Class 3) to validate performance and cost savings before investing in self-hosted infrastructure.

Is self-hosting LLMs more expensive than using APIs?

For low-volume usage, APIs are cheaper due to zero upfront costs. However, for high-volume applications, self-hosting becomes more cost-effective as API "Token Tax" scales exponentially. Calculate your break-even point based on your specific query volume and model size.

Which open-source models are best for migration?

Models like Llama 3.1, Mistral Large 2, and Phi-4 are excellent choices. They offer strong performance across various tasks and are widely supported by infrastructure providers. Phi-4 is particularly useful for low-latency, simple tasks due to its smaller size.

How do I handle data privacy during migration?

Migrate both your inference engine and your vector database. Use self-hosted engines like Milvus or Qdrant instead of cloud services like Pinecone. Replace external embedding APIs with local models like BGE-M3 to ensure sensitive data never leaves your security perimeter.

What are the risks of moving to Class 5 self-hosting?

Risks include increased operational complexity, security misconfiguration, staff dependency, and hardware maintenance. Mitigate these by incrementally building expertise in lower classes, automating monitoring, and maintaining up-to-date documentation for your GPU stack.