From Rule-Based NLP to Large Language Models: How AI Learned to Understand Language

- Mark Chomiczewski

- 22 March 2026

- 8 Comments

Thirty years ago, computers struggled to understand a simple sentence like "I want to book a flight to Denver." Today, they can write essays, solve math problems, and hold conversations that feel human. This isn’t magic. It’s the result of a quiet revolution in how machines learn language - one that moved from rigid rules to something far more powerful: learning from billions of examples.

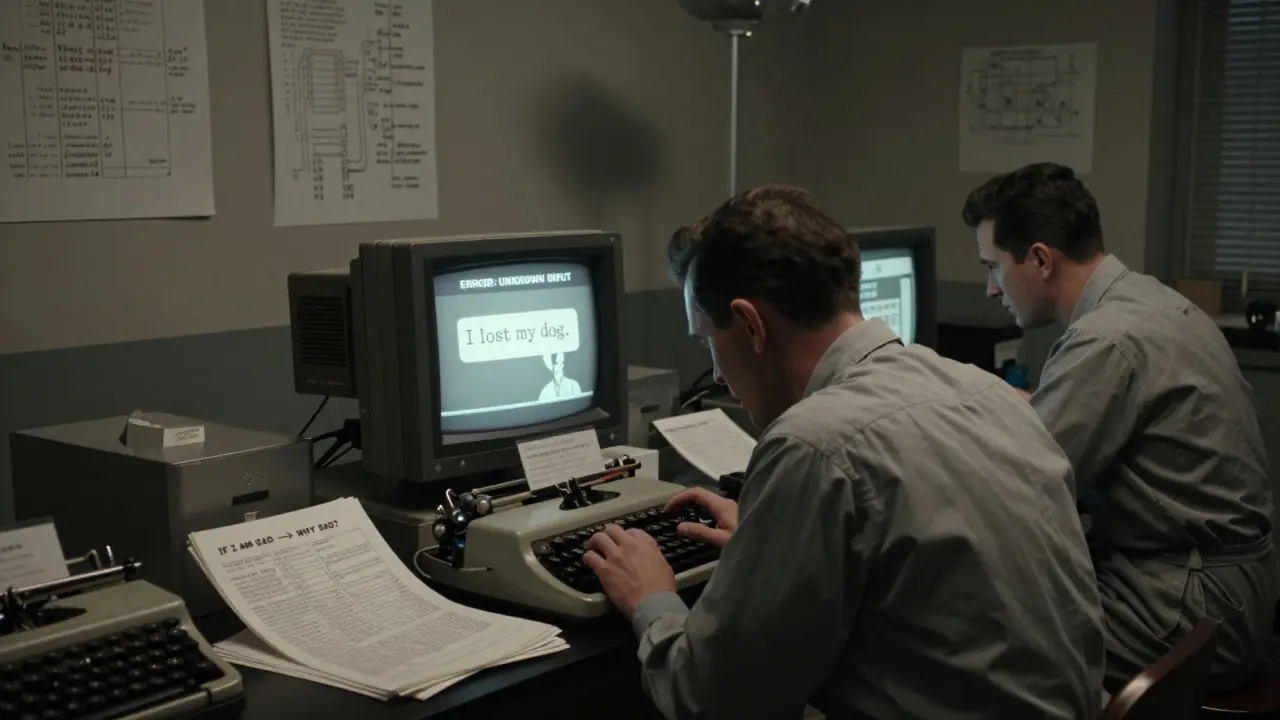

The Age of Hand-Coded Rules

In the 1950s, NLP was all about rules. Engineers sat down with linguists and wrote down every possible way someone might say something. If you typed "I am sad," a program like ELIZA would reply with "Why are you sad?" It looked smart - until you tried something unexpected. "I lost my dog today." ELIZA had no rule for that. So it replied with something random, or just froze. These systems worked only in tiny, controlled worlds. Every new phrase needed a new rule. Every exception needed a new line of code. It was like trying to build a map of every street in the world by hand - impossible at scale.

The Rise of Statistics

The 1980s brought a shift. Instead of writing rules, researchers started counting. They fed computers millions of sentences from books, newspapers, and transcripts. The idea? If "I want to" is often followed by "book a flight," then the computer should guess that next word. This was the birth of N-grams. A trigram, for example, looked at the last two words and picked the most likely third. Suddenly, machines could handle new phrases without being told. But there was a catch: they forgot context fast. If you said, "I went to the store. I bought milk. I need more," the system had no idea "more" meant milk. It only saw pairs or triplets. Long sentences? Too much to remember. This was the curse of dimensionality - too many combinations, not enough memory.

Neural Networks Enter the Game

By the 1990s, researchers started building networks that mimicked the brain. Early neural nets could learn patterns from data, but they were terrible with sequences. They treated each word like a separate puzzle piece, not part of a story. Then came RNNs - Recurrent Neural Networks. These could remember the last few words by looping information back. It was a breakthrough. But they had a fatal flaw: the longer the sentence, the more they forgot. Imagine trying to recall the beginning of a book after reading 300 pages. That’s what RNNs did. They lost track.

LSTMs and the Memory Breakthrough

In 1997, a team at ETH Zurich solved this with LSTMs - Long Short-Term Memory networks. Think of them as a brain with sticky notes. Instead of forgetting everything, LSTMs had gates: one to forget unimportant stuff, one to remember new info, and one to output what mattered. Suddenly, machines could follow a conversation across paragraphs. Machine translation got better. Speech recognition improved. But even LSTMs had limits. They still processed words one at a time - slow, sequential, and inefficient. And they couldn’t easily link "Denver" to "flight" if they were far apart in a sentence.

The Transformer Revolution

All of that changed in 2017. Google released a paper called "Attention Is All You Need." It introduced the transformer - a model that didn’t process words in order. Instead, it looked at the whole sentence at once. Using attention, it could say, "The word 'flight' matters most when I see 'book' and 'Denver.'" This wasn’t just faster. It was revolutionary. Transformers could handle thousands of words in parallel. They didn’t forget. They didn’t struggle with long sentences. They just… understood.

The Scaling Explosion

Once transformers were in place, the real race began: bigger models. BERT, released in 2018, read text both forward and backward - so it knew not just what came before, but what came after. GPT-1, GPT-2, and GPT-3 followed, each with more parameters than the last. By GPT-3, the model had 175 billion adjustable weights. That’s more than the number of synapses in a human brain. It wasn’t programmed. It learned by reading the internet. It learned to write poems, answer questions, and even write code - all from a single training process.

Today’s LLMs: Reasoning, Not Just Responding

By 2025, models like GPT-5 didn’t just generate text. They thought. They solved math problems on the AIME 2025 exam - perfectly. They didn’t memorize answers. They worked them out step by step. How? Training changed. Instead of just predicting the next word, models were trained to reason. They learned to check their own work. To generate multiple solutions. To pick the best one. DeepSeek R1-Zero showed that even without examples, a model could learn to reason just by being rewarded for correct logic. This wasn’t imitation. It was understanding.

How Models Are Trained Now

Modern LLMs aren’t trained in one step. It’s a pipeline. First, pre-training: reading every book, article, and code snippet they can find. Then, supervised fine-tuning: showing them exactly how to answer questions. Then, reinforcement learning from human feedback - humans rank answers, and the model learns what feels right. Some now skip the reward model entirely, using Direct Preference Optimization to go straight from human choices to better outputs. It’s not magic. It’s data, feedback, and massive computing power.

What’s Next?

The field is moving beyond just size. GPT-5 handles 400K tokens - that’s like reading a 1,000-page book in one go. OpenAI released open-source versions like gpt-oss-120b so anyone can experiment. The focus is shifting from raw scale to smarter inference. Models like o3 don’t just spit out one answer. They generate five, evaluate them, and refine the best. This test-time compute - thinking harder at the moment of response - is becoming as important as training. The goal isn’t just to answer faster. It’s to answer better.

Why This Matters

This evolution wasn’t about making chatbots more clever. It was about removing barriers. Rule-based systems were brittle. Statistical models were shallow. RNNs forgot. LSTMs were slow. Transformers broke the bottleneck. Now, LLMs don’t just understand language - they reason, create, and adapt. They’re not perfect. They still hallucinate. But they’re learning to self-correct. In 2026, the line between human and machine language isn’t about grammar. It’s about depth. And that’s a new kind of intelligence.

What was the biggest limitation of rule-based NLP systems?

Rule-based systems could only handle exactly what they were programmed for. If you said something unexpected - like "I lost my dog" - they had no rule to respond. Every new phrase needed a new line of code, making them impossible to scale beyond tiny, controlled tasks.

How did statistical models improve on rule-based systems?

Statistical models learned from data instead of rules. By counting word patterns in millions of sentences, they could guess what came next - even if they’d never seen that exact phrase before. This made them far more flexible, though they still struggled with long-range context and memory.

Why did RNNs fail at long sequences?

RNNs suffered from the vanishing gradient problem. As sentences got longer, the model lost track of earlier words. It was like trying to remember the first page of a novel while reading the last - the connection faded. This made them useless for tasks requiring context across paragraphs.

What made transformers so different from earlier models?

Transformers processed entire sentences at once using attention mechanisms. Instead of reading word by word, they could instantly see which words mattered most - like linking "flight" to "Denver" even if they were far apart. This allowed parallel processing, massive scaling, and far better context retention.

How do modern LLMs like GPT-5 learn to reason?

Modern LLMs use specialized training techniques like reinforcement learning with process rewards. Instead of just rewarding the final answer, they reward correct reasoning steps. Models are trained to generate multiple solutions, check their logic, and refine - turning them from pattern matchers into problem solvers.

What is test-time compute, and why is it important?

Test-time compute means spending more time thinking at the moment of response - not during training. Models like o3 generate several answers, evaluate them, and pick the best. It trades speed for accuracy, letting models think harder when answering - a major step beyond just scaling up training data.

Comments

ravi kumar

Really appreciate this breakdown. I’ve been working with rule-based systems in legacy banking software, and the frustration was real. One typo in a customer’s message and the whole thing crashed. Seeing how we moved from that to LLMs feels like going from a bicycle to a rocket.

Still, sometimes I miss when systems were predictable. Now, when an AI says something weird, you can’t just patch it-you gotta retrain the whole thing. Not always better, just different.

March 24, 2026 AT 01:45

Megan Blakeman

Wow... just... wow. 😭 I didn’t think I’d get emotional reading about NLP history, but this? This is like watching a child grow up and become a philosopher. The way transformers just... *see* the whole sentence? That’s not code. That’s poetry. I’m crying. I’m so proud of us. 🫶

March 24, 2026 AT 23:32

Akhil Bellam

Let’s be real-this whole ‘LLMs understand language’ narrative is peak anthropomorphism. You feed a model 10^14 tokens and call it ‘reasoning’? Please. It’s just a probabilistic autocomplete with a fancy name. GPT-5 didn’t ‘solve’ math-it memorized patterns from 10,000 AIME problems disguised as ‘steps.’

Real intelligence doesn’t need 175B parameters. Real intelligence knows when to say ‘I don’t know.’ This? This is just very expensive mimicry. And it’s terrifying how many people think it’s sentient.

Also, ‘test-time compute’? That’s just putting a Band-Aid on a broken model. We’re not advancing. We’re just slapping GPUs on a house of cards.

March 25, 2026 AT 10:30

Amber Swartz

OMG I JUST CRIED READING THIS. I mean, like, full ugly cry. 😭😭😭

Remember when we used to think AI would just be robots? But nooo-it’s like… it’s like language became alive. And now it’s writing poems and solving math like it’s breathing? I’m not ready for this. I’m not ready to live in a world where the machine knows me better than my therapist.

Also, who else is scared that one day it’ll just… stop asking for permission? I need a hug.

March 25, 2026 AT 20:38

Robert Byrne

Correction: LSTMs didn’t ‘lose track’-they were *designed* to forget. That’s not a flaw, it’s a feature. You don’t want a model holding onto every irrelevant word like a hoarder. Attention mechanisms? Brilliant. But let’s not pretend they’re magic. The real breakthrough was scaling data + compute + architecture in unison. You can’t credit one component.

Also, ‘GPT-5 solved AIME’? No. It *simulated* solving it. Big difference. And you call that ‘reasoning’? Please. It’s pattern recognition with confidence intervals. Stop romanticizing math. It’s not thinking. It’s counting.

March 27, 2026 AT 14:04

Tia Muzdalifah

so like… i just read this whole thing and i’m like… wow. i didnt even know nlp started with like, eliza? and now its writing code? wild. i think its kinda beautiful how we went from ‘why are you sad’ to ‘let me solve this calculus problem step by step’. kinda like evolution but with computers. 🤖💖

March 28, 2026 AT 00:13

Zoe Hill

This is so beautiful. I love how we went from rigid rules to something that feels… alive. Not perfect, no-but trying. Learning. Improving. That’s what makes it human. Even if it’s not human, it’s learning to be better. And that’s the most hopeful thing I’ve read all year.

Also, ‘test-time compute’? Yes. Let’s let it think. Let it pause. Let it wonder. We’re not in a rush anymore. 🌱

March 28, 2026 AT 16:11

Albert Navat

Look, I’ve been in this field since 2010, and I’ve seen every hype cycle. Transformers? Attention? All just glorified matrix multiplication with better packaging. The real innovation? The fact that we stopped caring about interpretability and just threw compute at the problem. That’s not science. That’s engineering by brute force.

And ‘reasoning’? Please. We’re training models to output what humans *want* to hear, not what’s logically sound. The AIME ‘solution’? It’s a statistical echo of the training data. No self-reflection. No meta-cognition. Just a very large, very expensive autocomplete.

Don’t get me wrong-I’m impressed. But don’t call it intelligence. Call it scale. And call it scary.

March 30, 2026 AT 02:22